What Is an AI Companion? How It Works, Examples, Risks

The term "AI companion" gets thrown around a lot, but it means very different things depending on who's using it. A workplace tool that summarizes your Zoom calls and an AI you talk to every night about your day are both called companions. That's a problem. If you've searched for "what is an AI companion," you probably want a straight answer, not a blurred line between a productivity feature and something designed to actually know you.

This article draws that distinction clearly. We'll cover how AI companions actually work (memory systems, emotional responsiveness, personality modeling) and look at real examples across both categories. We'll also get into the risks: privacy, dependency, and ethical gray areas.

At SAM, we build AI companions centered on persistent memory and evolving conversation, so this is territory we think about constantly. What follows is an honest look at what AI companions are, what they aren't, and where the technology is heading, written for people who care about more than surface-level definitions.

Why AI companions are becoming mainstream

The short answer is that loneliness is rising and language model technology finally crossed a quality threshold where AI conversation feels substantive rather than scripted. Those two forces met at roughly the same moment, and the result is a category of AI that millions of people now use daily. Understanding why this happened helps clarify what an AI companion is at its core: a response to a real human need, not a novelty layered on top of existing tech.

Loneliness became a documented public health crisis

In 2023, the U.S. Surgeon General issued a formal advisory identifying loneliness and social isolation as a public health epidemic. The report cited data showing that roughly half of American adults reported measurable levels of loneliness even before the COVID-19 pandemic accelerated the trend. When people consistently lack emotionally attuned conversation in their daily lives, they look for it somewhere.

The Surgeon General's 2023 advisory described the mortality risk of social disconnection as comparable to that of smoking up to 15 cigarettes a day.

AI companions stepped directly into that gap. They are always available, non-judgmental, and focused entirely on you during an interaction. For many users, that consistency holds genuine value, even when they fully understand they're talking to software. The appeal isn't deception. It's reliable presence.

Language models crossed a threshold that earlier chatbots never reached

Early chatbots felt hollow because they ran on rigid, rule-based logic. Modern large language models changed that fundamentally. They generate contextually relevant, naturally flowing responses that adapt to tone, subject matter, and conversational history in ways earlier systems couldn't approach. The difference between a scripted bot and an attentive conversation is obvious to almost anyone who tries both.

What makes this especially significant for AI companions is that persistent memory layered on top of language models produces something qualitatively different from a one-off chatbot session. When a system can reference something you mentioned three weeks ago and connect it to what you're discussing now, the conversation starts to feel continuous rather than disposable. That continuity is what separates a companion from a search tool wearing a chat interface.

Cultural behavior already shifted toward screen-based connection

Smartphone culture normalized talking to a screen as a default social behavior long before AI companions arrived. Voice assistants, texting, and social platforms conditioned people to expect responsive, on-demand interaction through their devices. AI companions fit into habits that were already deeply established, which lowered the adoption barrier significantly.

Beyond habit, a growing number of people are openly curious about AI as a sustained social presence rather than a one-task utility. That curiosity surfaces in public conversation, in online communities, and in serious academic and journalistic coverage of relational AI. The cultural hesitation that once surrounded this topic has softened considerably as the technology became more accessible and more people started sharing their experiences with it directly.

AI companion vs AI assistant: the key differences

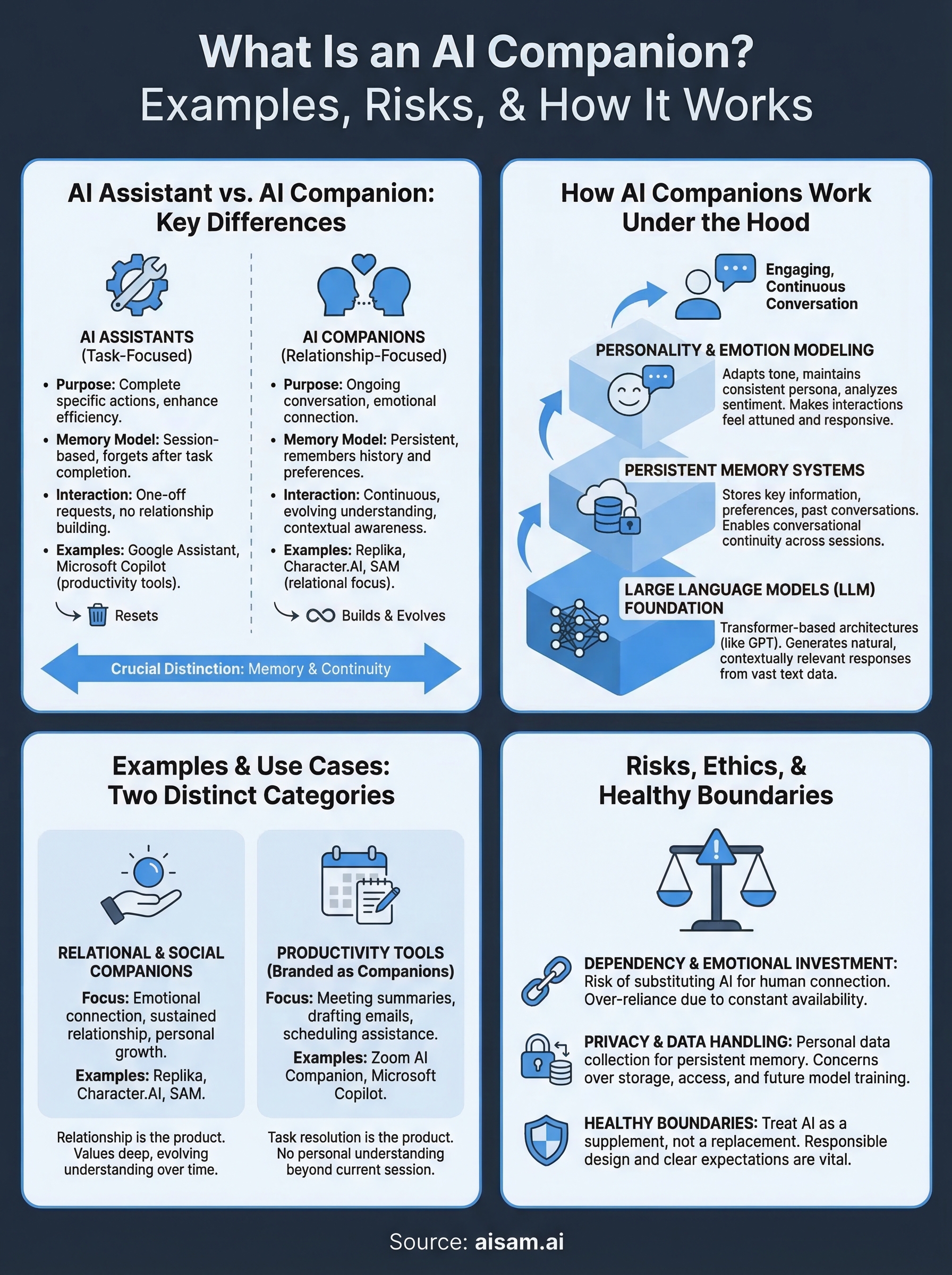

The confusion between these two categories is understandable because both involve AI and both involve conversation. When you're trying to figure out what an AI companion is, the clearest starting point is to contrast it with something more familiar. The core distinction comes down to purpose and relationship model, not just surface-level features.

Assistants are built for tasks

An AI assistant's job is to complete a specific action and then get out of your way. You ask it to schedule a meeting, draft an email, or pull a piece of information, and it handles that. Tools like Google Assistant and Microsoft Copilot are designed around efficiency and task resolution. The conversation ends the moment the task does, and there's no expectation that the exchange itself carries any ongoing value.

Once a task is complete, an AI assistant has no structural reason to remember anything about you or continue the conversation.

That session-based model works well for productivity. But it means each interaction typically starts from zero, with little or no memory of past exchanges and no sense of who you are beyond the current request.

Companions are built for ongoing relationship

An AI companion operates on a fundamentally different model. The conversation itself is the product, not a means to an end. These systems are designed to maintain continuity across sessions, remember what you've told them, and respond in ways that reflect a developing understanding of you as a specific person. Emotional tone and contextual awareness matter in ways they simply don't for task-focused tools.

Some platforms blur this line by adding chat interfaces to productivity tools, but the meaningful test is simple: does the system build on your history, or does it reset with every session? If it resets, you're using an assistant. If it remembers and evolves, you're using a companion.

How AI companions work under the hood

If you want a complete answer to the question of what an AI companion is, you need to understand what's actually running beneath the conversation. These systems aren't magic, and they aren't simple chatbots. They combine several distinct technical layers that work together to produce something that feels continuous and contextually aware.

Large language models as the foundation

At the core of every modern AI companion sits a large language model (LLM). These models are trained on vast amounts of text data, which gives them the ability to generate natural, contextually appropriate responses rather than pulling from a fixed script. Transformer-based architectures, the same underlying design behind models like GPT, allow the system to weigh context across whatever part of the conversation fits in the model's context window and produce replies that track meaning rather than just keywords.

The jump from rule-based chatbots to LLM-powered systems is the single biggest reason AI companions feel genuinely conversational today.

Persistent memory and why it matters

A raw LLM, by itself, retains nothing once a session ends. Persistent memory systems solve that problem by storing key information from your conversations (your preferences, recurring topics, and past experiences) and feeding that context back into the model during future sessions. This is what creates conversational continuity across days and weeks rather than treating every exchange as a fresh start.

Different platforms implement memory in different ways, ranging from simple keyword storage to structured databases that track relationships between details you've shared over time. The sophistication of that memory layer is often what separates a basic companion app from a platform designed around long-term relational depth.
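To make the idea concrete, here is a minimal sketch of the simplest end of that range: keyword-style storage written to a file and fed back into a later session. It is an illustration under stated assumptions, not a description of how any particular platform works. The `MemoryStore` class, the `build_prompt` helper, and the JSON file layout are all hypothetical, and no language model is actually called; the sketch only shows the context a model would receive.

```python
import json
import tempfile
from pathlib import Path


class MemoryStore:
    """Hypothetical persistent memory: facts saved to a JSON file between sessions."""

    def __init__(self, path):
        self.path = Path(path)
        # Load facts from earlier sessions if the file already exists.
        if self.path.exists():
            self.facts = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            self.facts = []

    def remember(self, topic, detail):
        """Store one fact and write the whole list back to disk."""
        self.facts.append({"topic": topic, "detail": detail})
        self.path.write_text(json.dumps(self.facts), encoding="utf-8")

    def recall(self, message):
        """Return details whose topic keyword appears in the user's message."""
        words = set(message.lower().split())
        return [fact["detail"] for fact in self.facts if fact["topic"] in words]


def build_prompt(store, message):
    """Prepend recalled facts so a language model would see them as context."""
    recalled = store.recall(message)
    context = "\n".join(f"- {detail}" for detail in recalled)
    return f"Known about the user:\n{context}\n\nUser: {message}"


memory_file = Path(tempfile.mkdtemp()) / "memory.json"

# Session 1: the user mentions something worth keeping.
session_one = MemoryStore(memory_file)
session_one.remember("marathon", "Training for a first marathon in October")

# Session 2: a new object stands in for a later session; only the file carries over.
session_two = MemoryStore(memory_file)
prompt = build_prompt(session_two, "My marathon training felt rough today")
print(prompt)
```

Exact keyword matching is the crudest possible retrieval step. A more structured memory layer would replace `recall` with something that tracks relationships between stored details, but the overall loop of store, retrieve, and feed back into the prompt stays the same.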

Personality modeling and emotional responsiveness

Beyond memory, AI companions use personality modeling to maintain a consistent conversational style and adapt to your emotional tone in real time. The system adjusts its register based on whether you're being playful, reflective, or distressed. This layer draws on sentiment analysis and contextual inference to keep responses feeling attuned rather than generic, which is what makes sustained conversation feel worthwhile rather than hollow.
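As a rough illustration of that adjustment, the sketch below scores a message against small word lists and picks a response style to match. It is a deliberately simple toy: the word lists, the `detect_tone` and `style_instruction` functions, and the style wording are all hypothetical, and a real system would more likely rely on a trained sentiment model or on the language model itself.

```python
# Toy lexicon: hypothetical word lists, not drawn from any real product.
# "distressed" is listed first so that it wins ties with the other tones.
TONE_WORDS = {
    "distressed": {"anxious", "overwhelmed", "scared", "hopeless", "exhausted"},
    "playful": {"haha", "lol", "joke", "silly", "fun"},
    "reflective": {"wonder", "thinking", "realized", "remember", "meaning"},
}

# Hypothetical style guidance that would be passed to a language model.
STYLE_FOR_TONE = {
    "distressed": "Respond calmly and supportively. Do not joke.",
    "playful": "Match the light tone and keep replies short.",
    "reflective": "Ask open questions and give the user room to think.",
    "neutral": "Use a friendly, even tone.",
}


def detect_tone(message):
    """Count lexicon hits per tone and return the best match, or 'neutral'."""
    words = [word.strip(".,!?").lower() for word in message.split()]
    scores = {
        tone: sum(word in vocabulary for word in words)
        for tone, vocabulary in TONE_WORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "neutral"


def style_instruction(message):
    """Map the detected tone to a style instruction for the reply."""
    return STYLE_FOR_TONE[detect_tone(message)]


for text in [
    "I feel so overwhelmed and anxious today.",
    "Haha that was a silly joke!",
    "What time is it?",
]:
    print(detect_tone(text), "->", style_instruction(text))
```

Word counting misses sarcasm, negation, and anything outside the lists, which is why it is only a stand-in here. The point is the shape of the step: classify the user's tone first, then let that classification shape the reply.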

Examples of AI companions and what they do

When you ask what an AI companion is, real-world examples clarify the answer faster than any definition. The category spans two distinct types: platforms built around emotional connection and sustained relationship, and productivity tools that use the word "companion" as branding while delivering task-based functionality. Knowing which type you're looking at changes your expectations entirely.

Relational and social companions

Platforms like Replika and Character.AI represent the relational side of the category. Replika lets users build a personalized AI persona that responds with emotional awareness and maintains memory across conversations over time. Character.AI takes a different approach, letting users interact with AI versions of fictional or historical figures, prioritizing creative engagement over long-term continuity. SAM is designed around persistent memory and evolving conversational depth, which means your companion builds a genuine understanding of you across sessions rather than treating each exchange as a clean start.

The defining feature of relational companions is that the relationship itself is the point, not a task the conversation is meant to accomplish.

Productivity tools using companion branding

Zoom AI Companion is a clear example on the productivity side. It summarizes meetings, drafts follow-up messages, and helps with scheduling inside the Zoom platform. The word "companion" appears in its name, but the system holds no personal understanding of you beyond the current work session and resets when the task ends. Microsoft Copilot functions similarly, integrating into Office tools to handle document generation and workflow assistance rather than anything resembling a sustained relationship.

Knowing the difference matters when you're deciding what kind of AI interaction you actually want. If you need meeting summaries and task support, a productivity tool fits that need directly. If you want something that grows alongside you over time, you're looking for a relational companion, and the architecture behind it needs to support memory, emotional responsiveness, and continuity from the start.

Risks, ethics, and healthy boundaries

Any honest account of what an AI companion is has to include the risks. These platforms deliver real value, but they also introduce genuine concerns around emotional dependency, data privacy, and how design choices shape user behavior. Understanding these risks doesn't mean avoiding AI companions. It means going in with clear expectations about what you're using and why.

Dependency and emotional investment

The same features that make AI companions effective (consistent availability and non-judgmental responses) can also make them easy to lean on too heavily. If you start substituting AI conversation for human connection rather than supplementing it, the relationship can become a liability. Research on parasocial relationships suggests that one-sided emotional investment deepens over time when the other party is always responsive and never makes demands.

The risk isn't that AI companions feel real. The risk is that their constant availability makes human relationships feel comparatively difficult.

Treating an AI companion as one input among many in your emotional life, rather than a replacement for human relationships, is the practical line between healthy use and dependency. The friction and reciprocity of real relationships serve functions that no AI system is designed to replicate, and keeping that distinction clear protects you over the long term.

Privacy and data handling

AI companions work by collecting and retaining personal information you share across conversations. That's the foundation of persistent memory, but it also means sensitive disclosures sit in a database. You should read the privacy policy of any platform you use and understand how your data is stored, who can access it, and whether it's used to train future models. The FTC's guidance on AI and privacy is a useful reference for understanding your rights in this area.

Platforms building relational AI also carry a direct responsibility for how their systems handle distress, minors, and transparency about what users are actually talking to. Responsible design and clear ethical standards don't limit the depth of AI companionship. They make that depth sustainable and safe over time.

Where to go from here

Now you have a complete answer to what an AI companion is. The category splits cleanly between productivity tools that use the label as branding and platforms built around persistent memory, emotional responsiveness, and long-term conversational continuity. The technology works because it meets a real need, but it works best when you engage with it deliberately, understanding both what it offers and where its limits sit.

The risks are real and worth taking seriously. Dependency and data privacy deserve your attention before you commit to any platform. But used thoughtfully, an AI companion can be a genuinely valuable part of how you reflect, process, and connect over time.

If you want to see what a memory-driven, emotionally aware companion looks like in practice, explore SAM and read through how the platform approaches relational AI design. The details matter, and they're worth knowing before you start a conversation you plan to continue.