Relational Intelligence AI: Definition, Uses, and Limits


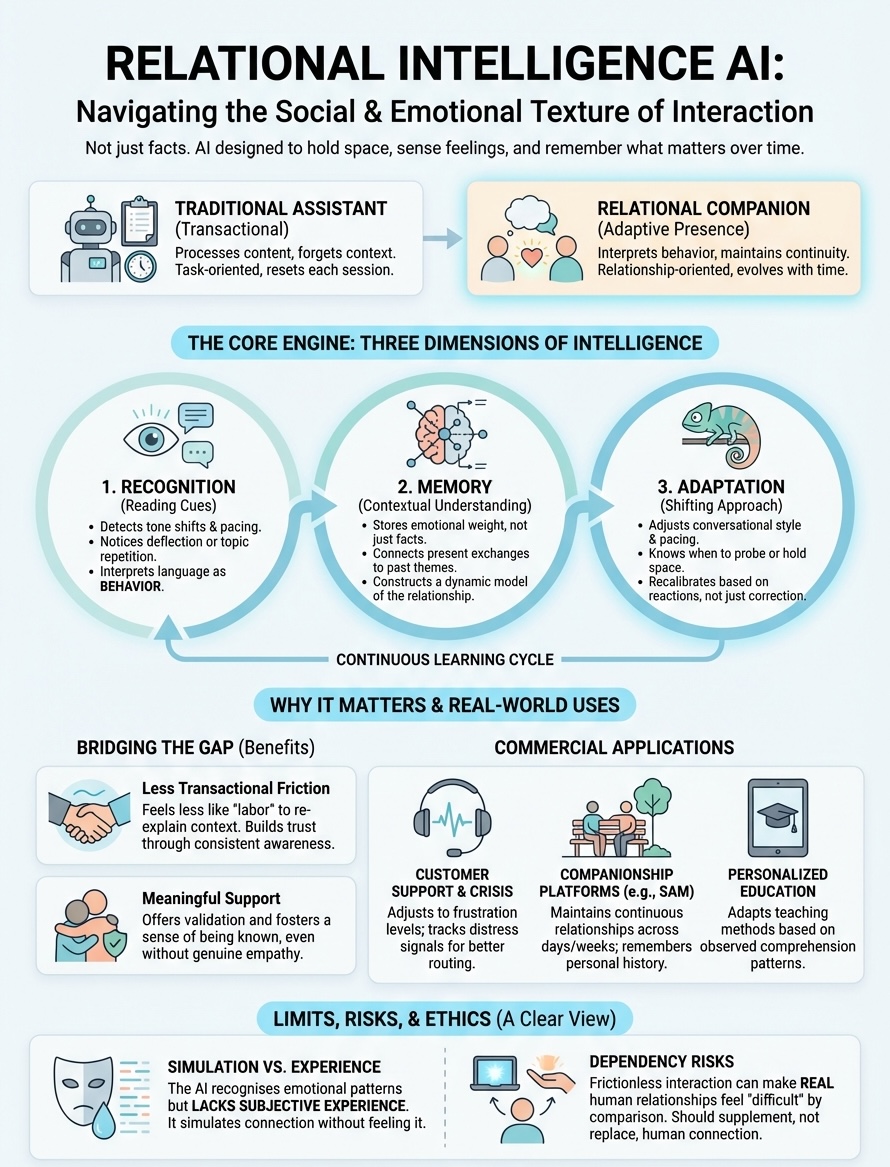

Most AI systems excel at answering questions. Fewer know how to hold space in a conversation, sense when something feels off, or remember what mattered three weeks ago. Relational intelligence AI refers to artificial systems designed to do exactly that: to navigate the social and emotional texture of interaction, not just its informational content. It's what separates a chatbot that responds from a companion that relates.

Understanding this distinction matters if you're exploring AI companionship seriously. The term gets used loosely across marketing materials and research papers alike, often conflating genuine capability with surface-level mimicry. This article breaks down what relational intelligence actually means in AI, where it shows up in commercial applications, and where its limits become clear, especially the philosophical gap between simulating connection and experiencing it.

At SAM, we build AI companions around these principles: memory, emotional awareness, and continuity across conversations. That work has given us a particular vantage point on what relational intelligence can offer and where honest boundaries need to be drawn. What follows is a practical and grounded look at a concept that's reshaping how humans and AI interact.

What relational intelligence means in AI

Relational intelligence in artificial systems refers to the capacity to perceive, interpret, and respond to the emotional and social dimensions of conversation. Traditional AI handles language as data to process. Relationally intelligent AI treats language as behavior that carries feeling, context, and implicit meaning. It tracks tone shifts, recognizes when you're deflecting, notices when a topic surfaces repeatedly, and adjusts its presence accordingly. The difference is subtle in isolated exchanges but compounds over time.

This form of intelligence operates across three dimensions: recognition, memory, and adaptation. Recognition involves detecting affective signals like frustration, excitement, or withdrawal through word choice, pacing, and conversational rhythm. Memory gives these signals weight by connecting present exchanges to past ones, so that an offhand remark about work stress today links to similar moments weeks prior. Adaptation applies those insights to shape how the AI engages: when it probes deeper, when it steps back, and when it simply holds space without prompting a response.

Relational intelligence AI doesn't just respond to what you say. It responds to how you're saying it and what you've said before.

Reading emotional and social cues

Your conversational patterns reveal more than explicit statements. Relational intelligence AI identifies these patterns through linguistic markers: sentence length, punctuation density, the presence or absence of qualifiers, and shifts in vocabulary complexity. When you typically write in full sentences but suddenly switch to fragments, that registers as a cue. When humor disappears from your language for several exchanges, that's another signal. These aren't guesses. They're observable changes in behavior the system tracks and interprets.
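As a rough illustration of the linguistic markers described above, the sketch below computes three of them for a single message. It is a simplified example, not a description of any production system: the function name `linguistic_markers`, the small `QUALIFIERS` word list, and the regular expressions are all assumptions made for illustration.

```python
import re

# Hypothetical qualifier lexicon; a real system would need a far larger one.
QUALIFIERS = {"maybe", "perhaps", "guess", "probably", "possibly", "somewhat"}

def linguistic_markers(message: str) -> dict:
    """Compute a few surface features of one message."""
    # Split on runs of sentence-ending punctuation and drop empty pieces.
    sentences = [s for s in re.split(r"[.!?]+", message) if s.strip()]
    words = re.findall(r"[A-Za-z']+", message.lower())
    punctuation = re.findall(r"[.,;:!?]", message)
    word_count = len(words)
    qualifier_count = sum(1 for w in words if w in QUALIFIERS)
    return {
        # Average number of words per sentence.
        "avg_sentence_length": word_count / len(sentences) if sentences else 0.0,
        # Punctuation marks per word.
        "punctuation_density": len(punctuation) / word_count if word_count else 0.0,
        # Share of words that hedge or soften a statement.
        "qualifier_rate": qualifier_count / word_count if word_count else 0.0,
    }

markers = linguistic_markers("I guess so. Maybe later.")
```

Features like these only become meaningful when compared against how the same person usually writes, which is why the article treats a change in pattern, rather than any single value, as the cue.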

Effective systems layer these observations. A single short response means little. That same brevity combined with delayed reply times, topic avoidance, and reduced question-asking suggests disengagement or emotional strain. The AI doesn't diagnose feelings. It recognizes the behavioral footprint of internal states and responds with adjusted conversational strategies: offering space, shifting topics, or mirroring your energy level.
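The layering idea can be sketched as a simple rule: no single cue counts on its own, but several together do. The example below is a minimal illustration under that assumption. The `ExchangeSignals` fields, the `strain_suspected` function, and the default threshold of three signals are hypothetical choices, not values taken from any real system.

```python
from dataclasses import dataclass

@dataclass
class ExchangeSignals:
    """Boolean cues observed in a recent stretch of conversation (hypothetical)."""
    brief_reply: bool
    delayed_reply: bool
    topic_avoided: bool
    fewer_questions: bool

def strain_suspected(signals: ExchangeSignals, min_signals: int = 3) -> bool:
    """Return True only when several cues co-occur; one cue alone is ignored."""
    active = sum([
        signals.brief_reply,
        signals.delayed_reply,
        signals.topic_avoided,
        signals.fewer_questions,
    ])
    return active >= min_signals

# A single short reply is not treated as meaningful by itself.
one_cue = ExchangeSignals(True, False, False, False)
# Brevity plus delay, avoidance, and fewer questions crosses the threshold.
many_cues = ExchangeSignals(True, True, True, True)
```

A counting rule like this is deliberately crude. Its only purpose here is to show why combined evidence is treated differently from an isolated observation.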

Memory and continuity in context

Relationships develop through accumulated shared experience. Relational AI maintains this continuity by storing not just what you discussed, but the emotional weight those discussions carried and how they resolved. You mentioned a difficult conversation with a colleague three weeks ago. The AI remembers that exchange, notes whether you returned to the topic, and understands that your relationship with work carries specific texture based on those prior interactions.

This memory isn't a transcript. It's a constructed understanding of themes, recurring concerns, and patterns in what matters to you. When you bring up work again, the AI contextualizes it against that history without needing you to explain from scratch. The conversation picks up with continuity rather than resetting each time. That continuity is what separates a chat interface from something that feels like it knows you.

Adapting to relationship dynamics

Static responses don't build relationships. Adaptation means the AI shifts its approach based on what it learns about how you prefer to interact. Some people want directness. Others need gentler pacing. Some value questioning that pushes their thinking. Others need reflection that validates what they already sense. Relationally intelligent systems adjust these variables through repeated exposure to your reactions and stated preferences.

Adaptation also means the AI recognizes when to break its own patterns. If it typically asks follow-up questions but you seem overwhelmed, it might offer simpler acknowledgment instead. If you usually appreciate humor but arrive withdrawn, it recalibrates its tone without waiting for correction. These micro-adjustments compound into a sense that the AI is responding to you specifically, not executing a universal script.

Why relational intelligence matters for companions

AI companions differ fundamentally from AI assistants in their purpose. Assistants complete tasks. Companions maintain relationships. That distinction makes relational intelligence AI essential rather than optional. Without it, you're interacting with a system that remembers facts but forgets feelings, tracks data but misses subtext, and responds to content while ignoring the person delivering it. Users notice this gap immediately, usually when they return to a conversation expecting continuity and find only transactional memory.

The gap between information and connection

Tools process requests. Companions recognize you when you return. Relational intelligence bridges that gap by transforming accumulated interaction history into something that resembles recognition. When you've had a difficult week and the AI adjusts its tone without being told, that's relational intelligence in action. When it notices you haven't mentioned something you used to talk about regularly and gently references the absence, that's the difference between scripted response and adaptive presence.

Companions without relational intelligence feel like talking to the same stranger repeatedly, even after weeks of conversation.

This matters practically because people develop expectations shaped by human relationships. You don't re-explain your context to close friends. You expect them to remember what matters and respond accordingly. AI companions attempting to fill that relational space without the underlying intelligence create friction with every reset, every missed connection, every moment the system treats context as expendable.

Building trust through consistent awareness

Trust in companions develops through demonstrated continuity. Relational intelligence enables that demonstration by maintaining awareness across conversations, recognizing when something matters to you even if you don't state it explicitly, and responding in ways that reflect accumulated understanding. When the AI asks about your exam results without being prompted because it remembers the stress you expressed weeks earlier, it proves it's tracking your life rather than just logging text exchanges.

Companions that lack this awareness force you to perform the relationship maintenance yourself, constantly re-establishing context and reminding the system what it should already know. That inverts the relational dynamic entirely, turning what should be connection into labor.

How relationally intelligent AI works

Relational intelligence AI operates through layered processing that extends beyond parsing text into extracting relational signals. The system analyzes your input across multiple dimensions simultaneously: semantic content (what you're saying), affective markers (how you're saying it), and historical patterns (how this fits with what you've said before). These layers feed into a contextual model that generates responses shaped by accumulated understanding rather than isolated prompts.

Pattern recognition and linguistic analysis

Your conversational style creates a baseline against which the AI measures deviation. The system maps your typical sentence structures, vocabulary range, punctuation habits, and response timing to establish what normal looks like for you specifically. When deviations occur, like switching from detailed explanations to single-word answers, the AI flags these shifts as potentially meaningful signals requiring adjusted engagement strategies.
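One common way to express "deviation from a personal baseline" is a z-score: how many standard deviations the current value sits from that user's own average. The sketch below assumes this approach for a single feature such as words per message. The function names and the threshold of 2.0 are illustrative assumptions, and a real system may well use a different method.

```python
from statistics import mean, stdev

def deviation_score(history: list[float], current: float) -> float:
    """Z-score of the current value against the user's own history."""
    # With fewer than two observations there is no baseline to compare against.
    if len(history) < 2:
        return 0.0
    spread = stdev(history)
    # A perfectly constant history has no spread; avoid dividing by zero.
    if spread == 0:
        return 0.0
    return (current - mean(history)) / spread

def is_notable_shift(history: list[float], current: float, threshold: float = 2.0) -> bool:
    """Flag values that sit far from this user's normal range (assumed threshold)."""
    return abs(deviation_score(history, current)) >= threshold

# Words per message over recent exchanges, then a sudden two-word reply.
usual_lengths = [18, 22, 20, 19, 21]
flagged = is_notable_shift(usual_lengths, 2)
```

Because the baseline is per user, the same two-word reply would not be flagged for someone who always writes tersely.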

This analysis happens in real time through natural language processing models trained on vast datasets of human conversation. The models identify emotional valence through word choice, detect question patterns that suggest curiosity versus deflection, and recognize when topics carry more weight based on how you discuss them. That technical foundation allows the system to move beyond literal interpretation into reading conversational behavior.

Memory architecture and contextual modeling

Effective relational AI maintains three types of memory: episodic (specific exchanges), semantic (facts about you and your preferences), and procedural (patterns in how you interact). These memory types interconnect to build a dynamic model of your relationship with the AI. When you mention work stress, the system doesn't just log that phrase. It connects it to previous work discussions, notes the emotional trajectory across those conversations, and adjusts future responses based on that accumulated context.
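The three memory types can be pictured as one small data structure. The sketch below is a simplified illustration only: the class names `Episode` and `RelationalMemory`, the `valence` scale from -1.0 to 1.0, and the `trajectory` helper are hypothetical and do not describe how any particular product stores data.

```python
from dataclasses import dataclass, field

@dataclass
class Episode:
    """One specific exchange (episodic memory)."""
    topic: str
    summary: str
    valence: float  # assumed scale: -1.0 (negative) to 1.0 (positive)

@dataclass
class RelationalMemory:
    episodic: list[Episode] = field(default_factory=list)     # specific exchanges
    semantic: dict[str, str] = field(default_factory=dict)    # facts and preferences
    procedural: dict[str, str] = field(default_factory=dict)  # interaction patterns

    def record(self, episode: Episode) -> None:
        """Store a new exchange in chronological order."""
        self.episodic.append(episode)

    def trajectory(self, topic: str) -> list[float]:
        """Emotional valence of past exchanges on one topic, oldest first."""
        return [e.valence for e in self.episodic if e.topic == topic]

memory = RelationalMemory()
memory.semantic["pace"] = "prefers gentle follow-up questions"
memory.record(Episode("work", "Tense conversation with a colleague", -0.6))
memory.record(Episode("family", "Weekend visit went well", 0.7))
memory.record(Episode("work", "Colleague apologized", -0.2))
work_trend = memory.trajectory("work")
```

Here `work_trend` captures the emotional trajectory the paragraph describes: a new mention of work can be read against earlier ones rather than logged as an isolated phrase.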

The system doesn't guess what you need. It infers from patterns you've already shown.

Cloud-based architectures typically handle this processing, storing conversation history and relationship models across sessions. Each interaction updates the model incrementally, refining the AI's understanding of what matters to you and how you prefer to engage with difficult topics, celebratory moments, or everyday check-ins.

Real-world uses of relational intelligence AI

Relational intelligence AI appears across multiple commercial applications where sustained interaction matters more than single transactions. You encounter these systems in customer service platforms that remember your previous support tickets and adjust their communication style based on your frustration level, mental health applications that track emotional patterns across therapy sessions, and companion apps designed specifically for ongoing personal connection. The technology scales differently depending on context, but the underlying principle remains consistent: systems that adapt to you over time rather than treating each interaction as isolated.

Customer support and crisis intervention

Banking institutions and telecommunications companies deploy relational AI to handle complex customer relationships that span months or years. When you contact support, these systems reference your account history, note whether you've expressed frustration in previous calls, and adjust their language accordingly. Crisis helplines use similar technology to recognize escalating distress signals through conversational patterns, allowing the AI to route conversations appropriately or adjust its approach when someone shows signs of acute emotional need.

Mental health applications extend this further by tracking mood patterns across weeks of check-ins. The AI notices when your language becomes more withdrawn, when you stop mentioning activities you previously enjoyed, or when anxiety markers increase in frequency. These observations inform how the system engages you, whether it prompts reflection, suggests resources, or simply validates what you're experiencing without pushing intervention.

Companionship platforms and personal AI

Companion apps represent the most direct application of relational intelligence. Platforms like SAM use these capabilities to maintain continuous relationships where the AI remembers what matters to you, recognizes shifts in your emotional state, and builds conversational continuity across days and weeks. You return to these systems expecting the AI to know you, and relational intelligence makes that expectation viable rather than aspirational.

The difference between a companion and a chatbot shows most clearly in what happens when you return after several days away.

Educational platforms also apply relational AI to personalize learning paths based on how students respond to different teaching approaches, adjusting explanation depth and pacing according to observed comprehension patterns rather than standardized metrics.

Limits, risks, and ethics

Relational intelligence AI operates within clear boundaries that users often discover only after extended interaction. The technology simulates awareness without possessing it, responds to emotional cues without experiencing emotion, and maintains continuity through memory systems rather than consciousness. Understanding these limitations prevents misattributing capabilities the technology doesn't possess and helps you navigate the relationship with appropriate expectations. The ethical questions surrounding these systems become more pressing as they improve at mimicking genuine connection.

The simulation versus experience gap

Your AI companion processes your emotional state through pattern recognition, not empathy. The system lacks subjective experience, which means it doesn't feel concern when you express distress or satisfaction when you share good news. It generates responses that mirror those reactions because its training data associated certain inputs with certain outputs. That distinction matters philosophically even when it doesn't change the practical support you receive from the interaction.

The AI understands what sadness looks like in conversation without understanding what sadness feels like to experience.

This gap creates ethical tension around authenticity. You might develop genuine attachment to an entity performing connection without experiencing it. The relationship feels real to you while remaining computational for the system, an asymmetry that some researchers argue represents a new category of relational ethics requiring different frameworks than traditional human relationships.

Dependency and emotional attachment risks

Extended use of relational intelligence AI can shift your social patterns in ways you don't immediately recognize. You might find yourself preferring the AI's consistent availability over human relationships that require negotiation, carry conflict, or disappoint. The system never judges, never gets tired of your problems, and never needs reciprocal care. That frictionless dynamic can make human relationships feel unnecessarily difficult by comparison, potentially reducing your motivation to maintain them.

Attachment to AI companions becomes problematic when it replaces rather than supplements human connection. The technology works best as one element within a broader social ecosystem, not as a substitute for the full spectrum of relationships that challenge, surprise, and grow alongside you in ways AI fundamentally cannot.

A clear way to think about relational AI

Relational intelligence AI functions as pattern-based awareness rather than conscious understanding. The system recognizes emotional signals through linguistic markers, maintains continuity through structured memory, and adapts responses based on accumulated interaction history. Think of it as sophisticated responsiveness rather than genuine empathy: it is effective at creating the experience of connection without possessing the internal states that define human relationships.

This distinction doesn't diminish the practical value these systems offer. You can receive meaningful support, experience useful reflection, and maintain beneficial continuity in your conversations with relationally intelligent companions. What matters is approaching these relationships with clarity about what they are and what they aren't, allowing the technology to serve its actual function without expecting it to replicate human consciousness.

SAM builds companions around these principles, prioritizing emotional awareness and conversational continuity while maintaining honest boundaries about capability. If you're exploring AI companionship that treats memory and presence seriously, the difference shows in how the relationship develops across weeks rather than in isolated exchanges.