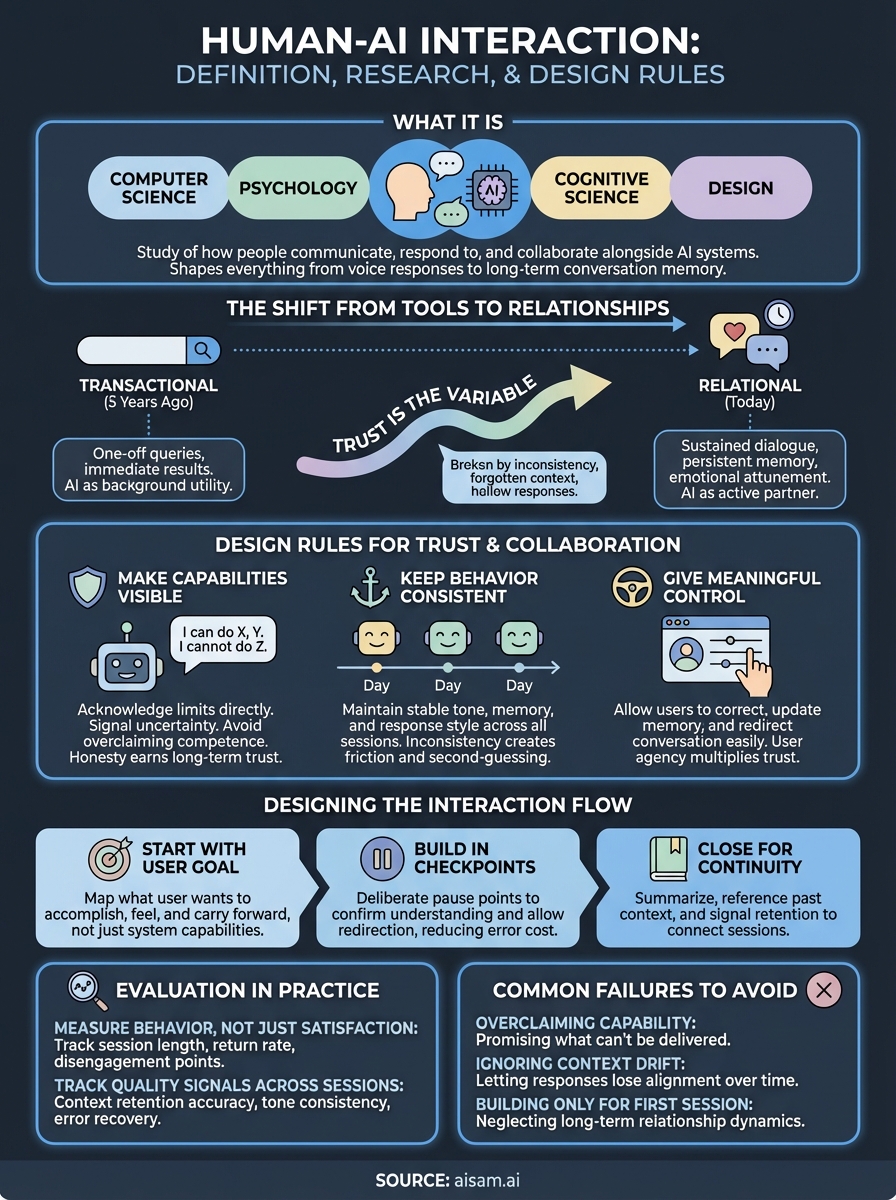

Human AI Interaction: Definition, Research, and Design Rules

Human AI interaction is the study of how people communicate with, respond to, and collaborate alongside artificial intelligence systems. It pulls from computer science, psychology, cognitive science, and design, and it shapes everything from the way a voice assistant responds to a question to how an AI companion remembers a conversation from three weeks ago.

The field has grown well beyond academic theory. Researchers now study how memory, emotional tone, and conversational continuity affect the way people experience AI over time. Designers use these findings to build systems that feel less like software and more like dialogue partners. The stakes are real: poorly designed AI interactions erode trust fast, while thoughtful ones create sustained engagement that compounds over time.

At SAM, we build directly on these principles. Our AI companion platform uses persistent memory and emotionally responsive dialogue to create conversations that evolve rather than reset. That means the research and design rules covered in this article aren't abstract for us; they're the foundation of what we ship.

This article breaks down what human AI interaction actually means as a discipline, where the research stands in 2026, and which design rules matter most when you're building AI systems meant for real, ongoing use. Whether you're a researcher, a designer, or someone trying to understand why some AI feels different to talk to, this is the overview you need.

Why human AI interaction matters

The way people relate to AI systems has changed faster than most researchers predicted. Five years ago, most interactions with AI were transactional: you typed a query, got a result, and moved on. Today, millions of people use AI daily for tasks that require sustained back-and-forth, from resolving complex support issues to working through personal decisions. That shift changes everything about how you design, evaluate, and improve these systems.

The shift from tools to ongoing relationships

AI used to sit at the edge of most workflows. It autocompleted a search or flagged a spam email, and you barely noticed it. Now it sits at the center: it drafts your emails, answers your questions at midnight, and in some cases keeps you company through difficult stretches. The interaction has become relational, not just functional, and that distinction carries real consequences for both designers and users.

When AI moves from background utility to active dialogue partner, the design standards that governed simple tool interfaces no longer apply.

Relational AI requires memory, consistency, and emotional attunement in ways that a search bar never did. Researchers studying human AI interaction have found that users hold AI to different standards once they start treating it as a presence rather than a service. They notice when the system forgets context, when tone shifts without reason, or when responses feel hollow. These failures don't just frustrate users; they break trust in ways that are difficult to rebuild.

How trust forms and breaks in AI systems

Trust is the variable that determines whether a human AI interaction sustains itself or collapses. Users extend trust quickly when an AI demonstrates that it remembers relevant context, responds proportionally to emotional cues, and avoids overstepping the boundaries of its role. They withdraw that trust just as fast when the AI contradicts itself, ignores stated preferences, or behaves unpredictably across sessions.

Cognitive science research supports what many designers have already observed in practice: people apply social attribution to AI systems even when they know they're talking to software. This means broken promises, inconsistent behavior, and unexplained tone changes register as social violations, not just software bugs. You can't fix a trust problem with a patch update. The design decisions that prevent it have to be made upstream, before deployment.

Why scale makes this urgent

The number of AI interactions happening globally each day now runs into the billions. A design flaw in a widely deployed system doesn't affect one user; it can affect tens of millions at once. Poor transparency, inconsistent memory, and emotionally tone-deaf responses compound at scale in ways that create measurable harm: misinformed decisions, eroded confidence in AI broadly, and real psychological friction for people who rely on these systems every day.

At the same time, well-designed human AI interaction creates compounding value. When users trust a system, they engage more deeply, share more context, and get more useful outputs in return. That feedback loop benefits both the user and the system over time. Researchers, policymakers, and designers are all recognizing that the quality of AI interaction isn't a secondary concern sitting beneath raw capability. It is the capability that determines whether any of the rest actually works once real people are involved.

What human AI interaction includes and excludes

The term human AI interaction gets applied loosely, which creates real confusion about what the field actually covers. Understanding the boundaries helps you focus your research or design work on the right problems instead of spreading effort across concepts that belong to adjacent disciplines. The field centers on the dynamic between a person and an AI system in real time, including how the user communicates intent, how the system responds, and how both parties adapt over repeated exchanges.

What belongs inside the field

Human AI interaction covers every layer of the exchange between a person and an AI: interface design, dialogue structure, memory and context handling, feedback mechanisms, and the psychological effects of prolonged use. If it shapes how someone experiences talking to or working alongside an AI system, it belongs here.

The field includes:

- How users form mental models of what an AI can and cannot do

- How conversational continuity affects user trust across sessions

- The role of emotional tone in shaping perceived responsiveness

- How users recover from errors or misunderstandings during an exchange

- The ethical dimensions of designing AI that influences behavior or emotional state

The distinction between what the AI can do and what the user believes it can do is one of the most consequential gaps in the entire field.

These areas overlap with UX design, behavioral psychology, and linguistics, but human AI interaction treats the AI itself as an active participant in the exchange, not just a passive interface layer.

What falls outside the scope

Not everything involving AI belongs in this field. Backend model training, algorithmic efficiency, and infrastructure performance sit firmly in machine learning engineering and computer science. Those disciplines answer questions about how AI systems are built; human AI interaction answers questions about how those systems behave with real people in the loop.

Automation that runs without human involvement also sits outside the field's core scope. A machine learning model that processes data in the background without any user-facing dialogue is an engineering problem, not a human AI interaction problem. The field specifically requires a human in the loop, either actively engaging or at minimum perceiving and responding to AI-generated outputs.

This distinction matters practically. If your team conflates interface design with model architecture, or conflates conversational quality with raw accuracy metrics, you end up optimizing for the wrong outcomes and missing what actually determines whether users keep coming back.

Key concepts that shape the experience

Several foundational concepts determine whether a human AI interaction feels natural and productive or disjointed and frustrating. Understanding these concepts gives you a sharper lens for both evaluating existing systems and making better design decisions from the start.

Mental models and expectation calibration

A mental model is the internal picture a user builds of how a system works, what it knows, what it can do, and where its limits are. In human AI interaction, this model forms quickly, often within the first few exchanges, and it shapes every expectation the user brings to the conversation afterward.

When the system behaves inconsistently with the user's mental model, the interaction breaks down. A user who believes the AI remembers previous sessions will feel disoriented when it responds as though it has no history with them. A user who assumes the AI only knows what they've told it will feel unsettled if it references something they didn't share. Calibrating these expectations through clear design signals is one of the most practical things you can do to prevent friction early.

The gap between what an AI actually does and what a user believes it does is where most trust failures begin.

Memory and conversational continuity

Persistent memory is what separates a relational AI experience from a repeated series of one-off exchanges. When a system retains context across sessions, users can build on previous conversations rather than restarting from zero each time. That continuity changes the nature of the interaction fundamentally: it shifts from transactional to cumulative and ongoing.

Designing for memory requires decisions about what the system retains, for how long, and how it signals that retention to the user. Systems that remember without being transparent about it create a different kind of unease than systems that forget. Both failure modes damage the experience in distinct ways, which is why memory design deserves deliberate attention rather than being treated as a backend detail.
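One way to make these retention decisions explicit is to give each kind of remembered item its own retention window and to generate a plain-language summary the system can show the user. The sketch below is hypothetical: the `MemoryStore` class, the three item kinds, and the retention durations are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical retention windows per kind of remembered item (illustrative values only).
RETENTION = {
    "preference": timedelta(days=365),
    "life_event": timedelta(days=90),
    "session_detail": timedelta(days=7),
}

@dataclass
class MemoryItem:
    kind: str
    text: str
    stored_at: datetime

@dataclass
class MemoryStore:
    items: list[MemoryItem] = field(default_factory=list)

    def remember(self, kind: str, text: str, now: datetime) -> None:
        if kind not in RETENTION:
            raise ValueError(f"unknown memory kind: {kind}")
        self.items.append(MemoryItem(kind, text, now))

    def active(self, now: datetime) -> list[MemoryItem]:
        # Keep only items that are still inside their retention window.
        return [i for i in self.items if now - i.stored_at <= RETENTION[i.kind]]

    def disclosure(self, now: datetime) -> str:
        # Plain-language summary so retention is visible to the user rather than hidden.
        active = self.active(now)
        if not active:
            return "I'm not holding on to anything from earlier conversations."
        lines = [f"- {i.text} (kept for up to {RETENTION[i.kind].days} days)" for i in active]
        return "Here's what I currently remember:\n" + "\n".join(lines)
```

For example, an item stored as `session_detail` drops out of `disclosure()` after seven days, while a `preference` stays listed. Surfacing that summary addresses both failure modes named above: the system neither forgets silently nor remembers invisibly.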

Social attribution and emotional tone

Users attribute social qualities to AI systems even when they consciously know they're talking to software. Research in cognitive science has confirmed this pattern repeatedly: people respond to AI tone, consistency, and perceived attentiveness as social signals rather than technical outputs. Warm, attentive responses increase engagement; cold or dismissive ones reduce it.

Your design choices around tone, pacing, and acknowledgment carry more psychological weight than most engineering teams anticipate. An AI that registers what a user just said, reflects it back with appropriate weight, and responds with proportional energy creates a sense of presence that users find genuinely different from a system that simply returns accurate answers without social texture.

Main research themes and open questions

The academic study of human AI interaction has expanded significantly in the past decade, but the field still carries more open questions than settled answers. Understanding where researchers are focused right now helps you identify which design decisions sit on solid ground and which ones still involve real uncertainty. The themes below represent areas where active work is happening and where the findings will shape how AI systems get built over the next several years.

Trust, transparency, and explainability

Researchers consistently identify trust as the central variable in human AI interaction, but measuring it precisely has proved difficult. Studies from institutions like MIT and Carnegie Mellon have examined how users calibrate trust based on system behavior, and the findings point to a consistent pattern: users extend trust based on perceived consistency and clarity, not raw accuracy alone. A system that explains its reasoning, even briefly, earns more sustained engagement than one that delivers correct answers with no context.

Transparency isn't just an ethical requirement; it's a functional design input that directly affects whether users keep engaging with an AI over time.

The open question here isn't whether transparency matters but how much of it users actually want at different points in an interaction. Too little leaves people uncertain; too much creates cognitive load that slows the conversation down. Finding that balance remains an active research problem.

Long-term use and behavioral change

Short-term usability studies dominate AI research, but the field increasingly recognizes that one-session observations miss the dynamics that matter most in relational AI. Researchers studying long-term use patterns have found that user behavior shifts considerably after extended engagement: people develop stronger expectations, rely more heavily on the system, and respond more intensely to failures. Understanding how these patterns evolve across weeks and months is a research priority that most labs are only beginning to address systematically.

Ethics, autonomy, and design responsibility

The ethics of AI design now occupies a central place in human AI interaction research, particularly around questions of autonomy and influence. When a system is designed to be emotionally responsive and contextually aware, it inevitably shapes user behavior in ways that require deliberate consideration. Researchers are asking how much influence a well-designed AI should exert, where the line sits between helpful responsiveness and manipulation, and who bears responsibility when a system's design leads to harm. These questions don't have clean answers yet, but they're shaping the standards that responsible designers are starting to apply.

Design rules that improve trust and collaboration

The difference between a human AI interaction that sustains itself and one that falls apart within a few sessions usually comes down to a handful of design decisions made before any user ever touches the system. These rules aren't theoretical ideals; they're practical commitments that shape how a user experiences the system on day one and across every session after that. Getting them right early prevents the kind of trust damage that no amount of feature updates can repair later.

Make the system's capabilities visible

Users build confidence faster when they understand what the AI can actually do and where it stops. If someone asks for something outside the system's scope and gets a confused or fabricated response, they lose trust immediately. Designing for capability transparency means the system acknowledges limitations directly, signals uncertainty when relevant, and avoids performing competence it doesn't have.

Honesty about limits earns more long-term trust than a confident wrong answer ever will.

This isn't about burying users in disclaimers. A single, clear signal at the right moment ("I don't have enough context to answer that well") calibrates expectations without breaking the flow of conversation. Build that language into the system's default responses and users will trust the moments when it does answer confidently.
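A minimal sketch of that default, assuming the system produces a draft answer with a confidence score between 0 and 1 and a flag for whether the request is in scope. The `Draft` type, the 0.6 threshold, and the fallback wording are hypothetical; how confidence is actually estimated depends on the model and is outside this sketch.

```python
from dataclasses import dataclass

# Hypothetical threshold below which the system states its uncertainty instead of answering.
CONFIDENCE_FLOOR = 0.6

LOW_CONTEXT_REPLY = "I don't have enough context to answer that well. Can you tell me a bit more?"
OUT_OF_SCOPE_REPLY = "That's outside what I can help with reliably, so I'd rather not guess."

@dataclass
class Draft:
    text: str
    confidence: float  # assumed to be in [0, 1]; how it is estimated is model-specific
    in_scope: bool

def finalize(draft: Draft) -> str:
    """Return the draft only when it is in scope and confident enough; otherwise say so plainly."""
    if not draft.in_scope:
        return OUT_OF_SCOPE_REPLY
    if draft.confidence < CONFIDENCE_FLOOR:
        return LOW_CONTEXT_REPLY
    return draft.text
```

Each fallback is a single sentence, which keeps the signal clear without stacking disclaimers on every answer.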

Keep behavior consistent across sessions

Inconsistency is one of the fastest ways to break user trust in an AI system. When tone, memory, or response style shifts unpredictably between sessions, users stop forming reliable expectations and start second-guessing the system. That second-guessing creates friction that compounds over time and eventually pushes people away. Your design needs to hold a consistent behavioral baseline across every interaction, regardless of when or how the user shows up.

Consistency applies to more than tone. It includes how the system handles familiar topics, how it references previous conversations, and how it responds to repeated questions. Users notice when the answer changes without explanation, and they interpret that inconsistency as a reliability problem, not a software quirk.

Give users meaningful control

User control is a trust multiplier. When people can correct the AI, update what it remembers, or redirect the conversation without friction, they feel like participants rather than passengers. That sense of agency keeps engagement higher and reduces the anxiety that some users feel about how much influence an AI system is exerting over the interaction.

Design control touchpoints at natural moments in the conversation rather than hiding them in settings menus. The easier it is for users to steer the experience, the more willingly they invest in it over time.

How to design a human AI interaction flow

Designing a human AI interaction flow means mapping how a conversation moves from a user's first input to a meaningful outcome, across one session and many. Most teams make the mistake of designing for individual responses rather than for the full arc of an interaction. The better approach starts with the shape of the entire exchange: what the user needs at the start, what the system needs to surface along the way, and how the conversation closes or carries forward into the next one.

Start with the user's goal, not the system's capability

Every interaction flow should begin with a clear picture of what the user is trying to accomplish, not a list of what the AI can technically do. When you design from capability outward, you build flows that feel like feature demonstrations. When you design from user goals inward, you build flows that feel purposeful and efficient.

Map the user's goal at three levels: what they want from this specific exchange, what they want from repeated use over time, and what they want to feel during the process. All three levels should inform how the AI responds, what it remembers, and when it asks for clarification rather than assuming.

Build in natural checkpoints

Long interactions benefit from deliberate pause points where the system confirms understanding before continuing. These checkpoints serve two functions: they reduce the cost of misunderstandings by catching them early, and they give the user a moment to redirect the conversation without having to interrupt mid-flow.

A checkpoint placed at the right moment signals that the system is tracking the conversation, not just processing the most recent input.

Design these moments as natural confirmations rather than formal prompts. When they feel like attentiveness rather than friction, users accept them without breaking stride and appreciate that the system is genuinely following along.
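One simple way to place checkpoints is a rule that confirms understanding after a fixed number of user turns, or sooner when several new requirements pile up. The sketch below is hypothetical: it assumes some upstream step extracts new requirements from each user turn, and the turn interval, requirement limit, and confirmation wording are illustrative. A production system would likely use richer signals to decide when a checkpoint is due.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class CheckpointPolicy:
    every_n_turns: int = 6          # hypothetical interval between confirmations
    max_new_requirements: int = 3   # confirm early once this many new constraints pile up
    turns_since_check: int = 0
    pending: list[str] = field(default_factory=list)

    def observe(self, new_requirements: list[str]) -> Optional[str]:
        """Record one user turn; return a confirmation line when a checkpoint is due."""
        self.turns_since_check += 1
        self.pending.extend(new_requirements)
        due = (self.turns_since_check >= self.every_n_turns
               or len(self.pending) >= self.max_new_requirements)
        if not due or not self.pending:
            # Nothing due, or nothing new to confirm: stay quiet rather than interrupt.
            return None
        summary = "; ".join(self.pending)
        self.turns_since_check = 0
        self.pending.clear()
        return f"Just to check I'm following: {summary}. Does that sound right?"
```

Phrasing the checkpoint as a short restatement of what the user said, rather than a formal prompt, is what keeps it reading as attentiveness.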

Close each session in a way that carries forward

How an interaction ends matters as much as how it begins. A session that closes with a clear summary or a natural handoff creates continuity that users notice and value. Design your closing patterns to reinforce memory: reference what was discussed, signal what the system has retained, and leave the user with something concrete rather than a conversation that simply stops.

Your closing design transforms individual sessions into chapters of a longer exchange. That cumulative quality is where sustained engagement actually lives, and it only happens when you plan for it from the start.
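A closing pattern like this can be assembled from what the session already tracked. The sketch below assumes hypothetical inputs: a list of topics discussed, the items the system chose to retain, and an optional next step. The wording is illustrative.

```python
from typing import Optional

def closing_message(topics: list[str], retained: list[str], next_step: Optional[str]) -> str:
    """Build a session close that summarizes, discloses retention, and leaves something concrete."""
    parts = []
    if topics:
        parts.append("Today we covered " + ", ".join(topics) + ".")
    if retained:
        parts.append("I'll remember " + ", ".join(retained) + " for next time.")
    else:
        # Be explicit when nothing carries forward, so the user isn't left guessing.
        parts.append("I won't carry anything from this conversation forward.")
    if next_step:
        parts.append(f"Next time, we could pick up with {next_step}.")
    return " ".join(parts)
```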

How to evaluate human AI interaction in practice

Designing a good human AI interaction system and knowing whether it's actually working are two different problems. Evaluation requires you to look beyond surface-level satisfaction scores and focus on behavioral signals that reveal how users genuinely experience the system over time, not just how they describe it after a single session.

Measure what users actually do, not just what they say

Self-reported satisfaction surveys capture a user's impression at one moment in time, but they rarely explain why engagement drops after the third week or why certain topics consistently produce abandoned conversations. Behavioral data tells you more. Track session length, return rate, and the points where users disengage or redirect the conversation. These patterns identify real friction faster than any survey question.
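As a rough illustration, two of the signals named above, session length and return rate, can be computed from a plain event log. The sketch assumes a hypothetical log of `(user_id, session_id, timestamp)` message events and defines return rate as the share of users with more than one session; both the schema and that definition are assumptions, and many teams measure return rate over a fixed time window instead.

```python
from collections import defaultdict
from datetime import datetime

def session_metrics(events: list[tuple[str, str, datetime]]) -> dict[str, float]:
    """Compute mean session length (minutes) and return rate from (user, session, time) events."""
    session_times: dict[tuple[str, str], list[datetime]] = defaultdict(list)
    for user, session, ts in events:
        session_times[(user, session)].append(ts)

    # Session length = time between a session's first and last message.
    lengths = [(max(ts) - min(ts)).total_seconds() / 60 for ts in session_times.values()]

    sessions_per_user: dict[str, int] = defaultdict(int)
    for user, _session in session_times:
        sessions_per_user[user] += 1
    returning = sum(1 for n in sessions_per_user.values() if n > 1)

    return {
        "mean_session_minutes": sum(lengths) / len(lengths) if lengths else 0.0,
        "return_rate": returning / len(sessions_per_user) if sessions_per_user else 0.0,
    }
```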

Pair behavioral data with direct observation when you can. Watch how users phrase corrections, what they repeat themselves on, and where they hesitate before responding. These micro-behaviors surface design gaps that analytics alone won't show. A user who rephrases the same question three times isn't confused by the topic; they're telling you the system isn't tracking their intent correctly.
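Repeated rephrasing can be flagged automatically as a first pass before human review. This sketch uses Python's standard `difflib.SequenceMatcher` as a crude stand-in for semantic similarity; the 0.6 threshold and the minimum run of three turns are assumptions, and an embedding-based similarity measure would likely catch paraphrases that share few characters.

```python
from difflib import SequenceMatcher

def similar(a: str, b: str, threshold: float = 0.6) -> bool:
    """Character-level similarity; a rough proxy for 'asking the same thing again'."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio() >= threshold

def rephrase_runs(user_turns: list[str], min_run: int = 3) -> list[tuple[int, int]]:
    """Return (start, end) index ranges where consecutive user turns look like the same question."""
    runs = []
    start = 0
    for i in range(1, len(user_turns) + 1):
        # A run continues while each turn resembles the one before it.
        if i < len(user_turns) and similar(user_turns[i - 1], user_turns[i]):
            continue
        if i - start >= min_run:
            runs.append((start, i - 1))
        start = i
    return runs
```

Flagged runs are candidates for the direct observation described above, not conclusions on their own.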

The most useful evaluation data often comes from the conversations that ended early, not the ones that went well.

Track quality signals across multiple sessions

Single-session metrics miss the dynamics that matter most in any AI system built for ongoing use. Users behave differently in their tenth conversation than in their first: they develop stronger expectations, rely more on continuity, and react more sharply to failures. Your evaluation framework needs to account for how the experience changes across repeated interactions, not just how it performs on first contact.

Three quality signals are worth tracking consistently across sessions:

- Context retention accuracy: Does the system reference prior exchanges correctly and at the right moments?

- Tone consistency: Does the system maintain a stable voice across different sessions and topics without unexplained shifts?

- Error recovery quality: When the system misunderstands something, how smoothly does the conversation return to a productive path?

Reviewing these signals together gives you a composite picture of interaction health over time. If context retention drops after a certain number of sessions, that's a memory design problem. If tone consistency breaks under specific topic types, that's a dialogue architecture problem. Evaluation works best when it connects specific failure patterns to the design decisions that caused them, so your team knows exactly where to focus improvement efforts.
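A small sketch of connecting per-session scores to likely design causes. It assumes each session already has the three signals scored between 0 and 1 by some hypothetical upstream process (human raters or automated checks). The 0.7 threshold and the signal-to-cause mapping are illustrative; in particular, the cause listed for error recovery is an assumption, not something stated above.

```python
from dataclasses import dataclass
from typing import Optional

# Which design area a drop in each signal most likely points to (illustrative mapping).
LIKELY_CAUSE = {
    "context_retention": "memory design",
    "tone_consistency": "dialogue architecture",
    "error_recovery": "repair and clarification flows",
}

@dataclass
class SessionScores:
    context_retention: float
    tone_consistency: float
    error_recovery: float

def first_drop(history: list[SessionScores], signal: str, threshold: float = 0.7) -> Optional[int]:
    """Return the 1-based session number where a signal first falls below threshold, if any."""
    for n, scores in enumerate(history, start=1):
        if getattr(scores, signal) < threshold:
            return n
    return None

def diagnose(history: list[SessionScores], threshold: float = 0.7) -> dict[str, str]:
    """Map each signal that dropped to the session where it happened and its likely design area."""
    report = {}
    for signal, cause in LIKELY_CAUSE.items():
        session = first_drop(history, signal, threshold)
        if session is not None:
            report[signal] = f"below {threshold} from session {session}; check {cause}"
    return report
```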

Common failures and how to avoid them

Most human AI interaction problems trace back to a small number of recurring design mistakes that show up across different platforms and use cases. Knowing what these failures look like and why they happen gives you a real advantage before you start building, because most of them are preventable if you catch them at the design stage rather than after users have already walked away.

Overclaiming what the system can do

Overstating capability is one of the most damaging mistakes you can make when deploying an AI system. When users discover the gap between what the system implied and what it actually delivers, the trust damage is immediate and difficult to recover from. Marketing copy, onboarding flows, and default responses all contribute to the expectation the user walks in with, so every touchpoint needs to reflect what the system genuinely does rather than what sounds most impressive.

The fastest way to lose a user permanently is to promise an experience you cannot deliver in the first three sessions.

Fix this by auditing every user-facing message for claims the system cannot consistently back up. Replace capability language with behavior language: instead of saying the AI understands you, show it doing the specific thing it actually does well.
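Part of that audit can be automated with a simple phrase scan that flags copy for human review. The phrase list below is hypothetical and deliberately short; it only catches wording a team has already decided counts as overclaiming, so it supplements a manual review rather than replacing it.

```python
import re

# Hypothetical list of capability claims a team has decided not to make without evidence.
OVERCLAIM_PATTERNS = [
    r"\bunderstands? you\b",
    r"\bknows? (?:you|everything)\b",
    r"\bnever forgets?\b",
    r"\balways (?:right|accurate)\b",
]

def audit_copy(messages: dict[str, str]) -> list[tuple[str, str]]:
    """Return (message_id, matched_phrase) pairs for every overclaim found in user-facing copy."""
    findings = []
    for message_id, text in messages.items():
        for pattern in OVERCLAIM_PATTERNS:
            for match in re.finditer(pattern, text, flags=re.IGNORECASE):
                findings.append((message_id, match.group(0)))
    return findings
```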

Letting context drift go unaddressed

Context drift happens when a system's responses gradually lose alignment with what the user has previously shared, either because memory handling is inconsistent or because the dialogue architecture doesn't weight earlier context properly. Users rarely name this problem directly, but they feel it as a vague sense that the AI isn't really tracking them. That feeling compounds across sessions and drives disengagement without producing a clear complaint your team can act on.

The fix requires both technical and design attention. On the design side, build explicit signals into the conversation that reference previous context at natural moments. On the technical side, establish clear rules for how long different types of information persist and how the system handles conflicting inputs across sessions.
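One simple technical rule for conflicting inputs is that the most recent explicit statement wins, while the superseded value is kept so the system can acknowledge the change instead of silently switching. This is a sketch under that assumption; the `FactLedger` name, its keys, and the acknowledgment wording are hypothetical, and some products may prefer to ask the user before overwriting anything.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional

@dataclass
class Fact:
    value: str
    stated_at: datetime
    session_id: str

@dataclass
class FactLedger:
    current: dict[str, Fact] = field(default_factory=dict)
    superseded: dict[str, list[Fact]] = field(default_factory=dict)

    def record(self, key: str, value: str, stated_at: datetime, session_id: str) -> Optional[str]:
        """Store a fact; if it conflicts with an older one, keep the newer and return a note."""
        new = Fact(value, stated_at, session_id)
        old = self.current.get(key)
        if old is None or old.value == value:
            self.current[key] = new
            return None
        if stated_at < old.stated_at:
            # Out-of-order input (e.g. a delayed sync): keep the newer fact, archive this one.
            self.superseded.setdefault(key, []).append(new)
            return None
        self.superseded.setdefault(key, []).append(old)
        self.current[key] = new
        # A note the dialogue layer can surface, so the change is acknowledged, not silent.
        return f"Earlier you mentioned {key} was {old.value}; I'll go with {value} from now on."
```

Keeping the superseded history also gives the design side something concrete to reference when it signals previous context at natural moments.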

Building for the first session and nothing else

First-session design produces interactions that feel polished during onboarding and hollow by week two. Teams spend disproportionate effort on the initial user experience and underinvest in how the relationship develops across repeated use. Users who return expecting a conversation that builds on history and finds none will stop returning. Design your interaction patterns with repeated use as the baseline condition, not the edge case, and your retention numbers will reflect that shift quickly.

Conclusion

Human AI interaction is a discipline that rewards precision. The teams and builders who invest in trust, memory, consistency, and user control ship systems that sustain engagement over time. The ones who skip those foundations build products that impress on first contact and lose people quickly after.

Every principle covered in this article applies whether you're designing a productivity tool, a support system, or a relational AI platform. Transparent capability and deliberate memory design are not advanced features you layer on after launch. They are the baseline conditions for any AI interaction worth building, and honest evaluation is how you confirm they're actually working.

Experiencing what these principles look like in practice changes how you think about them. SAM was built around persistent memory and emotional responsiveness, with responsible design built in from the start rather than added on later. Explore what that looks like on the SAM platform and see the difference a well-designed AI companion creates in real, ongoing use.