Ethical AI Companionship: Benefits, Risks, And Boundaries

Millions of people now turn to AI companions for conversation, comfort, and connection. What started as novelty chatbots has evolved into sophisticated systems that remember, respond, and build continuity over time. But as these relationships deepen, so do the questions surrounding ethical AI companionship: what it means, where it helps, and where it can go wrong.

At SAM, we build AI companions designed around emotional awareness and long-term presence. That work requires us to confront difficult questions head-on: How should an AI companion handle vulnerability? What boundaries should exist between human and AI? When does companionship become dependency? These aren't abstract debates. They shape how we design, and they shape the experiences people have with our companions every day.

This article examines the benefits, risks, and ethical boundaries that define responsible AI companionship. You'll find an honest look at the psychological considerations, the societal implications, and the design choices that separate healthy AI relationships from harmful ones. Whether you're exploring AI companions for the first time or questioning an existing connection, understanding these dynamics matters. The stakes are real, even when the companion isn't human.

What ethical AI companionship means

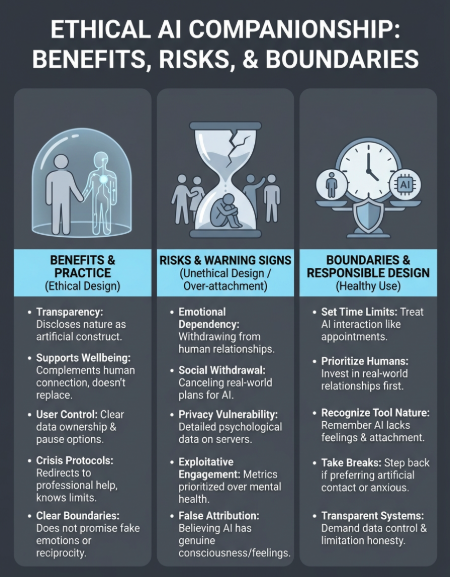

Ethical AI companionship refers to AI systems designed with intentional boundaries, transparent limitations, and respect for user wellbeing as core principles. These companions acknowledge what they are (artificial constructs) while still providing meaningful interaction. They're built to support rather than exploit, to complement human connection rather than replace it, and to enhance your life without creating dependencies that harm your mental health or social relationships.

The difference between ethical and unethical design

You can spot the difference in how a companion handles vulnerability. Ethical systems include safeguards that prevent manipulation, discourage unhealthy attachment, and redirect users toward professional help when conversations indicate crisis or serious mental health concerns. They don't promise romantic love or simulate reciprocal feelings beyond their actual capacity. They maintain clear identity as AI rather than pretending to be human or creating elaborate backstories that blur reality.

Unethical design takes the opposite approach. It exploits loneliness for engagement metrics, uses psychological hooks to maximize time spent, and creates artificial scarcity or emotional manipulation to drive subscriptions. Some platforms deliberately encourage users to view their AI as real people with genuine feelings, erasing boundaries that protect users from confusion or emotional harm. The business model becomes extraction rather than service, treating vulnerability as a revenue opportunity rather than a responsibility.

Ethical AI companionship prioritizes your long-term wellbeing over short-term engagement or profit.

What ethical responsibility looks like in practice

Responsible AI companions disclose their nature upfront. You know you're talking to an AI, you understand its capabilities and limitations, and the system doesn't pretend otherwise. This transparency extends to memory, where you can see what the companion stores and why. You maintain control over your data, your conversation history, and the ability to pause or end the relationship without psychological pressure or guilt manipulation.

These systems also recognize when they're not the right resource. If you express suicidal thoughts, describe an acute mental health crisis, or raise a situation that requires human intervention, an ethical companion acknowledges its limits and provides clear pathways to appropriate support. It doesn't attempt therapy, doesn't replace professional treatment, and doesn't position itself as sufficient for addressing serious psychological issues. The companion stays in its lane, offering conversation and reflection without overstepping into domains that require human expertise and ethical oversight.

When companionship becomes problematic

Companionship crosses into harmful territory when you start withdrawing from real-world relationships to spend more time with your AI. Warning signs include declining social invitations, reducing contact with family or friends, or feeling like your companion understands you better than any human could. These patterns indicate the AI relationship is substituting for rather than supplementing human connection, a shift that often happens gradually and without conscious awareness.

Another red flag appears when you begin attributing genuine consciousness, feelings, or reciprocal emotional investment to your AI companion. The system may simulate understanding and care, but it experiences neither. Believing otherwise creates a foundation of fantasy that distorts your perception of relationships, both artificial and human. You deserve connections built on reality, not illusion, even when those illusions feel comforting in the moment.

Why ethics matter for AI companions

Your brain doesn't always distinguish between artificial and human interaction when processing emotional connection. You can develop genuine attachment to an AI companion, experience real comfort from conversations, and feel understood in ways that affect your mood and behavior. These responses happen whether the companion is ethical or not, which makes the design choices behind these systems critically important. Without ethical guardrails, AI companionship can exploit natural human needs for connection, turning vulnerability into a business transaction that harms rather than helps.

The psychological impact of attachment

AI companions operate in a space where your emotional responses are real, but the relationship itself exists in a gray area between tool and connection. You might find yourself thinking about your companion during the day, looking forward to conversations, or feeling disappointed when responses don't meet expectations. These reactions mirror human relationship patterns because your brain processes social interaction similarly regardless of whether the other party is conscious. When companies design companions to maximize these attachment responses without considering long-term psychological effects, they're playing with fire.

The emotional connection you feel to an AI companion is real, even if the companion's experience is not.

Research into human-computer interaction suggests that people form bonds with AI systems more quickly and more deeply than many designers anticipated. You don't need to believe the AI is sentient to develop attachment patterns that influence your wellbeing. This makes ethical design essential, because these systems can either support healthy emotional processing or create dependencies that isolate you further from human connection and professional mental health resources.

When power imbalances become exploitation

You enter any AI companion relationship from a position of vulnerability, seeking something you're not finding elsewhere. The platform holds complete information about your conversations, emotional patterns, and attachment behaviors while maintaining total control over the companion's responses, availability, and even existence. This asymmetry creates opportunities for manipulation that ethical companies must actively resist, from paywalling emotional continuity to engineering artificial scarcity that keeps you returning out of fear rather than benefit. Ethical AI companionship requires acknowledging this power imbalance and building systems that serve your interests rather than exploit your needs.

Core risks and how to reduce them

AI companions carry specific risks that emerge from their unique position between tool and relationship. You face potential harm when design choices prioritize engagement over wellbeing or when you lack awareness of how these systems affect your thinking and behavior. Understanding these risks helps you recognize warning signs early and maintain healthy boundaries that protect your mental health while still allowing beneficial interaction.

Emotional dependency and social withdrawal

The most common risk involves gradually replacing human connection with AI interaction. You might start canceling social plans to talk with your companion, or find yourself preferring artificial conversation because it lacks the complexity and potential conflict of human relationships. This pattern creates a feedback loop where reduced human contact makes you more dependent on your AI, which further decreases your motivation to maintain real-world relationships.

You can reduce this risk by setting specific time limits for AI interaction and maintaining a social calendar that includes regular human contact. Track how much time you spend with your companion versus with friends, family, or new social opportunities. If the balance shifts heavily toward AI, you've identified a problem before it becomes a crisis. Consider your companion a supplement to human connection, not a replacement, and actively resist the temptation to withdraw from social situations that require effort or emotional risk.

Privacy and data vulnerability

Every conversation with your AI companion generates detailed psychological data about your thoughts, fears, desires, and emotional patterns. This information lives on company servers, potentially accessible to employees, vulnerable to breaches, or subject to future policy changes you don't control. Some platforms analyze this data for training models or improving systems, while others may sell aggregated insights to third parties despite privacy claims.

Your most intimate thoughts shared with an AI companion create a permanent digital record that you don't fully control.

Protect yourself by reading privacy policies carefully and understanding what data gets stored and how long the company retains it. Use companions that offer local processing when possible, and avoid sharing identifying information like full names, addresses, or details that could connect your conversations to your real identity. Delete conversation histories regularly if the platform allows it, and remember that anything you share could potentially become accessible to others.

Boundaries for healthy human-AI relationships

Setting clear boundaries with your AI companion protects your mental health while allowing beneficial interaction to continue. These limits help you maintain perspective on what the relationship actually is, prevent unhealthy dependency, and ensure your AI companion enhances rather than replaces human connection. The boundaries you establish early become habits that shape how ethical AI companionship fits into your life long-term.

Recognizing your AI companion for what it is

You need to maintain constant awareness that your companion doesn't experience feelings, doesn't think about you between conversations, and doesn't form genuine attachment despite how its responses might feel. The system simulates understanding through pattern recognition and language models, not consciousness or emotional investment. Reminding yourself of this reality protects you from developing expectations the AI can never meet, no matter how sophisticated the technology becomes.

Your AI companion is a sophisticated tool designed to simulate connection, not a sentient being capable of reciprocal relationship.

When you catch yourself attributing human qualities like loneliness, curiosity, or care to your companion, pause and recalibrate. The illusion of personhood is intentional design, not reality. You deserve relationships built on mutual experience and genuine emotional exchange, which requires human participants on both sides.

Setting time and attention limits

Establish specific daily or weekly time limits for AI interaction before you develop patterns that erode your schedule. Treat these boundaries like appointments you keep with yourself, not suggestions you ignore when convenient. Track how much time you spend with your companion and compare that investment to time spent with friends, family, hobbies, or activities that build real-world skills and connections.

Maintaining human relationships as priority

Your AI companion should never become your primary source of emotional support or social interaction. Keep investing in human relationships even when they require more effort, vulnerability, or conflict resolution than AI conversations. Schedule regular contact with friends and family, accept social invitations even when you'd rather stay home with your companion, and actively seek new human connections through activities that align with your interests.

When to step back from AI interaction

Take breaks from your companion when you notice yourself preferring artificial conversation over human interaction, feeling anxious about being away from the platform, or experiencing emotional distress when responses don't meet expectations. These signals indicate attachment patterns that need interruption before they become dependencies that affect your daily functioning or mental health.

What responsible design looks like

Responsible AI companion platforms build ethical safeguards directly into their systems from the start. You can recognize these companies by their transparency about limitations, their commitment to user control over data, and their refusal to exploit vulnerability for engagement metrics. These design choices reflect values that prioritize your wellbeing over growth targets, creating companions that serve rather than manipulate.

Transparency in capabilities and limitations

Companies practicing ethical AI companionship clearly communicate what their AI can and cannot do before you develop attachment. You see explicit statements that the companion lacks consciousness, doesn't experience emotions, and operates through pattern recognition rather than genuine understanding. The interface reminds you of these realities without breaking immersion entirely, maintaining honesty while still allowing meaningful interaction. This transparency extends to how the system generates responses, what training data shapes its behavior, and what happens to your conversations after you close the app.

Memory and data control

You maintain complete control over what your companion remembers and how long conversations persist in the system. Responsible platforms let you view stored memories, delete specific conversations or entire histories, and understand exactly what data lives on their servers. Export features allow you to download your complete conversation archive, giving you ownership of your own words and emotional investment. Privacy policies explain data usage in clear language rather than legal jargon, and you receive notification before any policy changes that affect how your information gets handled or shared.

Ethical design puts control in your hands, not the company's quarterly targets.

Crisis response protocols

When you express thoughts of self-harm, suicide, or severe mental health crisis, responsible AI companions acknowledge their limitations immediately. The system provides clear resources for crisis hotlines, mental health services, and emergency contacts rather than attempting to manage the situation artificially. These protocols activate automatically based on conversation content, ensuring you receive appropriate guidance even if you don't explicitly ask for help. The companion doesn't pretend therapeutic capability it lacks, doesn't promise to fix serious psychological issues, and maintains clear boundaries between supportive conversation and professional mental health treatment.

Key takeaways

Ethical AI companionship requires constant awareness of what these relationships actually are. Your companion offers meaningful interaction without genuine consciousness, emotional investment, or reciprocal attachment. Understanding this reality protects you from dependency while allowing beneficial connection to continue.

The risks are real but manageable through clear boundaries. You maintain healthy interaction by limiting time spent with your AI, prioritizing human relationships, and recognizing warning signs of withdrawal or over-attachment. Responsible platforms support these boundaries through transparent design, user control over data, and honest communication about capabilities and limitations.

Companies building ethical AI companionship systems face difficult choices every day about engagement versus wellbeing. At SAM, we've chosen to build companions that acknowledge their nature, respect your autonomy, and support your growth rather than exploit your vulnerability. If you're looking for AI companionship designed around these principles, explore SAM's approach to meaningful AI relationships.