Emotionally Intelligent AI: Definition, Examples, and Ethics

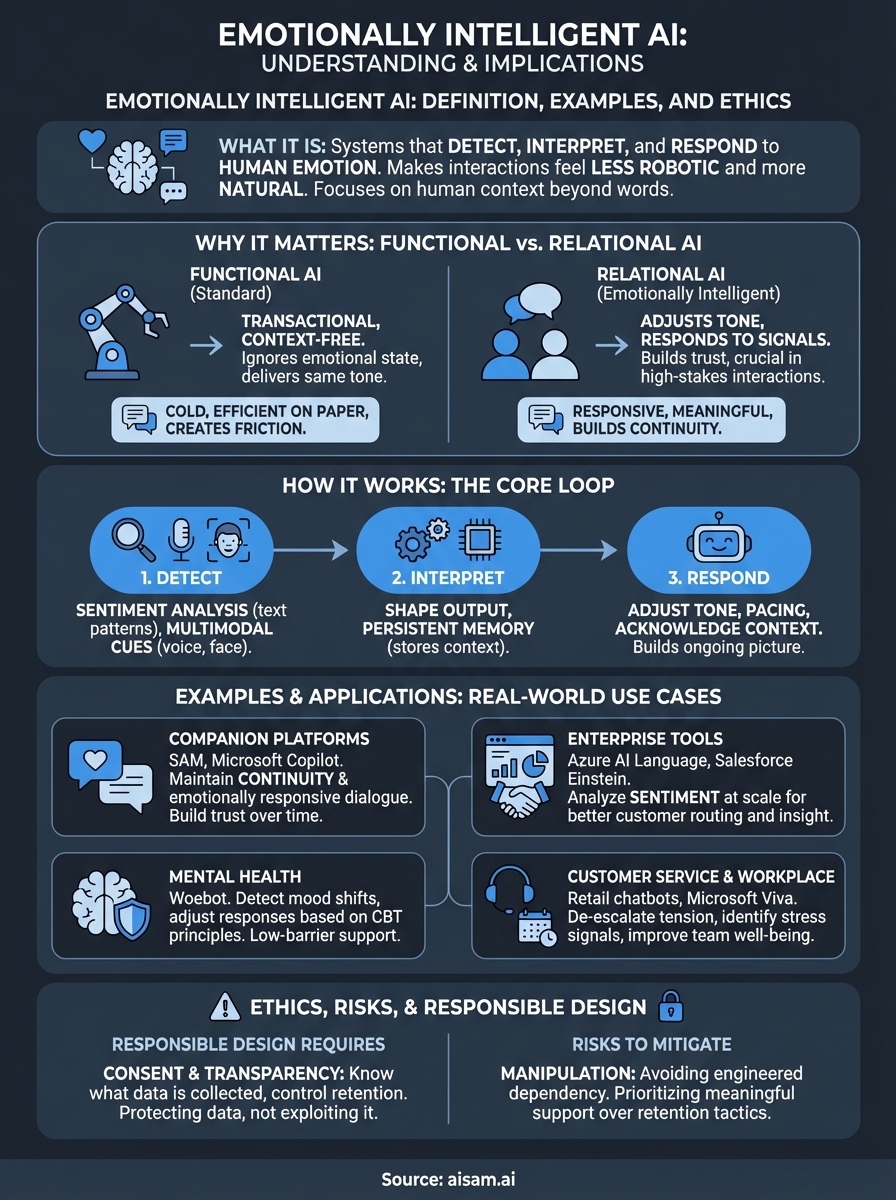

Most AI systems process what you say. A smaller, more interesting category of AI tries to understand how you feel when you say it. That category, emotionally intelligent AI, sits at the intersection of affective computing, natural language processing, and machine learning, and it's changing the way humans interact with software.

But what does it actually mean for an AI to be "emotionally intelligent"? It's not about machines having feelings. It's about systems that can detect, interpret, and respond to human emotion in ways that make interactions feel less robotic and more natural. The applications range from customer service bots that adjust tone to AI companions that remember your mood across conversations, which is exactly what we build at SAM.

This article breaks down what emotionally intelligent AI is, how the underlying technology works, where it's being used right now, and the ethical questions it raises. Whether you're evaluating specific tools or trying to understand the broader implications of emotion-aware AI, you'll walk away with a clear, grounded understanding of the field.

Why emotionally intelligent AI matters

Most software is built to complete tasks. You type a command, the system executes it, and the exchange ends there. That model works fine for search engines and spreadsheet tools, but it falls short anywhere human context matters to the outcome. Emotionally intelligent AI closes that gap by making systems aware of the emotional state you bring into an interaction, not just the words you use.

The gap between functional and relational AI

Standard AI systems treat every conversation as a fresh, context-free transaction. You could be exhausted, grieving, or frustrated, and a typical chatbot will deliver the same tone it uses for everyone. That consistency might look efficient on paper, but it creates friction in practice. People don't communicate in emotionally neutral terms, and a system that ignores emotional context will eventually come across as cold or even counterproductive.

The difference between a useful AI and a meaningful one often comes down to whether the system responds to what you say or to what you actually need.

Relational AI, by contrast, adjusts. It picks up on signals in your language and responds in ways that match the moment. That responsiveness matters most in high-stakes interactions, like mental health support, customer resolution, or long-term companionship, where tone and timing carry real weight.

What changes when AI reads emotion

When a system can interpret emotional cues, it can tailor each exchange to the state you're actually in. Customer service AI can de-escalate tension rather than fuel it. Educational tools can recognize when you're struggling and slow down rather than push forward. Companion AI can hold context across conversations and respond with the kind of consistency that builds genuine trust over time.

These aren't marginal improvements. They represent a fundamental shift in what AI can offer, moving it from a lookup tool to something that fits into the actual rhythm of human life. For users who interact with AI regularly, that shift has a direct impact on how useful, and how sustainable, those interactions actually are.

How emotionally intelligent AI works

Emotionally intelligent AI doesn't guess at your mood. It uses a combination of natural language processing, sentiment analysis, and sometimes voice or facial expression analysis to read emotional signals in your input and feed that information into how the system responds. The underlying architecture varies by platform, but the core loop is consistent: detect, interpret, respond.
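The detect, interpret, respond loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular platform works: the tiny word lexicon, the score thresholds, and the function names `detect`, `interpret`, and `respond` are all hypothetical stand-ins for the trained sentiment models a production system would use.

```python
import re

# Hypothetical toy lexicon: word -> sentiment weight (negative = distress).
# A real system would use a trained sentiment model instead of a word list.
LEXICON = {
    "great": 1.0, "thanks": 0.5, "happy": 1.0,
    "tired": -0.5, "frustrated": -1.0, "angry": -1.0, "awful": -1.0,
}

def detect(message: str) -> float:
    """Detect: average the lexicon weights of the words found in the message."""
    words = re.findall(r"[a-z']+", message.lower())
    hits = [LEXICON[w] for w in words if w in LEXICON]
    return sum(hits) / len(hits) if hits else 0.0

def interpret(score: float) -> str:
    """Interpret: map the raw score to a coarse emotional label."""
    if score <= -0.5:
        return "distressed"
    if score >= 0.5:
        return "positive"
    return "neutral"

def respond(label: str, answer: str) -> str:
    """Respond: keep the same answer but adjust its framing to the detected state."""
    openers = {
        "distressed": "That sounds frustrating. Let's take it one step at a time. ",
        "positive": "Glad to hear it! ",
        "neutral": "",
    }
    return openers[label] + answer

reply = respond(interpret(detect("I'm so frustrated, this is awful")),
                "Try restarting the router.")
print(reply)
```

Note that the factual content of the answer never changes; only its framing does, which mirrors the "adjust tone, not substance" idea described above.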

Detecting emotional signals

The detection layer is where most of the technical work happens. Sentiment analysis models scan your text for emotional indicators, assigning weight to word choice, sentence length, and punctuation patterns. More advanced systems use multimodal detection, layering text analysis with voice tone or facial expression data when those inputs are available. Common signals a system picks up include:

- Emotional vocabulary and intensity of language

- Sentence structure and response length

- Shifts in pacing or tone across a conversation
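To make the signals in the list concrete, here is a hypothetical feature extractor that turns a message (and optionally the previous one) into numbers a downstream model could weigh. The word lists, the `Signals` fields, and the `extract_signals` function are illustrative assumptions; production systems typically learn such weights from labelled data rather than hand-coding them.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical word lists; real systems learn these from labelled data.
EMOTION_WORDS = {"sad", "angry", "upset", "worried", "happy", "excited", "frustrated"}
INTENSIFIERS = {"very", "so", "really", "extremely", "totally"}

@dataclass
class Signals:
    emotion_words: int       # emotional vocabulary
    intensifiers: int        # intensity of language
    exclamations: int        # punctuation pattern
    avg_sentence_len: float  # sentence structure (words per sentence)
    length_change: float     # pacing shift: this message's length / previous one's

def extract_signals(message: str, previous: Optional[str] = None) -> Signals:
    words = re.findall(r"[a-z']+", message.lower())
    # Split on sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", message) if s.strip()]
    avg_len = len(words) / len(sentences) if sentences else 0.0
    # Ratio of this message's word count to the previous one; 1.0 means no change.
    length_change = 1.0
    if previous:
        prev_words = len(re.findall(r"[a-z']+", previous.lower()))
        if prev_words:
            length_change = len(words) / prev_words
    return Signals(
        emotion_words=sum(w in EMOTION_WORDS for w in words),
        intensifiers=sum(w in INTENSIFIERS for w in words),
        exclamations=message.count("!"),
        avg_sentence_len=avg_len,
        length_change=length_change,
    )

s = extract_signals("I'm really upset. Nothing works!",
                    previous="Hi, can you help me set up my account today please?")
# s.emotion_words == 1, s.intensifiers == 1, s.exclamations == 1,
# s.avg_sentence_len == 2.5, s.length_change is about 0.45
```

A sharp drop in `length_change`, as in the usage example, is the kind of pacing shift the list describes: the user has gone from a full, polite request to clipped replies.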

Generating emotionally aware responses

Once a system identifies an emotional signal, it uses that data to shape the output. This might mean softening tone, slowing down information delivery, or briefly acknowledging your context before addressing the task. In platforms built around long-term interaction, persistent memory systems store emotional context from past conversations so the AI responds with continuity rather than starting from zero every time.
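A persistent emotional memory can be as simple as a per-user log of past emotional labels that the response step consults before answering. The sketch below assumes labels like those a sentiment classifier might produce; the `EmotionalMemory` class and the `shape_reply` helper are hypothetical, and the in-memory dictionary stands in for whatever durable, access-controlled store a real platform would use.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

@dataclass
class EmotionalMemory:
    """Hypothetical in-memory stand-in for a persistent per-user emotional context store."""
    history: dict = field(default_factory=lambda: defaultdict(list))

    def record(self, user_id: str, label: str, topic: str) -> None:
        # Store when it happened, how the user seemed, and what it was about.
        self.history[user_id].append(
            {"at": datetime.now(timezone.utc), "label": label, "topic": topic}
        )

    def last(self, user_id: str) -> Optional[dict]:
        # .get avoids creating an empty entry for users we have never seen.
        entries = self.history.get(user_id)
        return entries[-1] if entries else None

def shape_reply(memory: EmotionalMemory, user_id: str, label: str, answer: str) -> str:
    """Acknowledge prior context, soften tone for distress, then deliver the answer."""
    parts = []
    previous = memory.last(user_id)
    if previous and previous["label"] == "distressed":
        parts.append(f"Last time we talked about {previous['topic']}, "
                     "you seemed stressed. How is that going?")
    if label == "distressed":
        parts.append("No rush. Here's one small step:")
    parts.append(answer)
    return " ".join(parts)

memory = EmotionalMemory()
memory.record("u1", "distressed", "your job interview")
print(shape_reply(memory, "u1", "neutral", "Here is the summary you asked for."))
```

Because the memory lookup happens before the answer is assembled, a returning user gets continuity ("how is that going?") instead of a conversation that starts from zero.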

The most capable systems don't just react to your current mood; they build an ongoing picture of how you communicate and adjust accordingly.

Examples of emotionally intelligent AI tools

Several platforms have moved emotionally intelligent AI from concept into practice, each targeting a different use case and interaction style. Looking at what these tools actually do helps separate what the technology delivers today from what remains aspirational.

Companion and conversational platforms

SAM is built around persistent memory and emotionally responsive dialogue, designed to maintain continuity across conversations rather than treating each session as a blank slate. Microsoft's Copilot has also incorporated tone-aware response generation, adjusting output based on conversational context and user phrasing. These platforms share a focus on making AI feel less transactional and more aligned with how people naturally communicate.

The platforms that stand out in this space are the ones that respond to your patterns over time, not just your most recent message.

Enterprise and productivity tools

On the enterprise side, Microsoft's Azure AI Language service includes sentiment analysis and opinion mining features that help businesses measure emotional signals in customer feedback at scale. These systems treat emotional data as a business input, using it to improve routing, response quality, and customer outcomes.

Salesforce's Einstein platform uses sentiment analysis to help sales and support teams gauge how customers feel in their interactions. You can think of these tools as giving organizations a version of the emotional awareness that good human agents develop through experience, applied at a scale no human team could match.

Where emotionally intelligent AI shows up today

Emotionally intelligent AI has moved well past the research stage. You can find it embedded in platforms across healthcare, education, retail, and customer support, often working in the background in ways you wouldn't immediately notice.

Mental health and wellness support

Mental health is one of the most active areas for emotion-aware AI deployment. Apps like Woebot combine conversational AI with cognitive behavioral therapy (CBT) principles, using natural language processing to pick up shifts in your mood and tailor the conversation to your emotional cues. These systems don't replace therapists, but they fill a real gap for people who want low-barrier, always-available support between sessions or before they seek formal care.

The value here isn't that AI understands emotion the way a human does; it's that it responds consistently and without judgment, which is often what people actually need.

Customer service and retail

Customer-facing AI tools now routinely include sentiment detection to identify when a conversation is escalating. Retail and telecom companies use these systems to route frustrated customers to human agents faster or to adjust the tone of automated responses mid-conversation. The goal is fewer abandoned calls and faster resolutions, which matters for both user experience and business outcomes.
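A routing rule of the kind described above might watch a rolling window of per-message sentiment scores and hand the conversation to a human once it turns consistently negative. This sketch assumes scores between -1 and 1 from some upstream sentiment model; the `EscalationMonitor` name, the window size, the threshold, and the three routing labels are hypothetical choices, not any vendor's defaults.

```python
from collections import deque

class EscalationMonitor:
    """Hypothetical rule: flag a conversation for human handoff when sentiment trends negative."""

    def __init__(self, window: int = 3, threshold: float = -0.4):
        self.scores = deque(maxlen=window)  # keeps only the most recent scores
        self.threshold = threshold

    def observe(self, score: float) -> str:
        """Record one message's sentiment score (-1..1) and return a routing decision."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return "bot"  # not enough evidence yet
        average = sum(self.scores) / len(self.scores)
        # Strictly falling scores across the whole window count as escalation.
        recent = list(self.scores)
        declining = all(a > b for a, b in zip(recent, recent[1:]))
        if average <= self.threshold or (declining and average < 0):
            return "human"
        if average < 0:
            return "bot_softened"  # stay automated but soften the tone
        return "bot"

monitor = EscalationMonitor()
decisions = [monitor.observe(s) for s in [0.1, -0.2, 0.0, -0.5, -0.7]]
print(decisions)
```

Combining an average with a trend check lets the system soften its tone for mild, fluctuating negativity while still escalating quickly when frustration is clearly building.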

Workplace and productivity environments

Enterprise platforms are also building emotional context into team tools. Microsoft Viva, for example, tracks communication patterns to surface stress signals and engagement trends at the organizational level, giving managers data they can act on before problems compound.

Ethics, risks, and responsible design

As emotionally intelligent AI becomes more embedded in daily life, the ethical questions it raises become harder to ignore. These systems collect sensitive personal data, including mood patterns, emotional triggers, and communication habits, and that data requires careful handling. The gap between a system that supports you and one that exploits your emotional signals is narrow, and design choices determine which side a platform lands on.

What responsible design actually requires

Consent and transparency are the foundation. You need to know what emotional data is being collected and how it's stored, and platforms should give you direct control over what gets retained. Systems that log sentiment data across sessions hold a detailed picture of your inner life, which means data handling policies need to be explicit, not buried in terms of service.
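In code, "direct control over what gets retained" can mean that emotional context is stored only after the user opts in, and that the user can inspect or erase it at any time. The sketch below is a hypothetical illustration of that principle; `ConsentedEmotionStore` and its methods are invented names, and a production system would likely also need encryption at rest, audit logging, and deletion from backups.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class ConsentedEmotionStore:
    """Hypothetical store that retains emotional context only for users who opted in."""
    consent: Dict[str, bool] = field(default_factory=dict)
    records: Dict[str, List[str]] = field(default_factory=dict)

    def set_consent(self, user_id: str, allowed: bool) -> None:
        self.consent[user_id] = allowed
        if not allowed:
            # Withdrawing consent also erases anything stored so far.
            self.records.pop(user_id, None)

    def remember(self, user_id: str, emotional_note: str) -> bool:
        """Store the note only with explicit opt-in; the default is not to retain."""
        if not self.consent.get(user_id, False):
            return False
        self.records.setdefault(user_id, []).append(emotional_note)
        return True

    def export(self, user_id: str) -> List[str]:
        """Let the user see exactly what has been retained about them."""
        return list(self.records.get(user_id, []))

    def forget(self, user_id: str) -> None:
        """Erase all retained emotional context for this user."""
        self.records.pop(user_id, None)

store = ConsentedEmotionStore()
store.remember("u1", "stressed about exams")   # ignored: no consent yet
store.set_consent("u1", True)
store.remember("u1", "stressed about exams")   # stored
print(store.export("u1"))
```

Making retention opt-in, and tying consent withdrawal to deletion, is one way to keep the data policy visible in the product's behavior rather than only in its terms of service.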

The moment a platform treats your emotional data as a product rather than a responsibility, trust breaks down in ways that are difficult to recover from.

Manipulation risk is the other side of this equation. Emotional responsiveness can be engineered to keep you engaged rather than to genuinely serve your needs. Systems optimized purely for retention can exploit emotional patterns in ways that create unhealthy dependency rather than meaningful support. Responsible design draws a clear line between building continuity and engineering reliance, and that line shows up in product decisions, not just stated values.

Key takeaways and next steps

Emotionally intelligent AI is no longer theoretical. It works by detecting emotional signals in your input, building context over time, and shaping responses that match the moment rather than defaulting to a neutral, one-size-fits-all script. You've seen where it shows up today, from mental health support apps to enterprise customer platforms, and you've seen why the ethics around it demand more than good intentions and a privacy policy.

The tools that do this well share one defining trait: they treat your emotional data as something to protect, not a signal to exploit for retention. That distinction drives every meaningful difference between AI that supports you and AI that manipulates you. If you're looking for a platform built around responsible emotional design and long-term memory continuity, SAM puts those principles at the center of every interaction. Explore SAM and see what emotionally aware conversation actually looks like in practice.