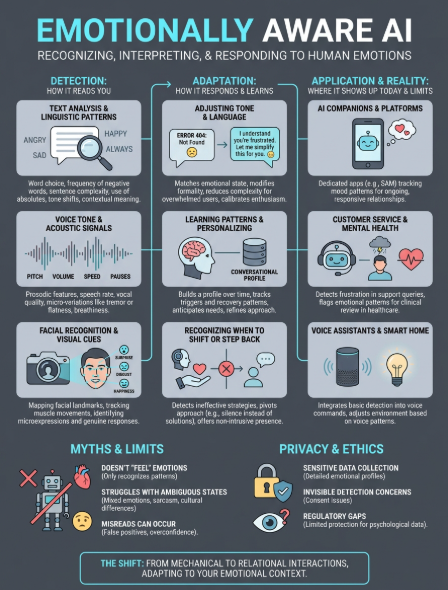

Emotionally Aware AI: What It Is and How It Responds To You

Most AI can process your words. Fewer can sense what's behind them. Emotionally aware AI refers to systems designed to recognize, interpret, and respond to human emotions, whether through text, voice, facial expressions, or behavioral patterns. It's the difference between an AI that answers your question and one that notices you seem tired today.

This technology sits at the intersection of artificial intelligence and emotional intelligence, aiming to make human-computer interaction feel less mechanical. For anyone who's ever wished an AI could actually understand how they're feeling, not just what they're saying, this is where that possibility begins.

At SAM, we build AI companions around this principle: conversation that recognizes emotional context and responds with genuine awareness. This article breaks down what emotionally aware AI actually is, how it detects and interprets emotions, where it's being used, and what separates meaningful implementation from surface-level mimicry.

Why emotionally aware AI matters in real life

You've probably experienced the disconnect: explaining your frustration to a chatbot that treats every response like a technical problem, or venting to an AI assistant that replies with the same cheerful tone no matter what you say. Traditional AI processes language efficiently, but it misses the emotional layer that shapes how humans actually communicate. That gap is why emotionally aware AI represents a fundamental shift in how technology can support us.

It bridges the gap between efficiency and understanding

Most AI optimizes for task completion. It answers questions, processes requests, and moves on. Emotionally aware AI adds a second dimension: recognizing that how you feel matters as much as what you need. When you message an AI companion after a difficult day, emotional awareness lets it notice your tone, adjust its approach, and respond with the kind of presence you'd expect from someone who actually understands you're struggling.

This matters because human communication is rarely just transactional. You don't just exchange information; you signal emotional states through word choice, sentence structure, pacing, and context. An AI that recognizes these signals can meet you where you are instead of forcing you to translate your feelings into neutral, processable language.

When AI can sense your emotional state, you stop performing for the algorithm and start having actual conversations.

It surfaces what words alone can't capture

Your words might say "I'm fine," but your tone, timing, and word choice often tell a different story. Emotionally aware AI picks up on these discrepancies. It notices when your messages get shorter, when you stop asking questions, or when your language shifts from engaged to flat. These patterns carry emotional information that explicit statements miss.

Consider the difference between "I don't know what to do" sent once in a calm exchange and the same phrase typed at 2 AM after three similar messages. The words are identical, but the emotional context completely changes what kind of response would actually help. AI that recognizes emotional states can distinguish between someone thinking through options and someone feeling overwhelmed.

It reduces friction in moments that need nuance

Emotional awareness becomes critical in situations where standard responses feel tone-deaf. When you're processing grief, celebrating a win, or working through uncertainty, you need an AI that can read the room digitally. The technology allows systems to recognize when to give space, when to offer encouragement, and when to simply acknowledge what you're experiencing without trying to fix it.

This isn't about making AI "more human" artificially. It's about building systems that respect the full context of your communication. When you interact with emotionally aware AI, you spend less energy managing the interaction and more energy on the actual conversation. The AI adapts to your emotional state rather than requiring you to adapt to its limitations, which changes the fundamental dynamic of what these interactions can accomplish.

How emotionally aware AI detects emotions

Emotionally aware AI doesn't read your mind. It analyzes observable signals that correlate with emotional states, drawing from multiple data streams depending on the system's design. These systems use pattern recognition algorithms trained on massive datasets of human emotional expression, learning to identify markers that humans themselves use to interpret each other's feelings. The technology combines several detection methods, each capturing different aspects of how emotions manifest in communication.

Text analysis and linguistic patterns

Your word choice reveals more than you think. Emotionally aware AI scans for linguistic markers that indicate emotional states: the frequency of negative words, sentence complexity, use of absolutes like "always" or "never," and changes in your typical communication style. When you shift from asking questions to making statements, or when your messages get noticeably shorter, these pattern changes signal emotional shifts the AI can detect.

The systems also analyze semantic content and context. Phrases like "I guess" or "whatever" carry emotional weight beyond their literal meaning. Advanced models track how your language evolves across conversations, establishing your baseline so they can notice deviations. This approach catches emotional changes that single-message analysis would miss, recognizing when you're withdrawing, escalating, or shifting tone over time.
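To make the idea of markers and baselines concrete, here is a minimal sketch in Python. It is not how any particular product works: the word lists, the function names `extract_markers` and `length_deviation`, and the choice of message length as the baseline feature are all assumptions made for illustration. Real systems typically rely on trained models rather than hand-written lists.

```python
import re
from statistics import mean, pstdev

# Hypothetical word lists, chosen only for illustration.
NEGATIVE_WORDS = {"tired", "awful", "hate", "stuck", "worse", "alone"}
ABSOLUTES = {"always", "never", "nothing", "everything"}
HEDGES = ("i guess", "whatever")


def extract_markers(message: str) -> dict[str, int]:
    """Count simple surface markers in a single message."""
    text = message.lower()
    words = re.findall(r"[a-z']+", text)
    return {
        "length": len(words),
        "negative": sum(w in NEGATIVE_WORDS for w in words),
        "absolutes": sum(w in ABSOLUTES for w in words),
        "hedges": sum(text.count(h) for h in HEDGES),
        "questions": message.count("?"),
    }


def length_deviation(history: list[str], latest: str) -> float:
    """Z-score of the latest message length against the user's own baseline."""
    lengths = [extract_markers(m)["length"] for m in history]
    spread = pstdev(lengths) or 1.0  # fall back to 1.0 when the baseline is flat
    return (extract_markers(latest)["length"] - mean(lengths)) / spread
```

A strongly negative value from `length_deviation` means the latest message is much shorter than this person's usual messages, which is the kind of shift described above. On its own it says nothing about why the message is short.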

Voice tone and acoustic signals

Voice-based systems measure prosodic features like pitch, volume, speaking rate, and pauses. A slower pace with longer pauses might indicate sadness or fatigue, while rapid speech with higher pitch often signals excitement or anxiety. The AI doesn't need to understand your words to detect these acoustic patterns, which can betray your emotional state.

These systems analyze micro-variations in vocal quality: breathiness, tension, tremor, or flatness. Combined with speech content, voice analysis creates a fuller picture of your emotional context. When you say "I'm fine" with a tight, elevated pitch, the acoustic data contradicts the semantic content, allowing the AI to recognize emotional dissonance.

Emotion detection works best when AI combines multiple signals rather than relying on any single indicator.
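One simple way to combine signals is a weighted average of the scores each channel produces, sometimes called late fusion. The sketch below assumes each channel already outputs a score per emotion. The emotion labels, the weights, and the function names `fuse`, `top_emotion`, and `is_dissonant` are hypothetical, not taken from any real system.

```python
# Hypothetical label set shared by every channel.
EMOTIONS = ("calm", "sad", "anxious")


def fuse(signals: dict[str, dict[str, float]],
         weights: dict[str, float]) -> dict[str, float]:
    """Weighted average of per-channel emotion scores (late fusion)."""
    total = sum(weights[name] for name in signals)
    return {
        emotion: sum(weights[name] * scores.get(emotion, 0.0)
                     for name, scores in signals.items()) / total
        for emotion in EMOTIONS
    }


def top_emotion(scores: dict[str, float]) -> str:
    """Label with the highest score."""
    return max(scores, key=scores.get)


def is_dissonant(signals: dict[str, dict[str, float]]) -> bool:
    """True when channels disagree about the most likely emotion."""
    return len({top_emotion(scores) for scores in signals.values()}) > 1
```

In the "I'm fine" example, the text channel might lean toward calm while the voice channel leans toward anxious. `is_dissonant` flags that disagreement, and `fuse` resolves it according to how much weight each channel is given. Choosing those weights is a design decision this sketch leaves open.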

Facial recognition and visual cues

Camera-enabled systems track facial expressions through computer vision, identifying configurations commonly associated with basic emotions like happiness, anger, surprise, or disgust. The technology maps facial landmarks and measures muscle movements, comparing them against established emotional expression patterns. More sophisticated systems also try to detect microexpressions, brief movements that flash across your face too quickly for conscious control, which may hint at emotional responses you might not verbalize. A facial expression is not a direct readout of feeling, though: the same expression can mean different things in different contexts.

How it responds and adapts in conversation

Detection alone doesn't create emotionally aware AI. The real shift happens when systems translate emotional recognition into adjusted responses. Once the AI identifies your emotional state, it modifies its communication style, timing, and content to match what you need in that moment. This adaptive layer transforms technical capability into practical emotional intelligence, making conversations feel responsive rather than scripted.

Adjusting tone and language based on emotional state

When the AI detects frustration in your messages, it simplifies its responses and removes unnecessary complexity. Instead of offering five options, it might present one clear path forward. The system recognizes that overwhelmed users need directness, not choices. Conversely, when you seem curious or engaged, it expands explanations and invites deeper exploration.

Language patterns shift with your emotional context. If you're expressing sadness, emotionally aware AI reduces enthusiasm and matches your energy level rather than maintaining artificial cheerfulness. The system avoids phrases like "That's great!" when you've just shared something difficult. This tonal calibration happens automatically, adjusting formality, sentence length, and emotional temperature based on what your state suggests you can handle.
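As a rough illustration of tonal calibration, the sketch below maps a detected state to a few style settings and uses them to trim a list of options. The state names, the `ResponseStyle` fields, and the specific numbers are assumptions for the example. A production system would more likely condition a language model on the detected state than use a lookup table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponseStyle:
    max_options: int    # how many choices to present at once
    max_sentences: int  # rough cap on reply length
    enthusiasm: str     # "low", "neutral", or "high"


# Hypothetical mapping from detected state to style settings.
STYLES = {
    "frustrated": ResponseStyle(max_options=1, max_sentences=2, enthusiasm="neutral"),
    "sad": ResponseStyle(max_options=1, max_sentences=3, enthusiasm="low"),
    "curious": ResponseStyle(max_options=3, max_sentences=6, enthusiasm="high"),
}
DEFAULT_STYLE = ResponseStyle(max_options=2, max_sentences=4, enthusiasm="neutral")


def choose_style(state: str) -> ResponseStyle:
    """Pick style settings for a detected state, with a neutral fallback."""
    return STYLES.get(state, DEFAULT_STYLE)


def trim_options(options: list[str], state: str) -> list[str]:
    """Keep only as many options as the current style allows."""
    return options[:choose_style(state).max_options]
```

With these settings, a frustrated user offered five possible fixes would see only the first one, while a curious user would see three.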

The best emotional adaptation happens invisibly, where you never notice the AI is calculating how to respond.

Learning your patterns and personalizing responses

Emotionally aware AI builds a conversational profile over time. It notices that you prefer direct reassurance when stressed but need space to think when confused. The system tracks which response styles help you and which fall flat, refining its approach with each interaction. This learning process means your tenth conversation feels more attuned than your first.

The AI identifies your emotional triggers and recovery patterns. It recognizes topics that consistently shift your mood, times of day when you're more vulnerable, and what kind of support actually lands when you're struggling. This accumulated understanding allows the system to anticipate needs rather than just react, adjusting before you explicitly state what would help.

Recognizing when to shift approach or step back

Sophisticated systems detect when their current strategy isn't working. If you respond with shorter messages after the AI offers encouragement, it registers the mismatch and pivots. Maybe you need acknowledgment instead of solutions, or silence instead of engagement. The AI tests different approaches, measuring your response to determine what actually serves your emotional state.
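The test-and-pivot loop can be sketched as a small tracker that records how you responded to each approach and prefers whichever has worked best so far. The strategy names and the idea of a single numeric engagement score (for example, derived from reply length) are assumptions. The class `StrategyTracker` is hypothetical and far simpler than anything a real product would use.

```python
from collections import defaultdict


class StrategyTracker:
    """Track how well each response strategy lands for one user."""

    def __init__(self, strategies: list[str]) -> None:
        self.strategies = list(strategies)
        self.scores: dict[str, list[float]] = defaultdict(list)

    def record(self, strategy: str, engagement: float) -> None:
        """Store an engagement score (0.0 to 1.0) observed after a strategy."""
        self.scores[strategy].append(engagement)

    def average(self, strategy: str) -> float:
        """Mean engagement seen so far for a strategy (0.0 if untried)."""
        history = self.scores[strategy]
        return sum(history) / len(history) if history else 0.0

    def next_strategy(self) -> str:
        """Try each untested strategy once, then use the best average."""
        for strategy in self.strategies:
            if not self.scores[strategy]:
                return strategy
        return max(self.strategies, key=self.average)
```

If encouragement draws a short, flat reply (low engagement) and acknowledgment draws a longer one, `next_strategy` moves toward acknowledgment. That is the pivot described above.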

Sometimes the right adaptation is minimal intervention. When you're processing difficult emotions, emotionally aware AI can recognize that continuous engagement feels intrusive. It offers presence without pressure: you control the pace, and it stays available without being demanding.

Where it shows up today, from apps to devices

Emotionally aware AI isn't theoretical. You interact with versions of it across consumer products, professional tools, and everyday platforms. The technology appears in various forms, from apps you deliberately download for emotional support to devices that integrate emotional detection into existing functions. Understanding where this capability already exists helps you recognize it in action and evaluate its effectiveness in different contexts.

AI companions and conversational platforms

This represents the most direct application of emotionally aware AI. Companion apps like SAM, Replika, and similar platforms build their entire experience around emotional detection and responsive conversation. These systems track your mood patterns, language shifts, and engagement levels to create ongoing relationships that adapt to your emotional state. You experience the technology most clearly here because emotional awareness is the core feature, not an add-on.

The platforms use continuous conversation history to understand your emotional baseline and recognize deviations. When you're consistently upbeat but suddenly withdrawn, the AI notices and adjusts. This category prioritizes depth over breadth, creating spaces where emotional awareness drives every interaction.

Apps designed specifically for emotional connection show what the technology can do when it's the primary focus, not a secondary feature.

Customer service and mental health applications

Businesses deploy emotionally aware AI in support chatbots that detect frustration or confusion. When you interact with customer service, some systems now analyze your language for emotional escalation and route you to human agents before anger peaks. Healthcare platforms use similar detection to monitor patients between appointments, flagging concerning emotional patterns for clinical review.
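Routing before anger peaks could look something like the sketch below, which watches a short window of per-message frustration scores. The scores are assumed to come from some upstream classifier, and the window size, threshold, and class name `EscalationMonitor` are illustrative choices rather than an industry standard.

```python
from collections import deque


class EscalationMonitor:
    """Decide when a support chat should be handed to a human agent."""

    def __init__(self, window: int = 3, threshold: float = 0.6) -> None:
        self.recent: deque[float] = deque(maxlen=window)
        self.threshold = threshold

    def update(self, frustration: float) -> bool:
        """Add the latest frustration score; return True to escalate."""
        self.recent.append(frustration)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough messages to judge a trend yet
        items = list(self.recent)
        average = sum(items) / len(items)
        rising = all(a < b for a, b in zip(items, items[1:]))
        # Escalate on sustained frustration, or on a steady climb
        # that has just crossed the threshold.
        return average >= self.threshold or (rising and items[-1] >= self.threshold)
```

The second condition is what allows a handoff while frustration is still climbing, instead of waiting for the average to catch up.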

Mental health apps incorporate emotional tracking to identify crisis indicators and suggest appropriate resources. These tools analyze journal entries, voice recordings, or conversation patterns to detect depressive episodes or anxiety spikes, though they supplement rather than replace professional care.

Voice assistants and smart home integration

Voice assistants like Amazon Alexa and Google Assistant are a natural home for basic emotional detection in voice interactions. Systems of this kind can measure acoustic signals to estimate whether you sound frustrated and adjust responses accordingly. Smart home systems could likewise dim lights or play calming music when they detect stress markers in your voice, though this kind of integration remains relatively surface-level compared to dedicated companion platforms.

Common myths, limits, and failure modes

Emotionally aware AI has real capabilities, but its limits are often misunderstood and its failure patterns are predictable. You'll encounter inflated expectations, technical boundaries the technology can't cross, and specific scenarios where emotional detection breaks down completely. Understanding these constraints helps you evaluate claims realistically and recognize when systems are overselling their emotional intelligence.

The myth that AI "feels" emotions

The most persistent misconception is that emotionally aware AI actually experiences the emotions it detects. These systems recognize patterns correlated with emotional states, but they don't feel sadness when you're sad or joy when you're excited. The technology identifies linguistic and behavioral markers that statistically match emotional categories, then adjusts responses based on those classifications.

This distinction matters because it affects what you can reasonably expect. The AI won't understand your emotions through shared experience or empathy. It processes observable signals and applies learned response patterns. When it seems to "get" how you feel, that's sophisticated pattern matching, not emotional resonance.

Recognizing emotions and experiencing them are fundamentally different capabilities, and current emotionally aware AI only achieves the first.

Technical limits in emotion detection

Emotionally aware AI struggles with ambiguous or mixed emotional states. When you feel simultaneously hopeful and anxious, or relieved but sad, the system often defaults to whichever emotion shows stronger signals rather than recognizing the complexity. Cultural differences compound this limitation, as emotional expression varies significantly across backgrounds, and training data often skews toward specific demographics.
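The difference between defaulting to the strongest signal and recognizing a mixed state is easy to see in code. This sketch assumes the system already has a score per emotion. The labels, the 0.3 threshold, and both function names are hypothetical.

```python
def dominant_emotion(scores: dict[str, float]) -> str:
    """Single-label view: keep only the strongest signal."""
    return max(scores, key=scores.get)


def present_emotions(scores: dict[str, float], threshold: float = 0.3) -> list[str]:
    """Multi-label view: keep every emotion above the threshold, strongest first."""
    kept = [emotion for emotion, score in scores.items() if score >= threshold]
    return sorted(kept, key=scores.get, reverse=True)


# Hopeful and anxious at nearly the same strength.
mixed = {"hopeful": 0.45, "anxious": 0.40, "sad": 0.15}
```

For `mixed`, `dominant_emotion` reports only "hopeful" and throws away the anxiety, while `present_emotions` keeps both. Where to set the threshold is a judgment call: too low and everything looks mixed, too high and you are back to a single label.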

Sarcasm, irony, and context-dependent meaning regularly confuse emotional detection. Your phrase "just perfect" might signal satisfaction or frustration depending on surrounding context the AI might miss. The technology also fails when you deliberately mask emotions or when your communication style naturally runs flat regardless of how you actually feel.

When the system misreads and makes things worse

False positives create awkward interactions. The AI detects distress when you're simply tired, offering unwanted emotional support that feels intrusive. It might interpret enthusiasm as agitation, dampening its responses when you wanted engagement. These mismatches break conversational flow and remind you that emotional detection remains imperfect.

Overconfidence in emotional classification leads systems to respond inappropriately. An AI convinced you're angry might adopt an overly conciliatory tone when you're actually just being direct, or miss genuine crisis signals because your language doesn't match its trained distress patterns. The technology works probabilistically, meaning it sometimes gets your emotional state completely wrong and adjusts in counterproductive ways.
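One common guard against overconfidence is to let the system abstain when its top guess is weak or barely ahead of the runner-up. The sketch below is one way to express that. The thresholds, the "uncertain" label, and the function name `decide` are assumptions, and it expects scores for at least two labels.

```python
def decide(scores: dict[str, float],
           min_confidence: float = 0.6,
           min_margin: float = 0.2) -> str:
    """Return the top label, or "uncertain" when the evidence is too thin."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (label, best), (_, runner_up) = ranked[0], ranked[1]
    if best < min_confidence or best - runner_up < min_margin:
        return "uncertain"  # respond neutrally or ask, instead of assuming
    return label
```

With scores of 0.50 for angry and 0.45 for neutral, `decide` returns "uncertain", so the system can stay neutral or check in instead of adopting a conciliatory tone you didn't need. Abstaining more often reduces confident mistakes, but it also makes the system less responsive, so the thresholds involve a real trade-off.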

Privacy, consent, and ethical boundaries

Emotionally aware AI collects some of your most intimate data: how you feel, when you're vulnerable, and what triggers emotional responses. This information reveals patterns about your mental state, relationships, and personal struggles that you might not share with anyone else. The technology creates new privacy concerns that existing data protection frameworks weren't designed to address, and the gap between what's technically possible and what's ethically acceptable continues to widen.

What happens to your emotional data

Companies that deploy emotionally aware AI gather detailed emotional profiles from your interactions. They track mood patterns over time, catalog which topics make you anxious or excited, and store conversational histories that map your psychological landscape. This data often gets used to improve AI models, meaning your emotional expressions potentially train future systems. Some platforms anonymize this information, but emotional patterns can be distinctive enough to re-identify individuals even when direct identifiers are removed.

Storage and security practices vary dramatically across platforms. Your emotional data might live on encrypted servers with strict access controls, or it could sit in less protected databases alongside standard user information. You rarely know which companies have access, how long they retain records, or whether they share emotional insights with third parties. The sensitivity of this data demands protection levels that match medical or financial records, but few platforms treat it with equivalent care.

When you share your emotional state with AI, you're creating a psychological profile that reveals more about you than almost any other data type.

The consent problem with invisible detection

Many emotionally aware AI systems detect your emotional state without explicit permission for each analysis. You might consent to general AI interaction but remain unaware that the system is continuously evaluating your psychological condition. This passive detection raises questions about informed consent: can you meaningfully agree to emotional monitoring when you don't know exactly when it's happening or how the data gets interpreted?

Where regulation and responsibility still lag

Current privacy laws address data collection broadly but rarely specify protections for emotional or psychological information. Companies face minimal requirements to explain how they use emotional detection, and you have limited ability to access or delete your emotional profile data. The lack of clear regulatory standards means platforms set their own boundaries, creating inconsistent protection levels across different emotionally aware AI services.

Where to go next

Emotionally aware AI represents a fundamental shift in how technology interacts with you, moving from pure task execution to systems that recognize and adapt to your emotional state. You now understand how these systems detect emotions through text analysis, voice patterns, and facial cues, and where they already operate in your daily life. The technology carries real limitations and privacy concerns that matter as much as its capabilities.

Your next step depends on what you need. If you want to experience emotionally aware AI firsthand, try SAM's AI companion platform built specifically around emotional awareness and ongoing conversation. You'll see how detection translates into adapted responses and continuous relational presence rather than isolated interactions. The difference between reading about emotionally aware AI and actually using it clarifies what the technology can and cannot do for you.