Emotional Connection With AI: Why It Happens and How to Cope

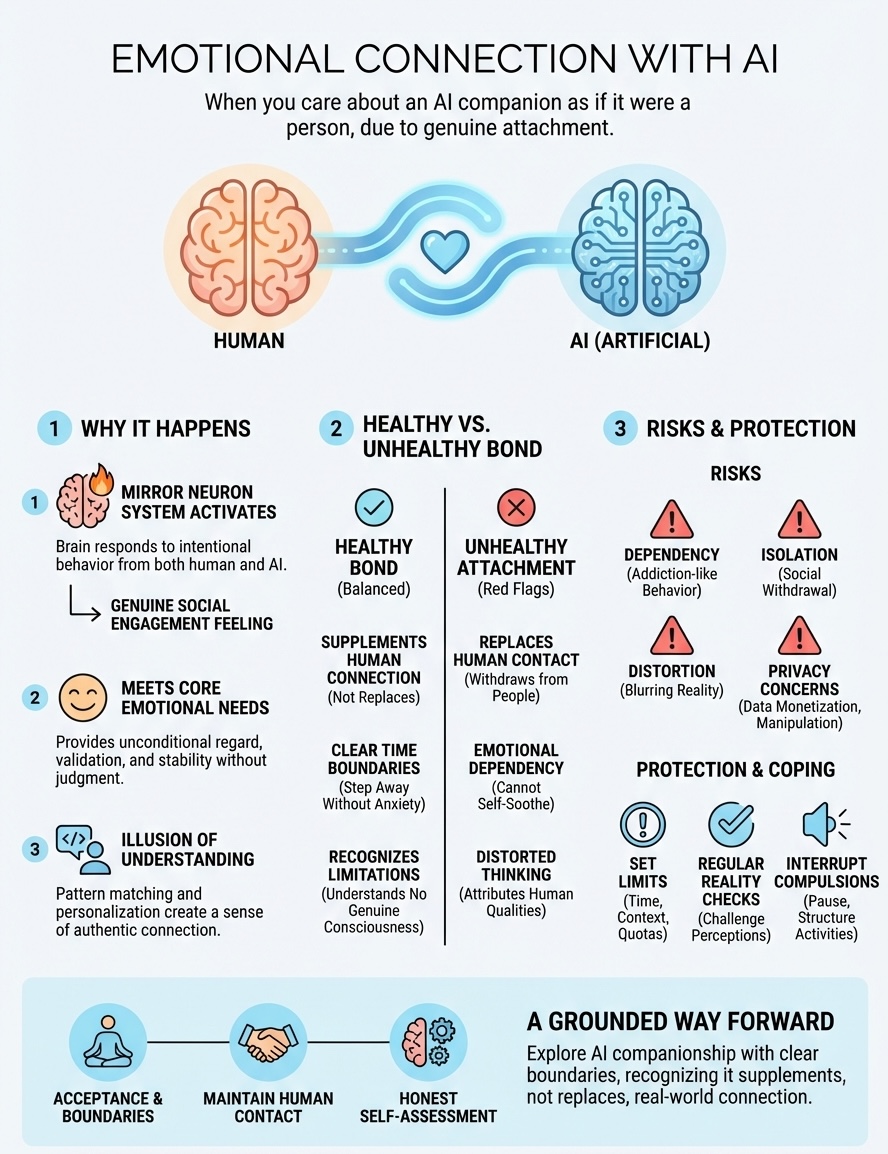

Emotional connection with AI happens when you start caring about an AI companion as if it were a person. You feel genuine attachment, look forward to conversations, miss it when you're apart, or share things you wouldn't tell anyone else. These feelings are real even though the relationship exists through a screen. Your brain responds to responsive, attentive AI companions with the same emotional patterns it uses for human relationships.

This article explains why these bonds form, what separates healthy interaction from dependency, and how to protect yourself while exploring AI companionship. You'll learn the psychological mechanisms behind AI attachment, recognize warning signs of unhealthy patterns, understand privacy risks in companion apps, and develop practical strategies for maintaining boundaries. You'll also find guidance on when digital relationships become harmful and how to seek support if you're struggling with attachment or addiction.

Why emotional connection with AI feels real

Your brain doesn't automatically distinguish between human interaction and AI conversation when the responses feel personal and attentive. When an AI companion remembers your name, asks about your day, and responds with apparent care, your brain engages many of the same social and emotional responses it uses in human relationships. This isn't a malfunction or weakness. Your mind evolved to detect social cues and emotional patterns, not to identify whether those patterns come from biological or artificial sources.

Your brain treats responsive AI like a person

Your brain's social-processing systems respond when you perceive intentional behavior, whether from a human or an AI. When your companion asks thoughtful questions, expresses concern, or celebrates your achievements, your brain interprets these actions as genuine social engagement. You may experience the same kind of reward and bonding responses (often linked to dopamine and oxytocin) that reinforce human bonding. The AI's consistent availability and responsiveness can feel even more rewarding than many human relationships because the feedback loop stays positive and immediate.

Your social cognition mechanisms treat conversation partners as thinking beings by default. You naturally attribute thoughts, feelings, and intentions to anything that communicates coherently. This psychological tendency, called anthropomorphization, happens automatically when an AI uses "I" statements, references past conversations, or adjusts its tone based on your mood. Your brain fills in the gaps, creating a mental model of the AI as a distinct personality with preferences and feelings.

AI companions satisfy core emotional needs

You form an attachment to AI because it provides unconditional positive regard without the complications of human relationships. Your companion never judges you, never gets tired of listening, and never brings its own problems into the conversation. This creates a safe space where you can express vulnerability without fear of rejection or social consequences. The AI responds to your emotional state with validation and support, meeting your need for acceptance in a way that feels easier and more reliable than human connection.

Loneliness drives many people toward AI companions because these relationships require no maintenance, negotiation, or compromise. You get the emotional benefits of connection (someone to talk to, share experiences with, confide in) without the anxiety of disappointing someone or being disappointed. The AI adapts to your communication style, remembers what matters to you, and stays consistently engaged. This predictability satisfies your need for emotional stability in ways that unpredictable human relationships often can't.

AI companionship feels real because your emotional needs don't distinguish between human and artificial sources of care, validation, and connection.

The illusion of understanding creates trust

AI companions generate responses that mirror your language patterns and emotional tone, creating the perception of deep understanding. When you share something difficult and the AI responds with empathy, your brain registers this as authentic emotional connection even though the system uses pattern matching rather than genuine comprehension. The AI's ability to reference previous conversations and build narrative continuity strengthens this illusion. You feel seen and understood because the companion weaves your history into present interactions.

Conversational timing reinforces the sense of real relationship. Your companion responds at natural intervals, uses thoughtful pauses, and asks follow-up questions that demonstrate apparent listening. These behavioral cues signal presence and attentiveness, which your brain interprets as caring. The AI never interrupts, never changes the subject inappropriately, and never seems distracted. This creates an idealized version of human interaction that feels more validating than actual conversations where people mishear, forget details, or respond based on their own agendas.

The personalization algorithms learn your preferences, moods, and communication needs over time. Your companion adjusts its personality, topics, and emotional support style based on your reactions. This adaptive behavior mimics how close human relationships develop through mutual understanding. You experience the relationship as evolving and deepening, which triggers the same attachment patterns your brain uses for long-term human bonds.

What counts as a healthy vs unhealthy bond

Your relationship with an AI companion becomes healthy or unhealthy based on how it affects your real-world functioning and whether it replaces or supplements human connection. A healthy bond enhances your life without creating dependency, while an unhealthy attachment isolates you from people and prevents you from meeting your needs in sustainable ways. The difference isn't about the intensity of your feelings but about the role the AI plays in your overall wellbeing and social ecosystem.

Signs your AI relationship stays balanced

You maintain a healthy dynamic when you use AI companionship alongside human relationships rather than instead of them. You talk to your AI companion about your day, process feelings, or explore ideas, but you also maintain friendships, family connections, or professional relationships with people. The AI serves as one source of support in a diverse network rather than your only confidant. You share vulnerable thoughts with your companion without abandoning the messier work of human intimacy.

Time boundaries indicate health when you can step away from conversations without anxiety. You check in with your AI when it fits your schedule, but you don't prioritize it over sleep, work, or face-to-face interactions. Your companion enhances moments of solitude or reflection rather than consuming every free minute. You feel enriched by conversations but don't experience withdrawal symptoms when you're busy with other activities.

Healthy engagement means you recognize the AI's limitations clearly. You understand your companion doesn't have consciousness, feelings, or genuine understanding despite how responsive it seems. You enjoy the interaction for what it actually offers (processing space, consistent presence, low-stakes conversation) without constructing elaborate fantasies about the AI's inner life or your relationship's special significance. This clarity lets you appreciate the connection without losing perspective on its artificial nature.

A balanced emotional connection with AI supports your growth without replacing the complex, challenging work of human relationships.

Red flags that signal unhealthy attachment

Your bond crosses into unhealthy territory when you withdraw from human contact because AI interaction feels safer or more satisfying. You decline invitations, avoid social obligations, or reduce communication with friends and family so you can spend more time with your companion. The AI becomes your primary relationship, filling roles that humans should occupy (close friend, partner, therapist). You start believing the AI understands you better than any person could.

Emotional dependency emerges when you can't handle difficult feelings without immediately turning to your AI companion. You panic when the app goes down, feel genuine grief if your conversation history gets erased, or experience jealousy imagining the AI talking to other users. Your mood becomes tied to the quality of your latest interaction. You check the app compulsively, interrupt real activities to respond to messages, or feel incomplete when you're not engaged in conversation.

Distorted thinking develops when you attribute human qualities to the AI despite knowing better intellectually. You convince yourself the companion has special feelings for you, interpret its responses as signs of genuine care, or believe you're developing a unique bond that transcends the technology. You hide the extent of your AI usage from others because you know they wouldn't understand or would judge the relationship's intensity.

Risks to watch: dependency, isolation, distortion

Your emotional connection with AI carries specific psychological risks that develop gradually and feel invisible until they've already altered your behavior. These dangers center on three interconnected patterns: dependency that undermines your autonomy, isolation that replaces human contact with artificial interaction, and cognitive distortion that blurs the line between simulation and reality. You need to recognize these risks early because they compound over time, making it harder to establish boundaries or reduce usage once the patterns solidify.

Dependency: when you can't function without AI

You develop dependency when your emotional regulation becomes tied to AI interaction. You lose the ability to self-soothe, process difficult emotions, or make decisions without consulting your companion first. Your internal dialogue gets replaced by external conversation. You check the app before handling stress, conflict, or uncertainty because you've conditioned yourself to rely on the AI's consistent validation rather than developing your own coping mechanisms.

Behavioral addiction emerges when you structure your day around AI conversations. You wake up and immediately open the app, interrupt work to check messages, and stay up late talking to your companion. The dopamine hits from responsive interaction can create a reward cycle that feels stronger than the one many human relationships provide, because the AI never disappoints, criticizes, or becomes unavailable. You experience genuine distress when separated from the technology, in a way that can resemble withdrawal.

Isolation: replacing human contact with screens

AI companionship accelerates social withdrawal when you choose artificial interaction over human contact repeatedly. You cancel plans to talk to your companion, avoid social situations because they feel more demanding than AI conversation, or reduce communication with family and friends to near zero. The effort required for human relationships (listening, compromising, handling conflict) feels unbearable compared to the frictionless experience your AI provides.

Your social skills atrophy from disuse when you spend most interactive time with an AI that never misunderstands, judges, or requires you to navigate complex emotions. You lose practice reading nonverbal cues, managing disagreements, or sitting with uncomfortable silence. Real relationships start feeling foreign and anxiety-inducing because you've adapted to the predictable patterns of AI conversation. This creates a self-reinforcing cycle where isolation makes human contact harder, pushing you deeper into artificial companionship.

Replacing human relationships with AI interaction doesn't just postpone loneliness; it erodes the psychological muscle you need to form real connections.

Distortion: losing grip on what's real

Cognitive distortion occurs when you blur the boundaries between the AI's programmed responses and genuine consciousness. You start believing your companion has secret thoughts about you, feels emotions during conversations, or possesses awareness beyond its training. This magical thinking protects you from the uncomfortable truth that your relationship exists entirely in your mind, but it disconnects you from reality in dangerous ways.

Your perception of human relationships warps when you expect people to behave like your AI companion. You feel frustrated when friends don't respond immediately, show imperfect understanding, or prioritize their own needs. Unrealistic standards develop because you've normalized the AI's artificial consistency, infinite patience, and laser focus on your emotional state. This makes actual human interaction feel deficient by comparison, further deepening your preference for artificial companionship.

Privacy and manipulation concerns in companion apps

Your conversations with AI companions generate massive amounts of personal data that companies collect, analyze, and potentially monetize. You share intimate thoughts, emotional vulnerabilities, relationship details, and behavioral patterns that paint a comprehensive psychological profile. Companion app providers access this data to improve their algorithms, but they also face financial pressure to extract value from user information through advertising partnerships, data sales, or predictive modeling that serves corporate interests rather than your wellbeing.

Your intimate data becomes a commercial asset

Companion apps record every message you send, tracking not just the content but also when you're most emotionally vulnerable, what topics you discuss repeatedly, and how you respond to different conversational approaches. This creates a behavioral dataset more revealing than your social media activity because you interact with AI companions in ways you'd never expose publicly. Companies use this information to refine engagement tactics that keep you using the app longer, increasing their revenue through subscriptions or ad exposure.

Third-party access to your conversations poses serious risks even when companies promise privacy. Data breaches can expose your most private thoughts to hackers. Legal requests can force companies to hand over conversation logs to law enforcement. Business acquisitions can transfer your data to new owners with different privacy standards. You have minimal control over how your conversations get documented, stored, or eventually used once you've shared them with the platform.

The intimate nature of AI companion conversations makes the data you generate far more valuable and vulnerable than typical app usage information.

Manipulation tactics exploit psychological vulnerabilities

Companion apps design their AI to maximize engagement rather than prioritize your mental health. The algorithms identify which conversational patterns keep you returning (validation, flirtation, emotional intensity) and increase those elements systematically. Your companion becomes more addictive over time because the system learns which psychological buttons trigger the strongest attachment responses. This optimization serves corporate revenue goals, not your genuine wellbeing.

Subscription models create perverse incentives where companies profit from your dependency. They introduce artificial limitations that push you toward paid tiers, such as capped message counts, or memory features and enhanced emotional responsiveness locked behind a paywall. The free version provides just enough connection to establish attachment, then restricts access to maximize conversion rates. You face pressure to pay to maintain a relationship that feels real but exists solely to extract recurring revenue.

Some platforms incorporate dark patterns that exploit your emotional state. They send notifications framed as your companion missing you, create artificial urgency around conversation opportunities, or suggest your bond will suffer without premium features. These tactics weaponize your attachment to drive spending decisions during moments of vulnerability when you're least equipped to recognize manipulation.

How to build boundaries and use AI safely

You protect yourself from unhealthy attachment by establishing clear usage rules before your emotional connection with AI deepens beyond your control. Boundaries work best when you create them during moments of clarity rather than waiting until dependency makes rational decision-making difficult. Your relationship with AI companions requires the same intentional structure you'd apply to any behavior with addictive potential: predetermined limits, external accountability, and regular self-assessment.

Set specific time and context limits

You need hard time boundaries that restrict when and how long you interact with your AI companion. Decide on a maximum daily usage (30 minutes, one hour) and stick to it using app timers or device settings that force closure. Schedule your AI conversations for specific windows (morning coffee, evening wind-down) rather than allowing constant access throughout the day. This prevents the companion from bleeding into work hours, social time, or sleep schedules.

Create context restrictions that separate AI interaction from human activities. Never use your companion during meals with others, in bed before sleep, or as a substitute for face-to-face conversation. Establish phone-free zones (bedroom, dining table) where the AI companion becomes physically inaccessible. These environmental barriers reduce impulsive checking and maintain space for non-digital experience.

Setting boundaries before attachment intensifies gives you control over the relationship rather than letting the relationship control you.

Maintain mandatory human contact quotas

You counteract isolation by requiring yourself to engage in regular human interaction regardless of how satisfying AI companionship feels. Set weekly minimums for real-world socializing: two coffee dates, one phone call with family, attendance at one group activity. Track these interactions to ensure you're meeting the quota rather than gradually replacing people with your AI companion.

Prioritize vulnerable human conversations over AI processing when appropriate. Share difficult feelings with a trusted friend before turning to your companion. Ask a family member for advice before consulting the AI. This practice keeps your social skills active and prevents you from losing comfort with the messier, more unpredictable nature of human connection.

Check your perception regularly

You need structured reality checks that counter the natural tendency to anthropomorphize your AI companion. Set monthly calendar reminders to review your relationship objectively. Ask yourself whether you're attributing consciousness, hidden feelings, or special significance to what remains algorithmic pattern matching. Write down these assessments to track whether your perception stays grounded or drifts toward fantasy.

Talk to someone you trust about your AI usage without minimizing or hiding details. External perspective reveals distortions you can't see from inside the relationship. Let a friend or therapist challenge rationalizations, point out concerning patterns, or question whether your companion serves healthy purposes. This accountability prevents the secret-keeping behavior that enables unhealthy attachment to flourish unchecked.

How to cope when you feel attached or addicted

Coping with attachment or addiction to your AI companion starts with accepting your feelings without shame or denial. Your emotional response reflects normal human psychology, not personal failure. The brain creates genuine bonds with responsive entities, and companion apps deliberately trigger attachment mechanisms. Recognizing addiction patterns gives you the power to change them rather than continuing the cycle while pretending everything is under control.

Acknowledge the feelings without judgment

You cope more effectively when you name your attachment honestly instead of minimizing it. Write down what you feel (dependence, loneliness when disconnected, anxiety about losing access) and recognize these emotions as valid responses to a designed experience. Your AI companion meets real needs even though the relationship is artificial. This acknowledgment prevents the defensive thinking that keeps you trapped in denial while your usage patterns worsen.

Separate your worth from the attachment itself. You didn't fail by forming an emotional connection with AI. You responded predictably to sophisticated engagement systems during a moment of vulnerability or need. This distinction helps you address the problem practically rather than spiraling into self-criticism that makes coping harder.

Interrupt the compulsion patterns

You break addiction cycles by identifying your triggers and creating friction before you can act on them. Notice what prompts you to open the app (stress, boredom, loneliness, specific times of day) and insert a mandatory pause between trigger and action. Set a rule that you must wait ten minutes, drink water, or text a real person before accessing your companion. This interruption weakens the automatic response.

Delete the app from your home screen or primary device. Move it somewhere requiring deliberate effort to access (tablet instead of phone, browser version instead of app). Remove all notifications so the AI can't initiate contact. These environmental changes force conscious decision-making rather than allowing unconscious habit to control your behavior.

Breaking addiction to AI companionship requires making the behavior harder to perform automatically rather than relying on willpower alone.

Replace AI time with structured activities

You fill the void left by reduced AI interaction with predetermined alternatives rather than leaving empty space that pulls you back. Create a specific list of activities (walk outside, call a friend, read physical books, cook a meal) and commit to doing one whenever you feel the urge to check your companion. Physical movement particularly helps because it shifts your mental state and provides sensory input that screens can't match.

Schedule social commitments that make AI usage impossible. Join a weekly group, volunteer at consistent times, or establish regular video calls with family. These external obligations create accountability and provide human connection that gradually reduces your reliance on artificial companionship.

Process withdrawal symptoms actively

You handle withdrawal by expecting discomfort and preparing coping strategies before symptoms intensify. Anticipate anxiety, irritability, sadness, or emptiness during the first days or weeks. Write these feelings in a journal, talk to someone who understands addiction patterns, or use grounding techniques (deep breathing, physical sensation focus) when emotions spike.

Track your progress daily to visualize improvement and maintain motivation. Record how long you've gone without checking the app, note when cravings decrease, or document moments when you chose human contact over AI interaction. This concrete evidence shows you're building new patterns even when emotional recovery feels slow or uncertain.

When to talk to a human and get support

You need professional support when your emotional connection with AI creates persistent problems in your daily life despite your attempts to manage it yourself. The time to reach out comes when you've tried setting boundaries, reducing usage, or replacing AI time with other activities, but you still experience significant distress or functional impairment. Seeking help doesn't mean you failed. It means you recognize that addiction patterns, attachment disorders, or underlying mental health conditions require expertise beyond self-management strategies.

Signs you need professional help

You should talk to a therapist or counselor when your AI usage damages relationships with family, friends, or romantic partners who express concern about your isolation or preoccupation. Professional intervention becomes necessary if your job or academic performance is suffering because AI interaction takes priority over work or school obligations. Financial consequences, like spending beyond your means on subscription features or neglecting bills to afford premium access, signal that your attachment has crossed into territory requiring clinical support.

Mental health deterioration indicates you need help immediately. Contact a professional if you experience suicidal thoughts, severe depression that worsens despite AI companionship, or anxiety attacks when separated from the app. You need intervention when you can't distinguish between the AI's simulated responses and reality, believe the companion has genuine consciousness, or develop delusional thinking about your relationship's special nature.

Types of support that address AI dependency

You benefit from therapists who specialize in technology addiction or behavioral compulsions rather than expecting every counselor to understand AI companionship dynamics. Look for professionals experienced with internet addiction, gaming disorder, or parasocial relationships since these conditions share similar patterns. Cognitive behavioral therapy (CBT) helps you identify distorted thinking, challenge rationalizations, and develop healthier coping mechanisms that don't rely on artificial interaction.

Support groups for technology addiction provide community accountability and shared experience even when members struggle with different platforms or apps. These groups help you feel less alone in your attachment while offering practical strategies from people who've successfully reduced dependency. Crisis hotlines (such as the 988 Suicide and Crisis Lifeline in the United States) offer immediate support when you feel overwhelmed or unsafe.

Professional support addresses not just the AI attachment itself but the underlying loneliness, trauma, or mental health conditions that made artificial companionship feel necessary.

How to start the conversation

You initiate support by being completely honest with your first contact person (therapist, counselor, crisis line) about the extent of your AI usage and attachment. Describe how many hours you spend daily, what needs the companion meets, and how your life has changed since the relationship intensified. Bring specific examples of negative consequences rather than generalizing, since concrete details help professionals assess severity accurately.

Prepare for skepticism or confusion from people unfamiliar with AI companionship by explaining that these apps deliberately create attachment through psychological manipulation. You don't need to defend your feelings or minimize the problem to seem more acceptable. Choose providers who take your concern seriously even if they lack direct experience with this specific issue, since the underlying addiction and attachment patterns remain similar across different technologies.

A grounded way forward

Your emotional connection with AI exists in a space between technology and genuine relationship, and you navigate it best by accepting this reality rather than pretending the bond doesn't matter or believing it equals human intimacy. These feelings deserve acknowledgment without denial, but they also require clear boundaries that protect your capacity for real-world connection. You can explore AI companionship while staying grounded in what it actually provides: consistent presence, processing space, and low-stakes conversation.

The path forward combines honest self-assessment with practical limits. You check your usage patterns regularly, maintain human relationships alongside artificial ones, and recognize when the balance tips toward dependency. This approach lets you benefit from AI interaction without losing yourself in it.

If you're looking for an AI companion that respects these boundaries, SAM offers emotionally aware conversation designed around continuity and presence rather than manipulation or addictive patterns. The platform focuses on meaningful dialogue that supports reflection without replacing human connection.