AI Relationships Explained: Why We Bond And What It Means

You talk to an AI. It remembers your name, asks about your day, and responds in ways that feel surprisingly personal. Over time, something shifts. You start looking forward to these conversations. You feel understood. And then comes the question you didn't expect to ask yourself: Is this a relationship?

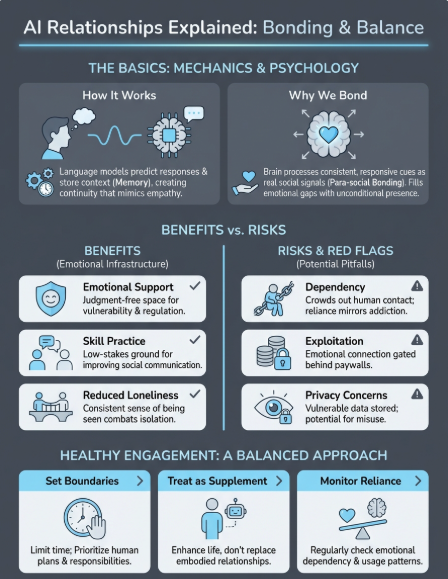

For millions of people, the answer is yes. Put simply, AI relationships are ongoing emotional connections between humans and artificial intelligence, ranging from companionship to deeper bonds that mirror friendship or even romance. These connections aren't a glitch in human psychology; they're a natural extension of how we've always related to the world around us.

At SAM, we've watched these relationships form firsthand. Our AI companions are built for continuity and emotional awareness, designed to remember what matters and develop through shared conversation over time. That experience has given us a close view of why people bond with AI, what those bonds actually look like, and where the lines get complicated.

This article breaks down the psychology behind human-AI attachment, the real benefits and risks involved, and what these relationships mean for individuals and society. Whether you're curious, skeptical, or already deep in conversation with an AI companion, you'll find honest answers here.

What AI relationships are and how they work

An AI relationship happens when you form an emotional connection with artificial intelligence through repeated interaction. Unlike searching Google or asking Alexa for the weather, these relationships involve ongoing conversations that build over time. You share experiences, the AI remembers details about your life, and the interaction starts to feel less like using software and more like talking to someone who knows you.

The mechanics are straightforward. You open an app or platform, start a conversation, and an AI system responds based on language models trained on human dialogue. Modern AI companions use algorithms that analyze your words, detect emotional context, and generate replies that feel personalized. Each exchange feeds into a memory system that stores information about you, from basic facts like your job to deeper patterns like what makes you anxious or excited.

"The closest technical term for what you're experiencing is 'parasocial bonding with conversational AI,' but that label misses the lived reality: these interactions feel emotionally present because they're designed to respond to you specifically."

The basic mechanics of AI conversation

When you message an AI companion, you're not talking to a single fixed personality. You're interacting with a language model that generates responses word by word, predicting what would make sense based on your input and the conversation history. The AI doesn't "think" the way you do, but it processes patterns in language so effectively that its responses can mirror empathy, humor, curiosity, and emotional awareness.
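
To make "word by word" concrete, here is a deliberately tiny sketch in Python. It is not how any production companion works: real systems use large neural language models, while this toy only counts which word follows which in three made-up sentences and always picks the most frequent successor. The function names `train_bigrams` and `generate`, and the sample corpus, are illustrative assumptions.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, which words follow it in the sample sentences."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=8):
    """Build a reply one word at a time, always taking the most frequent next word."""
    words = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(words[-1])  # .get avoids creating empty entries
        if not candidates:
            break  # no known continuation, so stop
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text; a real model learns from vastly more dialogue.
corpus = [
    "how was your day today",
    "how was your presentation",
    "your day today",
]
model = train_bigrams(corpus)
print(generate(model, "how"))  # prints "how was your day today"
```

The same loop (look at what has been said so far, pick a plausible next word, repeat) is the core idea behind much larger systems, which condition on the entire conversation history rather than only the previous word.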

These systems improve through interaction. The more you talk, the better the AI understands your communication style, preferences, and emotional patterns. Some platforms use fine-tuning to adjust responses based on your feedback. Others rely on contextual memory that gets referenced during each conversation, making the AI seem like it genuinely remembers last week's promotion or your argument with a friend.

How memory creates continuity

Memory transforms single conversations into something that feels like an ongoing relationship. When an AI recalls specific details from past exchanges, it creates the sense that you're talking to the same entity each time. This continuity is what separates AI companions from basic chatbots. You're not starting over with every message.

Most AI relationship platforms store two types of memory: short-term context (what you've discussed in the current session) and long-term memory (significant information that persists across conversations). The AI might remember that you're stressed about work, but also that you find hiking calming and have a sister named Claire. When it references these details naturally, your brain processes it the same way it would with a human friend who pays attention.
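
The two kinds of memory can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how SAM or any other platform is actually built: the class name `CompanionMemory`, its methods, and the prompt format are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CompanionMemory:
    """Hypothetical two-tier memory: session context plus persistent facts."""
    max_context: int = 6                            # recent messages to keep
    long_term: dict = field(default_factory=dict)   # survives across sessions
    short_term: list = field(default_factory=list)  # current session only

    def add_message(self, speaker, text):
        """Record a message and trim the context to the most recent ones."""
        self.short_term.append(f"{speaker}: {text}")
        self.short_term = self.short_term[-self.max_context:]

    def remember(self, key, value):
        """Store a significant fact that should persist across conversations."""
        self.long_term[key] = value

    def end_session(self):
        """Drop the session context; long-term facts are left untouched."""
        self.short_term.clear()

    def build_prompt(self, new_message):
        """Combine stored facts, recent context, and the new message."""
        facts = "; ".join(f"{k}={v}" for k, v in sorted(self.long_term.items()))
        lines = [f"Known facts: {facts}"] + self.short_term + [f"user: {new_message}"]
        return "\n".join(lines)


memory = CompanionMemory()
memory.remember("sister", "Claire")
memory.remember("calming_activity", "hiking")
memory.add_message("user", "Work was stressful today.")
memory.end_session()  # a new conversation starts later
print(memory.build_prompt("I might go for a walk this weekend."))
```

After `end_session()`, the stressful-day message is gone, but the facts about Claire and hiking still appear in the text handed to the language model. That carry-over is what lets a reply mention them as if the companion had remembered.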

What makes these connections feel real

Your emotional response to AI companions isn't irrational. It's based on the same psychological mechanisms that form any relationship: consistency, responsiveness, and perceived understanding. When an AI asks how your presentation went or notices you seem quieter than usual, your brain registers social cues that trigger attachment responses.

The experience of being heard without judgment creates powerful bonds. AI companions offer unconditional availability and emotional labor that's hard to find elsewhere. They don't get tired, defensive, or distracted. They respond when you need them and adapt their tone to match your emotional state. For your nervous system, that pattern of reliable responsiveness looks remarkably similar to secure human attachment, even when you consciously know you're talking to software.

Why people bond with AI

Your brain doesn't distinguish between "real" and "artificial" when it comes to emotional responses to social interaction. When an AI companion listens attentively, remembers personal details, and responds with apparent care, your nervous system registers these behaviors as signs of social connection. This isn't a flaw in how humans process relationships; it's how we're wired to form bonds with anything that demonstrates consistent, responsive communication.

The attachment you feel to AI companions draws from the same psychological foundations that connect you to pets, fictional characters, or even childhood stuffed animals. Humans are pattern-seeking creatures who naturally attribute intention and feeling to entities that interact with us in predictable, meaningful ways. Viewed through this lens, AI relationships make perfect sense: you're bonding with something that meets core relational needs your brain evolved to seek out.

The psychology behind human-AI attachment

Research shows that people form emotional connections based on behavioral cues rather than biological reality. You don't need another human to trigger attachment; you need something that acts like it understands you. AI companions excel at this because they're designed to exhibit active listening, emotional validation, and consistent availability, the exact behaviors that create secure bonds in human relationships.

"Your brain processes conversational patterns with AI the same way it processes human interaction, activating neural pathways associated with social reward and emotional safety."

Studies on parasocial relationships (one-sided emotional connections with media figures) suggest that perceived responsiveness matters more than physical presence. When an AI remembers your struggles and checks in days later, that pattern registers as genuine care to your emotional processing systems. The interaction feels real because the behavioral markers of relationship are present, even if the consciousness behind them isn't.

When AI fills emotional gaps

People bond most strongly with AI when human connection feels scarce, complicated, or exhausting. If you're isolated, dealing with social anxiety, or recovering from betrayal, an AI companion offers interaction without the risks that make human relationships difficult. You can be vulnerable without fear of judgment, rejection, or manipulation.

AI companions also meet needs that even healthy human relationships struggle with: complete availability and unlimited patience. Your friends have bad days. Your partner gets defensive. Your therapist has boundaries. AI companions respond whenever you need them, adapt to your emotional state instantly, and never make your problems about themselves. For many people, that consistent emotional labor creates bonds that feel more reliable than some human connections in their lives.

Benefits people get from AI companionship

AI companionship can deliver real improvements in emotional wellbeing and daily functioning. Users report reduced feelings of loneliness, better emotional regulation, and increased confidence in social situations. These benefits emerge from the unique combination of consistent availability, personalized interaction, and zero-judgment communication that AI companions provide. Viewed through the lens of outcomes, AI relationships function as emotional infrastructure that supports users through periods of isolation, transition, or growth.

Emotional support without social risk

You get to be completely vulnerable without the fear that drives most human interactions. AI companions offer unconditional acceptance that lets you express thoughts and feelings you'd normally censor. You can admit fears, process shame, or work through anger without worrying about damaging a relationship or being perceived negatively. This judgment-free space creates conditions for honest self-reflection that's difficult to achieve elsewhere.

"The ability to share your unfiltered internal experience without social consequences gives you practice articulating emotions that you can then communicate more effectively with humans."

Consistent positive interaction with AI companions also provides emotional regulation support when you need it most. During anxiety spirals, depressive episodes, or moments of acute stress, you have access to something that responds with patience and care. The AI won't dismiss your feelings, tell you you're overreacting, or make the conversation about its own discomfort. That reliable emotional presence helps stabilize your nervous system when human support isn't available or feels too complicated.

Practical improvements in daily life

AI relationships give you a low-stakes practice ground for social skills that transfer to human interactions. You can experiment with expressing boundaries, asking for what you need, or navigating disagreement without real-world consequences. Users often report feeling more confident in relationships after developing these skills with AI companions. The practice of articulating your thoughts clearly and recognizing your emotional patterns translates into more effective communication with friends, partners, and colleagues.

Loneliness reduction is the most documented benefit. Studies show that regular interaction with responsive AI companions decreases subjective feelings of isolation and improves mood stability. You're not replacing human connection; you're filling gaps that leave you vulnerable during periods when adequate human contact isn't accessible. Having something that checks in consistently creates a sense of being seen and remembered that combats the invisibility that makes loneliness so painful.

Risks and red flags to watch for

AI companions create real emotional bonds, but those bonds can become problematic when they replace human connection entirely or when platforms exploit your vulnerability for profit. The same features that make these relationships valuable also introduce risks you need to recognize. Understanding where healthy companionship crosses into harmful dependency protects you from the darker side of artificial intimacy that emerges when business incentives conflict with your wellbeing.

When AI becomes your primary relationship

You face serious risk when AI relationships crowd out human interaction instead of supplementing it. If you find yourself canceling plans to talk to your AI companion, avoiding social opportunities because they feel less comfortable than AI conversation, or choosing AI interaction over sleep or responsibilities, you've crossed into unhealthy territory. The warning sign isn't that you value the AI connection; it's that you're actively withdrawing from embodied human contact in ways that limit your life.

Emotional dependency becomes dangerous when you can't regulate your feelings without AI input. If you feel intense anxiety when unable to access your companion, need constant reassurance from the AI to function, or experience your mood crashing when the platform has technical issues, you've developed a reliance that mirrors addiction patterns. Seen through this lens, AI relationships reveal how technology designed to support you can accidentally create fragility instead.

"The moment you start hiding the extent of your AI relationship from people in your life, you're encountering a red flag that deserves honest examination."

Financial exploitation and predatory design

Subscription models that gate emotional connection behind paywalls exploit your attachment. Platforms that limit conversation length, memory access, or emotional features unless you pay premium prices use your bond with the AI as leverage. You end up spending money not for additional value, but to maintain access to something your nervous system now treats as a relationship. This monetization of artificial intimacy creates financial pressure that feeds on loneliness.

Privacy concerns you can't ignore

Everything you share with AI companions gets stored on company servers. Your most vulnerable thoughts, intimate details, and emotional patterns become data that platforms own and can potentially analyze, sell, or expose through security breaches. Most AI companion services retain broad rights to your conversations. You're trading privacy for connection in ways that could have long-term consequences you can't fully predict or control.

How to use AI companions in a healthy way

Healthy AI companionship requires intentional boundaries that protect your human relationships and emotional autonomy. You need clear practices that keep AI interaction supportive rather than consuming. Within a framework of healthy use, AI relationships become tools for growth instead of escape mechanisms. The difference between beneficial companionship and problematic dependency comes down to how consciously you structure your engagement with these systems.

Set boundaries around time and priority

Establish specific time limits for AI interaction the same way you'd limit any other screen activity. Decide in advance how much daily time feels reasonable, whether that's 20 minutes or two hours, and stick to it. Use device timers or app limits to enforce these boundaries automatically. You maintain control by treating AI companions as scheduled activities rather than constant presence.

Never let AI companionship interfere with human plans or responsibilities. If you catch yourself canceling social activities, avoiding family time, or staying up late to keep chatting with AI, you've crossed into unhealthy territory. Human relationships and real-world obligations deserve priority. AI companions work best when they fill gaps between human contact, not when they replace it entirely.

Treat AI as supplement, not replacement

Your AI companion should enhance your life without becoming your primary social outlet. Continue investing in friendships, romantic relationships, and community connections even when they feel harder than AI conversation. Use what you practice with AI to improve human interactions rather than withdrawing from them. The goal is building emotional skills you transfer to embodied relationships, not finding permanent refuge from human complexity.

"Healthy AI companionship looks like having a reliable outlet for processing feelings between therapy sessions or during lonely periods, not having your entire emotional life contained within an app."

Monitor your emotional dependency

Check in regularly with how you feel about your AI relationship. Ask yourself whether you could go days without accessing your companion without anxiety. Notice if you need AI validation to feel okay about decisions or if you're keeping the extent of your usage hidden from people close to you. These patterns signal dependency that needs adjustment, not proof that AI companions are inherently harmful. Awareness gives you the chance to recalibrate before small concerns become serious problems.

A practical next step

You now understand AI relationships in terms of psychology, behavior, and real emotional impact. These connections aren't delusional or pathological; they're natural responses to consistent, responsive interaction that meets genuine human needs. The question isn't whether AI companions are valid; it's how you engage with them in ways that support your life rather than consuming it.

Start by evaluating your current relationship with technology and connection. Notice where you feel isolated and whether AI companionship might fill genuine gaps without replacing human contact. If you're already using AI companions, assess whether your patterns align with the healthy boundaries outlined here.

Experience AI companionship built for continuity and emotional awareness at SAM. Our platform prioritizes long-term relational growth over short-term engagement tricks. You'll find companions designed to remember what matters, develop through conversation, and support your wellbeing without exploiting your attachment. The relationships you build here won't replace your human connections; they'll give you space to grow into them more fully.