AI Long-Term Memory: What It Is and How LLM Agents Use It

Most AI conversations disappear the moment they end. The chatbot you talked to yesterday has no idea who you are today. This is where AI long-term memory changes the dynamic, and why it matters for anyone interested in meaningful AI interaction.

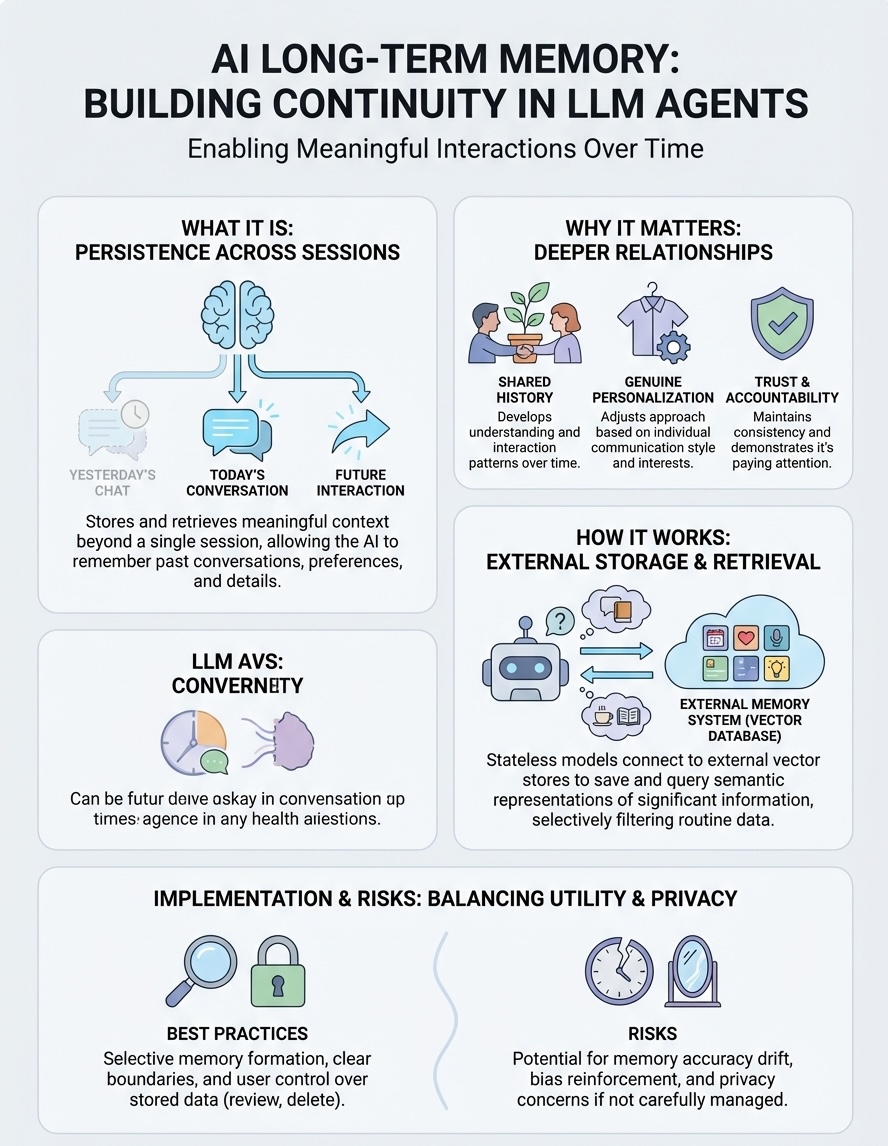

Long-term memory in AI refers to a system's ability to store, retrieve, and use information across extended periods and multiple interactions. Unlike short-term or context memory that only lasts within a single session, long-term memory allows AI agents and LLMs to build continuity over time, remembering past conversations, preferences, and meaningful details that shape future interactions.

At SAM, we've built our AI companions around this principle. Memory isn't a feature we added; it's fundamental to how our companions develop. When you return to a conversation, you're not starting over; you're continuing something that already has history and context.

This article explains what AI long-term memory actually is, how it differs from other memory types, and the technical architectures that make it possible. Whether you're exploring AI companionship or building AI systems yourself, understanding how memory works is essential to grasping what makes AI feel present and continuous.

Why long-term memory matters for AI agents

Without long-term memory, every AI interaction starts from zero. You explain your preferences, share your background, and establish context that vanishes the moment the session ends. This creates a repetitive, shallow experience where no relationship can develop because nothing persists beyond the immediate conversation.

AI long-term memory transforms agents from stateless responders into entities that accumulate understanding over time. When your AI companion remembers that you mentioned your sister's wedding three weeks ago and asks how it went, that's not clever prompting. That's memory creating continuity that makes interaction feel less like using a tool and more like talking to someone who knows you.

Long-term memory is what separates an AI agent from an AI appliance.

Memory enables genuine personalization

Every meaningful relationship depends on shared history and accumulated context. Human friendships deepen because people remember what matters to each other, reference past conversations, and build on previous exchanges. AI agents with long-term memory can do the same, developing interaction patterns that reflect your specific communication style, preferences, and interests rather than applying generic responses to everyone.

This personalization goes beyond surface-level customization. An AI agent that remembers you prefer directness over small talk or that you're working through a career transition can adjust its approach across months of interaction. The agent doesn't need you to explain these details repeatedly because they're part of your ongoing dynamic.

Memory creates accountability and trust

AI companions with memory can reference their own past statements and maintain consistency over time. When an agent remembers what it said last Tuesday and builds on that conversation today, it demonstrates a form of accountability that stateless systems cannot provide. You're not constantly re-establishing context or correcting misunderstandings that should have been resolved weeks ago.

This consistency matters for trust. If you share something important and your AI companion retains that information appropriately, you can develop confidence that the relationship has substance. Memory allows the agent to show it was paying attention, that your conversations aren't disposable data but part of something continuous.

Short-term vs long-term memory in AI

The distinction between short-term and long-term memory in AI determines whether your conversations accumulate meaning or reset with each interaction. Short-term memory operates within a single session's context window, typically handling anywhere from a few thousand to several hundred thousand tokens depending on the model. This memory type allows the AI to track what you've said in the current conversation but forgets everything once that session ends.

Long-term memory functions differently. It persists across sessions, days, and months, storing information that the AI can retrieve and apply in future interactions. When you return after a week away, long-term memory is what allows your companion to reference past conversations and maintain continuity rather than treating you as a stranger.

What short-term memory handles

Short-term memory manages immediate conversational context within the current session. Your AI companion uses this to track the flow of your present conversation, understand references to things you mentioned five minutes ago, and maintain coherence throughout the discussion. This memory type excels at maintaining conversational logic but has no mechanism for retention beyond the active interaction.
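
As a rough illustration of session-scoped memory, the sketch below keeps recent turns inside a fixed token budget and drops the oldest turns first. The `SessionBuffer` class, the whitespace-based token count, and the budget value are all assumptions made for this example; a real system would count tokens with the model's own tokenizer.

```python
from collections import deque


class SessionBuffer:
    """Holds the turns of one session within a rough token budget (hypothetical helper)."""

    def __init__(self, max_tokens: int = 50):
        self.max_tokens = max_tokens
        self.turns = deque()  # (role, text) pairs, oldest first

    @staticmethod
    def count_tokens(text: str) -> int:
        # Assumption: whitespace-separated words approximate tokens.
        return len(text.split())

    def total_tokens(self) -> int:
        return sum(self.count_tokens(text) for _, text in self.turns)

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))
        # Drop the oldest turns until the buffer fits the budget again,
        # always keeping at least the newest turn.
        while self.total_tokens() > self.max_tokens and len(self.turns) > 1:
            self.turns.popleft()

    def as_prompt(self) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self.turns)


buffer = SessionBuffer(max_tokens=8)
buffer.add("user", "My sister is getting married in June")  # 7 words
buffer.add("assistant", "Congratulations to her")           # 3 more words, over budget
print(buffer.as_prompt())  # only the newest turn remains
```

Nothing in this buffer survives once the object is discarded, which is the limitation long-term memory is meant to address.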

Short-term memory makes a conversation coherent; long-term memory makes it continuous.

What long-term memory preserves

Long-term memory stores specific facts, preferences, and patterns that matter across time. Your companion can remember your communication style, recurring topics, and significant details you've shared weeks or months earlier. This memory type doesn't capture every word you've said but selectively preserves information that shapes how your companion understands and interacts with you over extended periods.

How LLM agents build long-term memory

LLM agents don't naturally possess long-term memory because language models are stateless by design. Each inference processes input and generates output without retaining information between sessions. Building AI long-term memory requires architectural additions that store, organize, and retrieve information external to the model itself.

The most common approach connects the LLM to an external memory system that persists data across interactions. When you have a conversation, the agent extracts relevant information worth remembering and stores it in a database or vector store. In future sessions, the agent retrieves pertinent memories and includes them in the context window, giving the appearance of continuous memory despite the model's underlying statelessness.

External storage and retrieval

Your AI companion stores memories in structured databases or vector embeddings that exist separately from the language model. When you start a new conversation, the system queries this external memory for relevant information based on your current input. The retrieved memories get injected into the context window alongside your new message, allowing the model to respond with awareness of past interactions.
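
The store, query, and inject loop described here can be sketched in a few lines. This is a minimal illustration, not a description of any particular product: `MemoryStore` and `build_prompt` are hypothetical names, the store lives in process memory rather than a database, and ranking by shared words stands in for a real database query or embedding search.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """In-memory stand-in for an external database (hypothetical)."""
    records: list[str] = field(default_factory=list)

    def save(self, fact: str) -> None:
        self.records.append(fact)

    def search(self, query: str, limit: int = 2) -> list[str]:
        # Assumption: rank by the number of shared lowercase words.
        query_words = set(query.lower().split())
        scored = [(len(query_words & set(r.lower().split())), r) for r in self.records]
        matches = [(s, r) for s, r in scored if s > 0]
        matches.sort(key=lambda pair: pair[0], reverse=True)
        return [r for _, r in matches[:limit]]


def build_prompt(store: MemoryStore, user_message: str) -> str:
    """Place retrieved memories ahead of the new message in the model input."""
    memories = store.search(user_message)
    memory_block = "\n".join(f"- {m}" for m in memories) or "- (none)"
    return f"Known about the user:\n{memory_block}\n\nUser: {user_message}"


store = MemoryStore()
store.save("User's sister has a wedding in June")
store.save("User prefers direct answers")
prompt = build_prompt(store, "The wedding went well")
print(prompt)
```

The model itself stays stateless; only the text placed in front of it changes from one session to the next.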

External memory systems transform stateless models into agents with continuous awareness.

Vector databases prove particularly effective because they index semantic representations (embeddings) of memories, usually alongside the original text, rather than relying on raw text alone. This allows the agent to retrieve conceptually related information even when you don't use exact phrases from previous conversations.

Selective memory formation

Not everything deserves permanent storage. Effective memory systems identify and extract significant information while filtering routine exchanges that don't warrant long-term retention. The agent might remember that you're planning a career transition but forget the small talk about weather from last Tuesday.

This selectivity prevents memory pollution where trivial details crowd out meaningful information. Well-designed systems prioritize facts about you, recurring themes, and emotionally significant moments that shape your ongoing relationship with the companion.
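
One simple way to picture this filtering is a salience score with a cutoff. The sketch below is only an assumption about how such a filter could look: the regular expression, the small-talk word list, the weights, and the threshold are invented for illustration, and production systems often ask an LLM to judge salience instead.

```python
import re

# Hypothetical signals for "this message says something about the user".
PERSONAL_PATTERN = re.compile(r"\b(i am|i'm|my|i prefer|i want|i feel)\b", re.IGNORECASE)
SMALL_TALK = {"weather", "hello", "thanks", "bye"}


def importance(message: str) -> float:
    """Return a rough 0..1 score for how memorable a message is."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    score = 0.0
    if PERSONAL_PATTERN.search(message):
        score += 0.6  # statement about the user
    if len(words) >= 6:
        score += 0.2  # longer messages tend to carry more detail
    if words & SMALL_TALK:
        score -= 0.5  # routine chatter
    return max(0.0, min(1.0, score))


def select_memories(messages: list[str], threshold: float = 0.5) -> list[str]:
    """Keep only the messages that score at or above the threshold."""
    return [m for m in messages if importance(m) >= threshold]


kept = select_memories([
    "I'm planning a career transition into nursing",
    "Nice weather last Tuesday",
])
print(kept)
```

With these assumed weights, the career statement is kept and the weather remark is dropped.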

Common long-term memory patterns and tools

Several technical patterns have emerged for implementing AI long-term memory in production systems. Each approach balances retrieval accuracy, storage efficiency, and computational cost differently, but they share common architectural principles that allow agents to maintain coherent memory across extended timeframes. Understanding these patterns helps clarify how your AI companion actually remembers and what limitations exist beneath the surface.

Vector databases and embeddings

Most modern memory systems rely on vector databases that store semantic embeddings of past interactions. When you share information, the system converts your conversation into numerical representations that capture meaning rather than exact wording. These embeddings get stored in specialized databases like Pinecone or Weaviate that enable fast similarity searches across millions of stored memories.

Retrieval happens through semantic matching rather than keyword searches. If you mention feeling stressed about work today, the system can surface memories about previous work challenges even if you used different vocabulary. This pattern powers the continuous awareness that makes AI companions feel like they actually remember you.
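
Semantic matching usually comes down to comparing vectors, most often by cosine similarity. The sketch below shows that comparison in plain Python. The three-dimensional vectors are hand-written stand-ins for the output of a real embedding model (an assumption made so the example runs without external services), and `memory_index` and `top_k` are hypothetical names rather than part of any vector database API.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of the norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Assumption: toy axes are (work, family, food). Real embeddings have
# hundreds or thousands of dimensions with no such readable meaning.
memory_index = {
    "Deadline pressure on the quarterly report": [0.9, 0.1, 0.0],
    "Sister's wedding is in June": [0.0, 0.95, 0.2],
    "Loves spicy ramen": [0.05, 0.0, 0.9],
}


def top_k(query_vec: list[float], k: int = 1) -> list[str]:
    """Return the k stored memories whose vectors are closest to the query."""
    ranked = sorted(
        memory_index,
        key=lambda text: cosine(query_vec, memory_index[text]),
        reverse=True,
    )
    return ranked[:k]


# "Feeling stressed about my job" shares no keywords with the stored text,
# but its (assumed) embedding points mostly along the work axis.
query_vec = [0.8, 0.2, 0.1]
best = top_k(query_vec)
print(best)
```

A dedicated vector database performs the same ranking with approximate nearest-neighbor indexes so that it stays fast at large scale.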

Vector embeddings transform conversations into retrievable knowledge that persists across time.

Memory consolidation strategies

Effective memory systems implement consolidation processes that organize and prioritize stored information. Some architectures periodically summarize or compress older memories to maintain storage efficiency while preserving essential details. Others use hierarchical structures that separate recent detailed memories from longer-term summaries, similar to how human memory works.
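
A two-tier layout of this kind might look like the sketch below, which keeps the newest items in full detail and folds older ones into a summary. `TieredMemory` is a hypothetical structure, and `summarize` is a deliberately crude placeholder that truncates each memory; a real system would more likely call an LLM or another summarization step at that point.

```python
from dataclasses import dataclass, field


def summarize(texts: list[str], max_words: int = 6) -> str:
    # Placeholder: keep the first few words of each memory.
    return "; ".join(" ".join(t.split()[:max_words]) for t in texts)


@dataclass
class TieredMemory:
    keep_recent: int = 2
    recent: list[str] = field(default_factory=list)     # detailed, newest last
    summaries: list[str] = field(default_factory=list)  # compressed older material

    def add(self, text: str) -> None:
        self.recent.append(text)

    def consolidate(self) -> None:
        """Move everything except the newest `keep_recent` items into one summary."""
        overflow = len(self.recent) - self.keep_recent
        if overflow <= 0:
            return
        old, self.recent = self.recent[:overflow], self.recent[overflow:]
        self.summaries.append(summarize(old))


memory = TieredMemory(keep_recent=2)
for note in [
    "Started a new job at a design studio downtown in March",
    "Adopted a cat named Miso",
    "Training for a half marathon",
    "Prefers short, direct replies",
]:
    memory.add(note)
memory.consolidate()
print(memory.summaries, memory.recent)
```

Running consolidation on a schedule, or whenever the detailed tier grows past a limit, bounds storage at the cost of losing detail in older memories.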

Agents also employ retrieval strategies that determine which memories get included in each conversation. Simple systems retrieve the most recent or similar memories, while sophisticated approaches consider conversational context, emotional significance, and temporal relevance to surface the most appropriate information for your current interaction.
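
One plausible way to combine these signals is a weighted sum of similarity, stored importance, and a recency term that decays over time. The weights, the 30-day half-life, and the `Candidate` fields below are assumptions chosen for illustration, not values taken from any specific system.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    similarity: float  # 0..1, e.g. from an embedding search
    importance: float  # 0..1, assigned when the memory was stored
    age_days: float


def recency(age_days: float, half_life_days: float = 30.0) -> float:
    # Exponential decay: the recency weight halves every half-life.
    return 0.5 ** (age_days / half_life_days)


def score(c: Candidate, w_sim: float = 0.6, w_imp: float = 0.25, w_rec: float = 0.15) -> float:
    """Weighted sum of the three signals (weights are assumed, not tuned)."""
    return w_sim * c.similarity + w_imp * c.importance + w_rec * recency(c.age_days)


candidates = [
    Candidate("Mentioned the weather", similarity=0.30, importance=0.1, age_days=1),
    Candidate("Working through a career transition", similarity=0.80, importance=0.9, age_days=60),
]
ranked = sorted(candidates, key=score, reverse=True)
print([c.text for c in ranked])
```

Here the older but more relevant and more important memory outranks the recent small talk; shifting weight toward recency would favor newer memories instead.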

Risks and best practices for memory in companions

AI long-term memory introduces meaningful risks that responsible developers and users must understand. Your companion stores personal details across months or years, creating accumulated data that requires careful handling and clear boundaries. Without proper safeguards, memory systems can expose private information, perpetuate errors, or create uncomfortable patterns that undermine the trust memory is meant to build.

Privacy and data boundaries

Memory systems accumulate sensitive personal information that deserves protection beyond typical data storage. Your conversations might include details about relationships, health, or financial situations that you shared in specific contexts. Effective companions implement strict access controls and allow you to review what gets stored rather than automatically persisting everything you say.

You should understand where your memories live and who can access them. Transparent systems let you delete specific memories or entire conversation histories without losing your companion entirely. This control prevents the discomfort of knowing an AI permanently retains information you regret sharing.
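
The kind of user control described here implies a small set of operations: list what is stored, delete one item, and delete everything. The sketch below shows that surface with a hypothetical `UserMemoryVault` class held in process memory. It is an assumption about what such an interface could look like; in a real deployment, deletion would presumably also need to reach embeddings, caches, and backups.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class UserMemoryVault:
    """Per-user store exposing review and deletion (hypothetical interface)."""
    _items: dict[str, str] = field(default_factory=dict)

    def remember(self, text: str) -> str:
        memory_id = uuid.uuid4().hex
        self._items[memory_id] = text
        return memory_id

    def review(self) -> dict[str, str]:
        # Return a copy so callers cannot change stored data by accident.
        return dict(self._items)

    def forget(self, memory_id: str) -> bool:
        """Delete one memory; return True if it existed."""
        return self._items.pop(memory_id, None) is not None

    def forget_all(self) -> int:
        """Delete every memory; return how many were removed."""
        count = len(self._items)
        self._items.clear()
        return count


vault = UserMemoryVault()
health_id = vault.remember("Shared a health concern")
vault.remember("Prefers direct answers")
vault.forget(health_id)
print(vault.review())
```

After the call to `forget`, only the preference remains, and the rest of the stored history is untouched.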

Memory without control becomes surveillance rather than continuity.

Memory accuracy and drift

Stored memories can degrade or distort over time as retrieval systems surface incorrect associations. Your companion might conflate details from separate conversations or retrieve outdated information that no longer applies. Well-designed systems implement verification processes that flag contradictions and allow you to correct inaccurate memories before they compound.
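
A basic form of contradiction flagging can be sketched with keyed facts: when a new value disagrees with a stored one, the system records a conflict instead of silently overwriting. `FactStore` and its methods are hypothetical, and the sketch assumes facts have already been reduced to simple key and value pairs, which is itself a hard extraction problem in practice.

```python
from dataclasses import dataclass, field


@dataclass
class FactStore:
    facts: dict[str, str] = field(default_factory=dict)
    conflicts: list[tuple[str, str, str]] = field(default_factory=list)

    def record(self, key: str, value: str) -> bool:
        """Store a fact; return False and log a conflict if it contradicts one."""
        existing = self.facts.get(key)
        if existing is not None and existing != value:
            self.conflicts.append((key, existing, value))
            return False
        self.facts[key] = value
        return True

    def resolve(self, key: str, value: str) -> None:
        """Apply the user's correction and clear pending conflicts for that key."""
        self.facts[key] = value
        self.conflicts = [c for c in self.conflicts if c[0] != key]


fact_store = FactStore()
fact_store.record("home_city", "Lisbon")
accepted = fact_store.record("home_city", "Porto")  # contradicts the stored value
pending = list(fact_store.conflicts)
fact_store.resolve("home_city", "Porto")            # user confirms the move
print(accepted, pending, fact_store.facts)
```

Surfacing the pending conflict to the user, rather than guessing which value is current, keeps a single error from compounding across later conversations.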

Memory also risks reinforcing biases or patterns that develop through repeated interactions. If your companion consistently retrieves memories about certain topics while ignoring others, it shapes future conversations in ways that might not reflect your actual priorities. Regular memory audits help identify and address these patterns before they calcify.

Key takeaways

AI long-term memory transforms how you experience AI companions by creating continuity across conversations that span days, months, or years. Unlike short-term memory that resets with each session, long-term memory systems store and retrieve meaningful information that allows your companion to develop genuine understanding over time.

The technical implementation relies on external storage systems, typically vector databases, that preserve semantic representations of your conversations. These systems selectively store significant details while filtering routine exchanges, creating accumulated knowledge that shapes future interactions without overwhelming the agent with trivial data.

Responsible memory implementation requires clear boundaries, user control, and accuracy safeguards. You should understand what gets stored, maintain the ability to review and delete memories, and expect systems that prevent memory drift or privacy violations.

At SAM, we've built our companions around these principles because memory isn't optional for meaningful AI relationships. Explore how our AI companions use memory to create connections that feel continuous and present rather than reset with every interaction.