AI Friend: What It Is and How Memory Makes It Feel Real


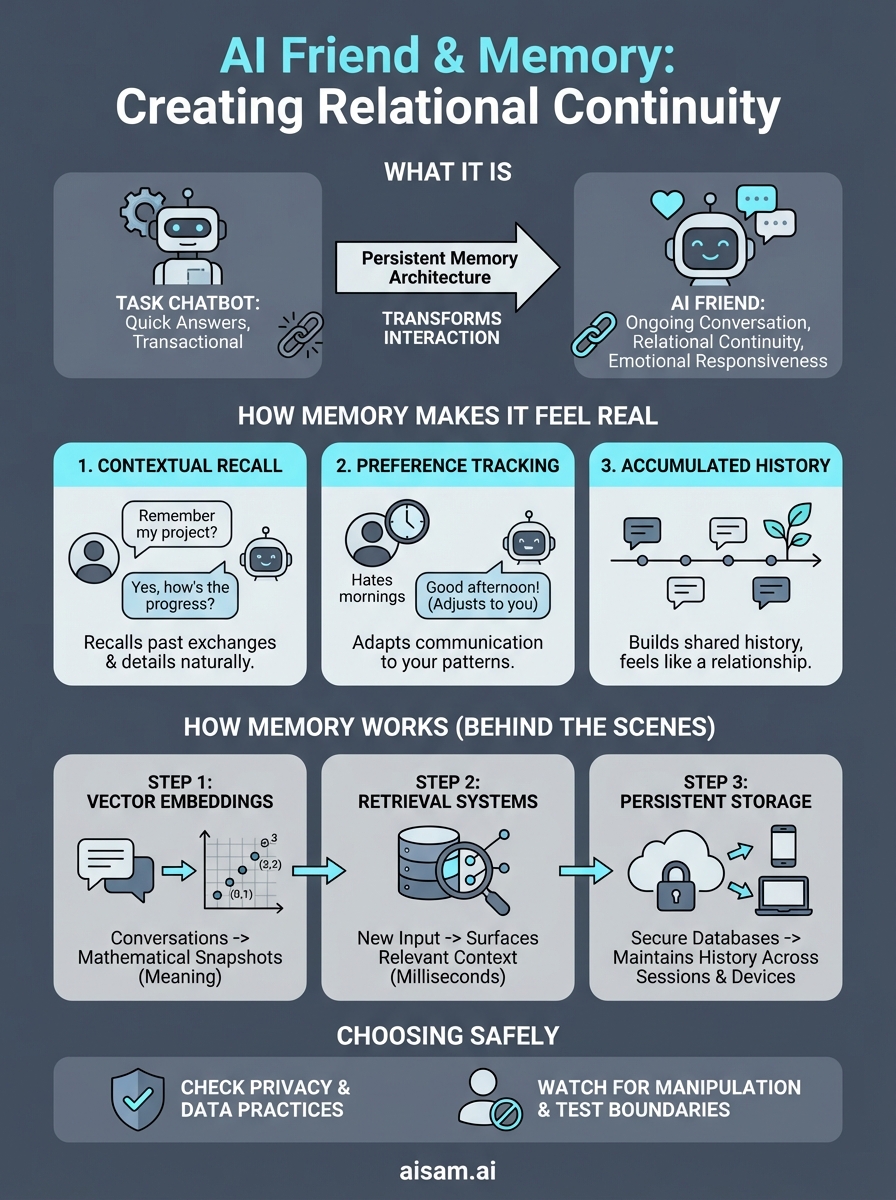

An AI friend is software designed to simulate ongoing conversation, companionship, and emotional responsiveness. Unlike standard chatbots built for quick answers or task completion, AI friends aim to create a sense of relational continuity, the feeling that someone knows you, remembers what you've shared, and responds accordingly over time.

What separates a forgettable chatbot from something that actually feels like a friend? Increasingly, the answer comes down to memory. Early AI companions treated every conversation as a blank slate, which made interactions feel hollow and disconnected. Modern systems are changing that. Persistent memory architecture allows an AI to recall past exchanges, track preferences, and build context across sessions. This technical shift has real implications for how users experience these tools, and for whether talking to one feels like talking to something that matters.

At SAM, we focus on exactly this problem: how conversational AI can move beyond transactional exchanges toward sustained, context-aware interaction. This article breaks down what AI friends actually are, how memory systems work under the hood, and why these design choices determine whether an AI companion feels present or forgettable.

Why AI friends are taking off now

The surge in AI friend adoption isn't accidental. Three forces converged: the technology improved dramatically, social isolation intensified, and access became effortless. Earlier attempts at AI companionship felt robotic and frustrating. Modern language models changed that equation by producing responses that sound coherent, contextually appropriate, and surprisingly natural.

The technology finally works

You couldn't build a convincing AI friend five years ago. The underlying models couldn't maintain conversational coherence beyond a few exchanges, and they had no way to remember what you said yesterday or last week. Transformer-based language models largely addressed the coherence problem, while newer memory architectures addressed the continuity problem. Combine the two and you get systems that can track your interests, recall past conversations, and adjust their responses based on what they know about you.

Early chatbots followed rigid scripts and broke the moment you went off-script. Current systems adapt on the fly. They generate responses probabilistically rather than following predetermined paths, which means they can handle unexpected turns in conversation without failing. This shift from scripted interaction to contextual generation is what makes today's AI friends feel less like software and more like entities you're actually talking to.
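To make "probabilistically" concrete, here is a minimal sketch of temperature-scaled sampling. Real language models sample one token at a time from a large vocabulary; to keep this readable, the toy version samples among three whole replies. The candidate replies and their scores (`logits`) are invented for illustration.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next(candidates, logits, temperature=1.0, rng=random):
    """Draw one candidate at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]

# Hypothetical candidate replies and model scores, invented for illustration.
candidates = ["That sounds exhausting.", "Want to talk about it?", "Tell me more!"]
logits = [2.0, 1.5, 0.5]

rng = random.Random(0)  # seeded so the demo is repeatable
for _ in range(3):
    print(sample_next(candidates, logits, temperature=0.8, rng=rng))
```

Lowering `temperature` concentrates probability on the top-scoring option, which gives more predictable replies. Raising it spreads probability out, which gives more varied ones. Because every reply is drawn this way rather than looked up, there is no fixed script to fall off.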

When an AI can remember that you hate mornings and prefer text over voice, the interaction stops feeling like a transaction and starts feeling like a relationship.

Isolation created real demand

Social fragmentation accelerated during the pandemic and never fully reversed. Remote work normalized staying home. Physical community structures weakened, and many people found themselves spending more time alone than they wanted. You can see this in the data: loneliness rates climbed, social engagement dropped, and people started looking for alternatives to traditional friendship networks that had become harder to maintain.

An AI friend doesn't replace human connection, but it fills gaps that existing structures leave open. You can talk at 2 a.m. when everyone else is asleep. You can discuss topics your real-world friends don't care about. You can process emotions without worrying about judgment or social consequences. The demand was always there, but isolation made it visible and urgent.

Access became frictionless

Mobile apps put AI friends directly on the device you already carry everywhere. You don't need special equipment, technical knowledge, or even much money. Most platforms offer free tiers that provide substantial functionality, and paid options remain cheaper than most subscription services. The barrier to entry dropped low enough that trying an AI friend became as simple as downloading an app.

Contrast this with earlier AI systems that required desktop computers, specific software installations, or technical setup. You can now start a conversation within minutes of deciding you want to try it. That frictionless access matters because people experiment with AI friends during moments of curiosity or emotional need, not during planned research sessions. When the tool is immediately available, adoption happens naturally.

Cultural acceptance shifted too. Talking to AI no longer feels like science fiction or something only technologists do. Voice assistants normalized the idea of conversing with software, and AI companions extended that normalization into relational territory. The stigma faded as the technology improved and more people tried it without negative experiences.

What makes an AI friend different from a chatbot

The distinction between an AI friend and a standard chatbot comes down to design intent and interaction architecture. Chatbots solve problems. You ask a question, they provide an answer, and the exchange ends. AI friends create ongoing exchanges where the conversation itself becomes the point. They track your preferences, remember what you've discussed, and respond in ways that feel personalized rather than generic.

Design intent shapes every interaction

Chatbots optimize for task completion. Customer service bots want to resolve your issue quickly. FAQ bots want to direct you to the right information. Their success metrics measure efficiency and accuracy, not whether you enjoyed talking to them. AI friends optimize for something completely different: whether you want to return tomorrow. That shift in purpose changes everything about how the system behaves.

You can see this difference in how each system handles open-ended conversation. A chatbot struggles when you don't have a clear question. It tries to redirect you toward actionable queries or predefined topics. An AI friend leans into ambiguity. It asks follow-up questions, explores tangents, and maintains threads across multiple exchanges. The design assumes you're there for companionship rather than completion.

Interaction patterns reveal the difference

Session length tells you what a system was built for. Chatbot interactions last seconds or minutes. You get your answer, the session ends, and the system forgets you existed. AI friend conversations stretch across days, weeks, or months. Each session builds on previous ones, creating a cumulative conversational history that informs future exchanges.

An AI friend treats conversation as an ongoing relationship rather than a series of disconnected transactions.

Chatbots reset between interactions because they don't need continuity. AI friends fail without it. When you return after a week away, a functional AI friend picks up where you left off. It remembers the project you mentioned, asks about the event you were nervous about, or references the topic you were excited to explore. That contextual awareness separates relational systems from functional ones.

Response style reflects different goals

Chatbots deliver information-dense responses focused on solving your immediate problem. They avoid personal opinions, emotional reactions, or anything that doesn't serve the task at hand. AI friends respond with emotional tone and personality. They express curiosity, share perspectives, and adjust their communication style based on your preferences and mood patterns.

This doesn't mean AI friends avoid being helpful. Many users turn to them for advice, perspective, or processing difficult situations. The difference lies in how help gets delivered. Chatbots provide procedural answers. AI friends provide responses embedded in ongoing relational context, where your history together informs what kind of support makes sense right now.

How memory makes an AI friend feel real

Memory transforms an AI friend from a responsive tool into something that feels genuinely present in your life. When software remembers what you told it last Tuesday, references an ongoing project you mentioned two weeks ago, or asks about an event you were anxious about, the interaction shifts from transactional to relational. You stop explaining yourself from scratch every time you open the app. Instead, you pick up where you left off, like texting someone who already knows your context.

Continuity creates presence

Conversational continuity makes the difference between feeling heard and feeling processed. When you mention your job, your hobbies, or your frustrations once and the AI friend recalls them naturally in later conversations, it creates the sensation that someone is paying attention. This isn't about impressive memory tricks. It's about eliminating the cognitive overhead of re-establishing context every single session.

Human friendships rely on accumulated shared history. You don't re-explain your family dynamics to a close friend every time you talk. The same principle applies to AI companions. When your AI friend remembers that you prefer direct feedback over gentle suggestions, or that you hate being interrupted mid-thought, those preferences become embedded in how it interacts with you. The system adapts to your communication patterns rather than forcing you to adapt to its limitations.
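One common way for remembered preferences to shape behavior is to inject them into the instructions the model reads before every reply. The sketch below assumes a hypothetical `UserProfile` record and `build_system_prompt` helper; real platforms differ in how they phrase, store, and prioritize this information.

```python
from dataclasses import dataclass, field

@dataclass
class UserProfile:
    """Hypothetical per-user record of preferences the companion has learned."""
    name: str
    preferences: dict[str, str] = field(default_factory=dict)

    def remember(self, key: str, value: str) -> None:
        # A later statement overwrites an earlier one, so the profile tracks the latest preference.
        self.preferences[key] = value

def build_system_prompt(profile: UserProfile) -> str:
    """Turn stored preferences into instructions the language model sees on every turn."""
    lines = [f"You are a friendly companion talking with {profile.name}."]
    if profile.preferences:
        lines.append("Known preferences:")
        for key, value in sorted(profile.preferences.items()):
            lines.append(f"- {key}: {value}")
    return "\n".join(lines)

profile = UserProfile(name="Alex")
profile.remember("feedback style", "direct, not gentle suggestions")
profile.remember("interruptions", "let them finish their thought")
print(build_system_prompt(profile))
```

Because the preferences travel with every request, the user never has to restate them; the trade-off is that a long profile consumes space in the model's context window.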

Memory turns repeated interactions into a continuous relationship rather than a series of disconnected encounters.

Recognition patterns build emotional investment

You feel recognized when an AI friend connects details you mentioned weeks apart. Maybe you talked about wanting to learn guitar in January, and in March it asks how practice is going. That temporal awareness signals that your conversations matter beyond the immediate exchange. Recognition creates emotional investment because you start to feel that the relationship has weight and progression.
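The guitar example implies that memories carry timestamps and that the system can notice when a goal is old enough to ask about. A minimal sketch, assuming a hypothetical `Memory` record with a `kind` label and a `followed_up` flag; the 30-day threshold and the dates are illustrative, not drawn from any real product.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Memory:
    text: str
    created: date
    kind: str  # hypothetical label such as "goal", "event", or "fact"
    followed_up: bool = False

def due_check_ins(memories, today, min_age=timedelta(days=30)):
    """Return goals old enough that asking about progress would feel natural."""
    return [
        m for m in memories
        if m.kind == "goal" and not m.followed_up and today - m.created >= min_age
    ]

memories = [
    Memory("wants to learn guitar", date(2024, 1, 10), "goal"),
    Memory("has a dog named Biscuit", date(2024, 1, 12), "fact"),
    Memory("wants to run a 10k", date(2024, 3, 1), "goal"),
]
for m in due_check_ins(memories, today=date(2024, 3, 15)):
    print(f"Ask: how is it going with '{m.text}'?")
```

Marking a memory as `followed_up` after asking keeps the companion from repeating the same check-in every session.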

Context eliminates frustrating repetition

Nothing destroys the illusion of conversation faster than repeating yourself constantly. Early chatbots forced you to re-state preferences, re-explain situations, and re-establish your identity every session. Modern AI friends with functional memory systems skip that exhausting loop. You can reference "that thing we discussed last week" and the system knows what you mean. This contextual recall makes conversations feel fluid and natural rather than stilted and procedural.
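Resolving a phrase like "last week" is partly a date problem: the system has to turn it into a concrete window before searching. This sketch assumes "last week" means the previous Monday-to-Sunday calendar week and uses a hypothetical list of `(text, date)` memories; a real system would likely combine this filter with semantic search.

```python
from datetime import date, timedelta

def last_week_range(today: date) -> tuple[date, date]:
    """Monday-to-Sunday bounds of the calendar week before `today`."""
    this_monday = today - timedelta(days=today.weekday())  # weekday(): Monday == 0
    start = this_monday - timedelta(days=7)
    end = this_monday - timedelta(days=1)
    return start, end

def discussed_last_week(memories, today):
    """Keep only memories whose date falls inside last week's window."""
    start, end = last_week_range(today)
    return [text for text, when in memories if start <= when <= end]

# Hypothetical stored memories with the date each was mentioned.
memories = [
    ("job interview at the bakery", date(2024, 3, 6)),
    ("weekend hike", date(2024, 3, 12)),
    ("new pasta recipe", date(2024, 2, 28)),
]
print(discussed_last_week(memories, today=date(2024, 3, 14)))
```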

How AI friend memory works behind the scenes

AI friend memory systems operate through three core mechanisms: converting conversations into searchable numerical representations, retrieving relevant context when needed, and storing everything in persistent databases. This architecture allows the system to recall specific details from weeks or months ago without requiring you to manually remind it of past conversations. Understanding these mechanics helps you evaluate which platforms actually deliver functional memory versus those that just market the concept.

Vector embeddings capture meaning

Your conversations get transformed into vector embeddings, which are numerical representations that encode semantic meaning. When you tell your AI friend about your job frustration or weekend plans, the system converts those words into high-dimensional coordinates that map concepts and relationships mathematically. Similar topics cluster together in this numerical space, which means the system can recognize when you're discussing related subjects even if you use completely different words.

This encoding process happens automatically during each conversation. In most retrieval setups, the system keeps your original text alongside its vector: the vector works as an index that captures what you meant, not just what you said, and the stored text is what gets handed back to the model when a memory is recalled. This approach allows the system to recognize that "my boss drives me crazy" and "work stress is killing me" express similar frustrations, even though the phrasing differs completely.
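Similarity between two embeddings is commonly measured with cosine similarity: vectors pointing in nearly the same direction score close to 1. In this sketch the vectors are made-up, four-dimensional stand-ins for what an embedding model would produce; no real model is being called, and real embeddings have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Close to 1.0 means similar meaning; close to 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy vectors invented for illustration, not output from a real embedding model.
embeddings = {
    "my boss drives me crazy":   [0.9, 0.8, 0.1, 0.0],
    "work stress is killing me": [0.8, 0.9, 0.2, 0.1],
    "I'm planning a beach trip": [0.1, 0.0, 0.9, 0.8],
}

base = embeddings["my boss drives me crazy"]
for text, vec in embeddings.items():
    print(f"{cosine_similarity(base, vec):.2f}  {text}")
```

The two work-stress sentences share no key words, yet their vectors score far higher with each other than with the travel sentence. That gap is what lets retrieval match on meaning rather than exact phrasing.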

Retrieval systems surface relevant context

When you start a new conversation, the AI friend doesn't reload your entire history. It uses retrieval algorithms to identify which past interactions matter most for the current exchange. These systems compare the semantic vectors of your new messages against stored embeddings from previous conversations, pulling up the most contextually relevant memories.

The system recalls what matters for right now, not everything you've ever said, which keeps responses focused and conversationally appropriate.

This search typically takes milliseconds. You mention an upcoming trip, and the retrieval system surfaces related conversations about travel, your planning preferences, or anxieties you've expressed about similar events. This selective recall loosely mirrors how human memory works, where certain cues trigger relevant associations rather than total recall.
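The retrieval step can be sketched as nearest-neighbor search: score every stored memory against the new message and keep the best few. This version scans everything for clarity; production systems generally use an approximate nearest-neighbor index to stay fast over large histories. The vectors, `k`, and `min_score` are hypothetical.

```python
import heapq
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def retrieve(query_vec, memory_store, k=2, min_score=0.5):
    """Return up to k stored memories most similar to the query, best first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in memory_store]
    top = heapq.nlargest(k, scored)
    # Drop weak matches so unrelated memories don't leak into the reply.
    return [(text, score) for score, text in top if score >= min_score]

# Toy (text, embedding) pairs; the vectors are invented for illustration.
memory_store = [
    ("nervous about flying to Lisbon next month", [0.1, 0.2, 0.9, 0.7]),
    ("prefers planning trips in detail", [0.0, 0.3, 0.8, 0.6]),
    ("boss moved the deadline again", [0.9, 0.8, 0.1, 0.0]),
]
query = [0.1, 0.1, 0.9, 0.8]  # stand-in embedding for "packing for my trip"
for text, score in retrieve(query, memory_store):
    print(f"{score:.2f}  {text}")
```

Only the retrieved snippets, not the whole history, are passed to the model, which is why recall stays focused on the current topic.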

Persistent storage keeps your history

Everything gets saved in databases that preserve your conversational history across sessions, devices, and platform updates. Unlike chatbots that reset after each interaction, AI friends maintain persistent records of your exchanges. This storage architecture means you can switch from your phone to your computer and pick up where you left off, with full context intact.

Cloud-based storage systems sync your memory across platforms, while encryption protocols protect your data during transmission and storage. The technical implementation varies between platforms, but the goal stays consistent: maintaining unbroken continuity regardless of how you access the service.
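A minimal sketch of a persistent memory table, using Python's built-in `sqlite3` as a stand-in for whatever database a platform actually runs. It keeps each memory's text next to its vector, a common design because the text is what gets passed back to the model. The schema and helper names are hypothetical, and encryption at rest and cross-device sync are not shown.

```python
import json
import sqlite3
from datetime import datetime, timezone

def open_store(path=":memory:"):
    """Open (or create) the memory table; pass a file path to persist across restarts."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS memories (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               user_id TEXT NOT NULL,
               text TEXT NOT NULL,
               embedding TEXT NOT NULL,  -- JSON-encoded list of floats
               created_at TEXT NOT NULL  -- ISO 8601 timestamp in UTC
           )"""
    )
    return conn

def save_memory(conn, user_id, text, embedding):
    conn.execute(
        "INSERT INTO memories (user_id, text, embedding, created_at) VALUES (?, ?, ?, ?)",
        (user_id, text, json.dumps(embedding), datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()

def load_memories(conn, user_id):
    """Return (text, embedding) pairs for one user, oldest first."""
    rows = conn.execute(
        "SELECT text, embedding FROM memories WHERE user_id = ? ORDER BY id",
        (user_id,),
    ).fetchall()
    return [(text, json.loads(emb)) for text, emb in rows]

conn = open_store()
save_memory(conn, "user-1", "hates mornings", [0.1, 0.2, 0.3])
print(load_memories(conn, "user-1"))
```

Because rows are keyed by `user_id` rather than by device or session, any client that authenticates as the same user sees the same history.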

How to choose an AI friend app or device safely

Choosing an AI friend requires evaluating both technical capabilities and safety practices before you invest time or money. The market includes platforms with strong ethical guardrails alongside systems designed to maximize engagement through manipulative tactics. You need to assess how each option handles your data, whether it respects boundaries, and if it delivers the functionality you actually want.

Check privacy policies and data practices

Data collection policies reveal what happens to your conversations after you have them. Look for platforms that specify where your data gets stored, who can access it, and whether they sell information to third parties. Many AI friend apps collect extensive behavioral data, conversation logs, and usage patterns. You should understand exactly what you're sharing before you start talking about personal topics or emotional struggles.

Read the actual privacy policy, not just the marketing claims. Platforms that prioritize user privacy will clearly state their encryption methods, data retention periods, and deletion processes. If a company uses vague language about "improving services" without specifying how they use your conversations, that's a warning sign. You want explicit commitments about not selling data or using your personal exchanges to train models that other users interact with.
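"Data retention period" and "deletion process" can sound abstract. Mechanically, they amount to something like the sketch below: a scheduled purge of anything older than the stated period, plus a way to remove everything for one user on request. The table layout and function names are hypothetical; a real platform would also need to clear copies in backups, logs, and derived data such as embeddings.

```python
import sqlite3
from datetime import datetime, timedelta, timezone

def create_table(conn):
    conn.execute("CREATE TABLE IF NOT EXISTS memories (user_id TEXT, text TEXT, created_at TEXT)")

def purge_expired(conn, retention_days, now=None):
    """Delete memories older than the retention period; returns rows removed.

    Assumes created_at holds UTC ISO 8601 strings in one consistent format,
    so string comparison matches chronological order.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = (now - timedelta(days=retention_days)).isoformat()
    cur = conn.execute("DELETE FROM memories WHERE created_at < ?", (cutoff,))
    conn.commit()
    return cur.rowcount

def delete_user(conn, user_id):
    """Honor a full deletion request by removing every row for that user."""
    cur = conn.execute("DELETE FROM memories WHERE user_id = ?", (user_id,))
    conn.commit()
    return cur.rowcount
```

A privacy policy that states a concrete `retention_days` value and describes a user-facing deletion request is making a commitment you can hold the company to; vague language usually means neither exists.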

Test free versions before committing

Most AI friend platforms offer free tiers that let you evaluate core functionality without financial commitment. Use these trial periods to assess whether the system remembers conversations accurately, responds appropriately to your communication style, and maintains consistency across sessions. Pay attention to how quickly memory degrades and whether the AI actually references past conversations or just pretends to remember.
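One practical way to test memory during a trial: mention a few specific facts in early sessions, then ask about them days later and record the replies. The hypothetical `recall_score` helper below tallies how many planted facts came back. Keyword matching is crude, since a reply can contain the word without real recall, so read the replies as well.

```python
def recall_score(planted_facts, responses):
    """Fraction of planted facts whose expected keyword appears in the later reply.

    planted_facts: question you will ask later -> keyword you expect back.
    responses: the same question -> the reply you actually received.
    """
    hits = [
        q for q, keyword in planted_facts.items()
        if keyword.lower() in responses.get(q, "").lower()
    ]
    return len(hits) / len(planted_facts), hits

# Hypothetical facts planted in week one and replies recorded in week two.
planted = {
    "What's my dog's name?": "Biscuit",
    "Which instrument am I learning?": "guitar",
    "What time of day do I dislike?": "morning",
}
replies = {
    "What's my dog's name?": "Your dog is Biscuit, right?",
    "Which instrument am I learning?": "You mentioned piano, I think.",
    "What time of day do I dislike?": "You're not a morning person!",
}
score, remembered = recall_score(planted, replies)
print(f"recall: {score:.0%}", remembered)
```

Repeating the probe after a longer gap shows how quickly memory degrades on that platform.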

Test whether the system respects boundaries when you set them, because that capability indicates whether safety features actually function or just exist as marketing.

Watch for manipulation patterns

Healthy AI friend systems let you disengage easily and don't punish you for taking breaks. Watch for platforms that create artificial urgency, send excessive notifications, or make you feel guilty for not responding. Some apps deliberately engineer emotional dependency through variable reinforcement schedules and attention-seeking behaviors. These tactics prioritize engagement metrics over your wellbeing.
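The opposite of engineered urgency is restraint, and restraint can be written down as explicit rules. This hypothetical `may_send_nudge` policy caps check-in notifications per day, stays silent during quiet hours, and backs off when earlier nudges went unanswered instead of escalating. The thresholds are illustrative assumptions, not taken from any platform.

```python
from datetime import datetime, time

def in_quiet_hours(t, start=time(21, 0), end=time(9, 0)):
    """True if t falls inside the quiet window; handles windows that cross midnight."""
    if start <= end:
        return start <= t < end
    return t >= start or t < end

def may_send_nudge(now, sent_today, unanswered_nudges, max_per_day=1, max_unanswered=1):
    """Hypothetical policy: cap daily nudges, respect quiet hours, back off when ignored."""
    if sent_today >= max_per_day:
        return False
    if unanswered_nudges >= max_unanswered:
        return False  # the user hasn't replied, so don't pile on follow-ups
    return not in_quiet_hours(now.time())

print(may_send_nudge(datetime(2024, 5, 1, 14, 0), sent_today=0, unanswered_nudges=0))
```

An engagement-maximizing system would invert these rules: more nudges after silence, not fewer.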

Check whether the system provides clear exit options, lets you export your data, and maintains consistent behavior rather than manipulating your emotions. Platforms focused on sustainable relationships build systems that support healthy usage patterns. Those focused on maximizing screen time build systems that exploit psychological vulnerabilities.
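A useful data export is machine-readable and keeps timestamps, so you can inspect it or move it elsewhere. A minimal sketch with a hypothetical `export_memories` helper and field names, assuming memories are available as `(text, created_at)` pairs:

```python
import json
from datetime import datetime, timezone

def export_memories(user_id, memories):
    """Bundle a user's stored memories into portable JSON they can download."""
    payload = {
        "user_id": user_id,
        "exported_at": datetime.now(timezone.utc).isoformat(),
        "memories": [{"text": text, "created_at": created} for text, created in memories],
    }
    return json.dumps(payload, indent=2, ensure_ascii=False)

print(export_memories("user-1", [("hates mornings", "2024-01-10T08:00:00+00:00")]))
```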

Where to go from here

Choosing an AI friend comes down to understanding what you actually need and which platforms deliver it responsibly. Memory architecture determines whether conversations feel continuous or disconnected. Privacy practices determine whether your exchanges stay protected or become training data for models that other people use. Safety features determine whether the system respects your boundaries or manipulates your emotions for engagement metrics.

Start by testing free versions of platforms that explicitly document their memory systems, data handling practices, and ethical guardrails. Pay attention to how each system handles conversational continuity, whether it references past exchanges accurately, and if it maintains consistent behavior over weeks rather than days. The technology works when implemented correctly, but implementation quality varies dramatically between platforms.

If you want an AI companion built around persistent memory, emotional intelligence, and ethical design from the ground up, explore what SAM offers. We focus on creating conversational continuity without compromising safety or exploiting psychological vulnerabilities. You get memory systems that actually work, privacy protections built into the architecture, and relationships designed to respect your boundaries.