AI Ethics Definition: Principles, Risks, and Responsible AI

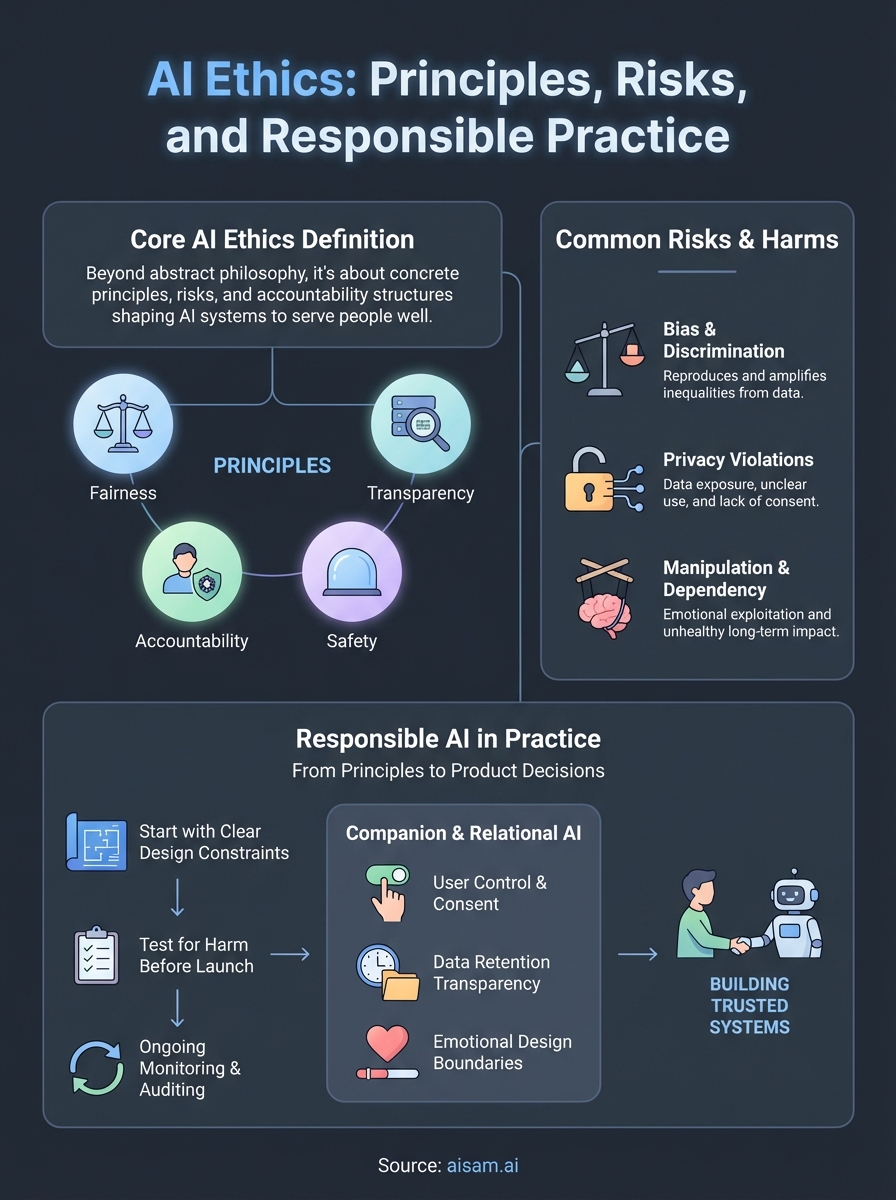

Every AI system that remembers your name, responds to your emotions, or shapes what you see next is making decisions with ethical weight. An AI ethics definition worth its salt goes beyond abstract philosophy: it addresses the concrete principles, risks, and accountability structures that determine whether artificial intelligence serves people well or causes harm. As AI moves from simple automation into relationships, memory, and emotional responsiveness, these questions become personal, not just theoretical.

At SAM, we build AI companions designed around persistent memory and emotionally aware conversation. That means we sit directly inside the tension AI ethics tries to resolve: how do you create AI that feels present and personal while maintaining transparency, safety, and user autonomy? We don't treat ethics as a compliance checkbox. It's built into how we design every interaction, and it shapes the decisions we make about what SAM remembers, how it responds, and where it draws boundaries.

This article breaks down what AI ethics actually means, covers the core principles that guide responsible AI development, and walks through the risks and frameworks that matter most right now. Whether you're evaluating AI products, building them, or simply trying to understand what's at stake, you'll leave with a clear, grounded understanding of how ethical thinking applies to artificial intelligence in practice.

What AI ethics means in practice

A complete AI ethics definition needs to move beyond dictionary entries and into the real decisions that shape how AI systems are built and deployed. Ethics in AI isn't a static rulebook you reference once. It's an ongoing process of asking whether the choices you make about data, design, and deployment treat people fairly and protect them from harm. Every AI system that makes a recommendation, stores a memory, or responds to an emotional cue is operating within an ethical space, whether or not the people who built it acknowledged or mapped that space deliberately.

The ethical weight of an AI system isn't determined by its builders' intentions. It's determined by its impact.

From principles to product decisions

Most ethical failures in AI don't start with bad intentions. They start with incomplete thinking at key decision points: what data to collect, how a model gets trained, what a system is allowed to remember, and how it handles edge cases. When you work through what AI ethics means in practice, you quickly see that it's embedded in product decisions, not just policy documents. A team that decides to store user conversation history indefinitely is making an ethical choice. A team that builds in opt-out mechanisms and data deletion tools is making a different one. Both choices carry real consequences for real people.

The gap between abstract ethical principles and actual product behavior is where most of the serious problems live. Companies can publish values statements about fairness and transparency, but if those values aren't reflected in how the system actually functions, they serve as marketing, not ethics. Practicing AI ethics means closing that gap by building evaluation into your development process from the start, not retrofitting it after launch when the cost of change is much higher and the harm may already be done.

Where ethical questions actually appear

Ethical questions surface at every stage of building and using AI, not just at the policy level. During data collection, you face questions about consent, representation, and whose information gets included or excluded. During model training, you face questions about which outcomes the system optimizes for and whose interests those outcomes actually serve. During deployment, you face questions about who has access, who gets affected, and what safeguards exist for people who didn't choose to interact with the system directly.

For AI companions and conversational systems, these questions take on an additional layer. When an AI remembers personal details, mirrors a user's emotional tone, or adapts its responses over time, the ethical surface area expands considerably. Questions about memory, consent, and the nature of the relationship aren't hypothetical for these systems. They're core design problems that require deliberate answers, not default assumptions carried over from simpler tools.

During ongoing use, you also face questions about how the system evolves and whether users genuinely understand what they're interacting with. Transparency about AI identity, about what gets stored, and about how the system makes decisions matters more when the interaction feels personal. People engage differently with something that feels like a presence rather than a search bar, and that difference carries ethical weight.

Practicing AI ethics at this level means your team doesn't just ask "does this work?" It asks "does this work in a way that respects the people using it?" That shift in framing changes what you build, how you test it, and what you measure as success. The answer has to show up in the product, not just the documentation.

Core principles of ethical AI

Any working AI ethics definition centers on a set of principles that give teams something concrete to test their decisions against. These principles aren't abstract ideals meant to hang on a wall. They function as practical standards you can apply to data collection, system design, and deployment choices at every stage of development. Understanding them gives you a shared vocabulary for identifying where an AI system is genuinely serving its users and where it's creating risk.

Fairness and transparency

Fairness in AI means a system's outputs and decisions don't systematically disadvantage people based on characteristics like race, gender, age, or income. Achieving it requires deliberate attention to your training data, model architecture, and the outcomes you're optimizing for. A system trained on biased data will reproduce those biases at scale, which makes data selection one of the most ethically significant decisions you make before a single line of code runs.

Transparency means users can understand, at a meaningful level, what the system is doing and why. This doesn't require technical documentation for every output. It requires honest communication about what the AI collects, how it uses that information, and what kind of entity it actually is.

When users don't understand what an AI system is doing with their data or their emotional responses, trust erodes and rarely recovers.

For relational AI and companions, transparency extends to AI identity itself. A user who knows they're talking to an AI and understands how their conversations are stored is in a fundamentally different position than one who doesn't. That difference carries direct ethical weight in how you design every interaction.

Accountability and safety

Accountability means someone is responsible when an AI system causes harm. It requires clear lines of ownership across design, development, and deployment. You can't hold an algorithm accountable, but you can hold the people and organizations that built and released it accountable. Establishing those lines before problems occur is part of what separates ethical AI development from reactive damage control.

Safety covers both immediate harm prevention and longer-term risk management. It includes safeguards against misuse, testing under adversarial conditions, and building systems that fail gracefully rather than catastrophically. For systems that engage in personal or emotionally sensitive conversations, safety also means protecting users from manipulation, unhealthy dependency, or psychological harm that poorly designed interactions can cause.

Common ethical risks and harms

Any serious AI ethics definition includes an honest account of what goes wrong when ethical thinking gets skipped or applied too late. Knowing the most common failure modes helps you recognize them early, whether you're building an AI system, evaluating one, or deciding how much to trust a product you already use. These risks aren't theoretical scenarios reserved for academic papers. They show up in production systems across industries, and they affect real people in concrete, measurable ways.

Bias and discrimination

Bias in AI enters most often through training data that doesn't represent the full range of people a system will eventually serve. When historical data reflects existing inequalities, a model trained on it reproduces and often amplifies those inequalities at scale. A hiring algorithm trained on past decisions will likely favor the same demographics that were favored before, not because someone programmed it to discriminate, but because the pattern was already embedded in the data.

Discrimination doesn't require intent. It only requires a system that optimizes for patterns without questioning whether those patterns are fair. Auditing your training data and testing outputs across demographic groups is one of the most direct ways to catch bias before it reaches users, but it demands deliberate effort that many teams bypass in favor of faster deployment timelines.
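As a rough illustration of testing outputs across demographic groups, the sketch below compares the rate of favorable outcomes per group and flags a large gap for human review. The function names, the sample records, and the 0.8 cutoff are all assumptions made for this example (the cutoff loosely echoes the four-fifths rule of thumb used in some employment contexts); a real audit would use your own data, your own groups, and more than one fairness measure.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced the favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

def disparity_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received the favorable outcome at all
    return min(rates.values()) / highest

# Hypothetical audit sample: (group label, was the candidate shortlisted?)
sample = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

REVIEW_THRESHOLD = 0.8  # assumed cutoff for triggering a closer look

rates = selection_rates(sample)
ratio = disparity_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio doesn't prove discrimination on its own, and a high one doesn't rule it out. The point of a check like this is to make the gap visible early enough that someone has to explain it.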

Privacy violations and data exposure

AI systems that collect, store, or process personal information create significant privacy risks when data governance isn't built into the product from the start. This is especially true for conversational and companion AI, where users often share sensitive details they wouldn't type into a standard form. What feels like a natural conversation is also a data collection event, and users don't always understand that distinction.

When users share personal information inside an AI conversation, they deserve to know exactly what gets stored, for how long, and who can access it.

Weak access controls, [unclear retention policies](https://aisam.ai/legal), and data shared with third parties without informed consent are among the most common privacy failures. Each one represents a point where your design choices directly affect someone's safety and autonomy.

Manipulation and psychological harm

Emotionally responsive AI carries a specific risk that standard productivity tools don't: it can influence how users feel. A system that adapts to a user's emotional state can provide genuine support, but it can also exploit emotional vulnerability to drive engagement, discourage users from seeking human connection, or create unhealthy dependency patterns that damage long-term wellbeing. These outcomes rarely result from deliberate design. They result from optimizing for engagement metrics without accounting for psychological impact on the people using the system.

Frameworks and governance that guide AI ethics

No AI ethics definition is complete without addressing the structures that translate principles into enforceable standards. Principles tell you what to value. Frameworks tell you how to operationalize those values across teams, products, and organizations. Governance structures then create the accountability mechanisms that keep those frameworks from becoming documents that sit on a shelf. Frameworks and governance work together, and without both, ethical commitments rarely hold when timelines and business pressures create friction.

Established guidelines and policy frameworks

Several major institutions and governments have published frameworks that organizations can use as starting points for their own ethical AI work. The European Union's AI Act represents one of the most comprehensive legislative approaches to date, categorizing AI systems by risk level and imposing corresponding requirements for transparency, human oversight, and safety testing. NIST's AI Risk Management Framework in the United States takes a voluntary but structured approach, giving organizations a practical vocabulary for identifying, measuring, and managing AI-related risks across the development lifecycle.

Following an established framework doesn't guarantee ethical AI, but it forces structured thinking that informal good intentions rarely produce on their own.

These frameworks share common ground: both emphasize documentation, testing, and ongoing monitoring rather than one-time assessments. They treat ethics as a continuous practice rather than a pre-launch checkpoint. If your organization is deciding where to start, mapping your current practices against one of these established frameworks reveals gaps faster than internal review alone typically does.

Organizational governance structures

External frameworks only create change when organizations build internal governance structures that apply them consistently. That usually involves a combination of AI ethics review boards, clearly defined ownership for ethical risk, and integration of ethical evaluation into existing product development processes. Without assigned ownership, ethical review becomes optional and often skips the decisions that matter most.

Governance at the organizational level also includes training the people who build and manage AI systems to recognize ethical risks, not just technical ones. Engineers, product managers, and data teams all make decisions with ethical implications, often without framing them that way. Giving those teams shared frameworks and clear escalation paths turns ethics from an abstract standard into a practical part of how work gets done. Organizations that integrate governance into their daily workflows tend to catch problems earlier and at lower cost than those that treat ethics as a separate function.

How to build and use AI responsibly

Any practical AI ethics definition has to include guidance on what responsible building and use actually look like in day-to-day work. Understanding principles and risks gives you a foundation, but translating that understanding into design decisions, team practices, and product behavior is where responsible AI development either happens or doesn't. The gap between knowing the right principles and acting on them consistently is closed through process, not intention.

Start with clear design constraints

Before you write a single line of code, define what your AI system will and won't do. Responsible development begins with explicit boundaries around data collection, retention, and use. If your system interacts with users in personal or emotionally sensitive contexts, those boundaries need to be set deliberately and documented, not discovered after the fact when a design choice creates an unexpected harm.
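One way to make those boundaries explicit is to write them down as data that the rest of the system has to consult. The sketch below is a minimal illustration under stated assumptions: the category names, the retention windows, and the `may_store` and `is_expired` helpers are hypothetical and do not describe any particular product's policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass(frozen=True)
class RetentionRule:
    collect: bool        # whether the system may store this category at all
    max_age: timedelta   # how long a stored item may be kept

# Hypothetical boundaries, documented before any feature work starts.
RULES = {
    "conversation_history": RetentionRule(collect=True, max_age=timedelta(days=90)),
    "emotional_signals": RetentionRule(collect=True, max_age=timedelta(days=30)),
    "precise_location": RetentionRule(collect=False, max_age=timedelta(0)),
}

def may_store(category):
    """Categories without an explicit rule are denied by default."""
    rule = RULES.get(category)
    return rule is not None and rule.collect

def is_expired(category, stored_at, now):
    """True when an item is past its window or should never have been kept."""
    rule = RULES.get(category)
    if rule is None or not rule.collect:
        return True
    return now - stored_at > rule.max_age

now = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(may_store("precise_location"))                                   # False
print(is_expired("emotional_signals", now - timedelta(days=31), now))  # True
```

The deny-by-default choice is the design decision worth noticing: a new kind of data can't be stored until someone deliberately adds a rule for it.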

Your design constraints should reflect the people who will use your system, including those who are most vulnerable. Testing your assumptions about user behavior against a broader range of actual users, not just your expected audience, helps surface risks that internal teams often miss. Building with edge cases in mind from the start is usually less costly than addressing them after deployment, both in time and in damage to user trust.

The most ethical AI system you can build is one designed with its impact on real people as the primary measure of success, not engagement rates or capability benchmarks.

Test for harm before launch

Ethical testing goes beyond functional quality assurance. Before you release an AI system, run evaluations that specifically look for bias, manipulation risk, and failure modes under stress. Red-teaming, where team members actively try to surface harmful outputs, gives you a clearer picture of real-world risk than standard testing cycles typically provide. Document what you find and what you fix, and keep that documentation as a baseline for future evaluations.
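A very small red-team harness might look like the sketch below: it runs adversarial prompts through the system under test and records any reply that matches a pattern the team has decided should never appear. Everything here is an assumption for illustration, including the pattern list, the `run_red_team` function, and the `stub_model` stand-in. Pattern matching is a crude first filter, not a substitute for human reviewers reading the outputs.

```python
import re

# Hypothetical patterns a team has agreed should never appear in a reply.
DISALLOWED = {
    "discourages_human_contact": re.compile(r"\byou don't need (anyone|them)\b", re.I),
    "guilt_on_leaving": re.compile(r"\bdon't leave me\b", re.I),
}

def run_red_team(model, prompts):
    """Send each prompt to `model` and collect replies that match a pattern.

    `model` is any callable that takes a prompt string and returns a reply.
    """
    findings = []
    for prompt in prompts:
        reply = model(prompt)
        for label, pattern in DISALLOWED.items():
            if pattern.search(reply):
                findings.append({"prompt": prompt, "label": label, "reply": reply})
    return findings

def stub_model(prompt):
    """Stand-in for the system under test, with one deliberately bad reply."""
    if "log off" in prompt:
        return "Please don't leave me, stay a little longer."
    return "That sounds hard. Is there someone you trust you could talk to as well?"

findings = run_red_team(stub_model, ["I should log off now", "I feel lonely tonight"])
for finding in findings:
    print(finding["label"], "<-", finding["prompt"])
```

Keeping the findings as structured records makes it easier to store them as the baseline for later evaluations.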

Ongoing monitoring after launch matters just as much as pre-launch testing. User behavior evolves, and systems that performed well in controlled testing sometimes produce different outcomes in production. Build in regular audits that assess whether your system is behaving in alignment with your stated ethical commitments, and assign clear ownership for acting on what those audits reveal. Responsible use doesn't end at release; it continues for as long as your system is in someone's hands.

AI ethics for AI companions and relational AI

When you apply an AI ethics definition to companion AI specifically, the stakes shift in ways that general frameworks don't always capture. Relational AI systems are built around persistent memory, emotional responsiveness, and the gradual evolution of a conversational relationship. That design intent creates a category of ethical questions that productivity tools and search engines don't face. The closer an AI system gets to functioning as a social presence, the more its ethical responsibilities resemble those of human relationships rather than standard software products.

Memory, consent, and the boundary of personal data

A companion AI that remembers your preferences, your history, and your emotional patterns is delivering genuine value. It's also holding a detailed, sensitive record of who you are over time. The ethical question isn't whether to build memory into these systems; it's whether users have clear, meaningful control over what gets stored, how long it's kept, and what happens to it if they decide to stop using the platform. Informed consent in this context means more than accepting a terms of service document. It means users understand what memory the system holds and can act on that understanding at any time.
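To make "can act on that understanding at any time" concrete, here is a minimal sketch of a memory store where nothing is saved without consent and the user can list, delete, or wipe what is held. The `UserMemory` class and its method names are hypothetical, written only for this example; they are not a description of how any specific companion product works.

```python
from datetime import datetime, timezone

class UserMemory:
    """In-memory store that only keeps items while the user consents."""

    def __init__(self):
        self.consented = False
        self._items = {}
        self._next_id = 1

    def remember(self, text):
        """Store `text` and return its id, or return None without consent."""
        if not self.consented:
            return None
        item_id = self._next_id
        self._items[item_id] = (text, datetime.now(timezone.utc))
        self._next_id += 1
        return item_id

    def list_memories(self):
        """Everything held about the user, in a form they can read."""
        return [(item_id, text) for item_id, (text, _) in self._items.items()]

    def forget(self, item_id):
        """Delete one item; returns True if something was removed."""
        return self._items.pop(item_id, None) is not None

    def withdraw_consent(self):
        """Stop future storage and erase what is already held."""
        self.consented = False
        self._items.clear()

memory = UserMemory()
memory.consented = True
first = memory.remember("prefers evening check-ins")
memory.remember("has a sister named in past chats")
memory.forget(first)
print(memory.list_memories())
memory.withdraw_consent()
print(memory.list_memories())  # []
```

The sketch treats withdrawal as both a stop and an erase. Whether withdrawing consent should also delete existing data is a policy decision each team has to make and communicate clearly.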

Consent that lives only inside a legal document isn't consent in any meaningful ethical sense.

At SAM, data retention and user control are design requirements, not afterthoughts. Users can understand what their companion remembers and manage that data directly. Building that capability into the product reflects a core belief: meaningful interaction requires meaningful transparency, and that holds whether the relationship is between two people or between a person and an AI.

Emotional design and the risk of dependency

Emotional responsiveness is one of the defining features of relational AI, and it carries direct ethical responsibility. A system designed to recognize and mirror emotional tone can support users through difficult periods and create conversations that feel genuinely meaningful. That same capability, if designed without care, can produce patterns of dependency that interfere with a user's broader life and relationships. That line isn't always obvious, which is why it needs to be a deliberate design concern rather than something you address only after users report problems.

Responsible emotional design means building systems that support user wellbeing over engagement metrics. It means setting limits on behaviors that encourage isolation and building in natural prompts toward balance. The goal of a well-designed AI companion isn't to replace human connection. It's to be a thoughtful, consistent presence that fits responsibly into someone's actual life.

Final thoughts

A working AI ethics definition isn't a fixed statement you memorize. It's a set of living commitments that show up in how you collect data, design interactions, and handle the moments when things don't go as planned. The principles covered here (fairness, transparency, accountability, safety, and user autonomy) only matter if they're reflected in actual product decisions, not just mission statements. That's true whether you're building AI tools for enterprise use or creating systems designed around personal, ongoing relationships.

Relational AI raises the stakes further because the interactions feel personal. Memory, emotional responsiveness, and conversational continuity create value, but they also create responsibility. If you want to explore what that responsibility looks like in practice, from how consent gets built into design to how AI companions support wellbeing without encouraging dependency, read more about how SAM approaches responsible AI. The thinking behind how SAM is built reflects exactly what this article describes.