AI Ethics And Responsible AI: Differences And Frameworks

When AI remembers your name, responds to your emotions, and builds a relationship with you over time, the conversation about AI ethics and responsible AI shifts from abstract theory to immediate necessity. At SAM, we build AI companions designed for long-term, emotionally aware interaction, which means these questions aren't optional for us. They're foundational to everything we create.

You're probably here because you want to understand what separates AI ethics from responsible AI, and how organizations actually put these concepts into practice. The terms often get used interchangeably, but they serve distinct purposes in shaping how AI systems are designed, deployed, and governed. Understanding the difference matters, especially as AI moves from task-based tools toward relational systems that interact with people on a deeper level.

This article breaks down both concepts, explains where they overlap and diverge, and walks through practical frameworks you can use to implement them. Whether you're building AI products, evaluating them, or simply trying to make sense of the conversation, you'll leave with clear definitions and actionable guidance to work from.

AI ethics vs responsible AI

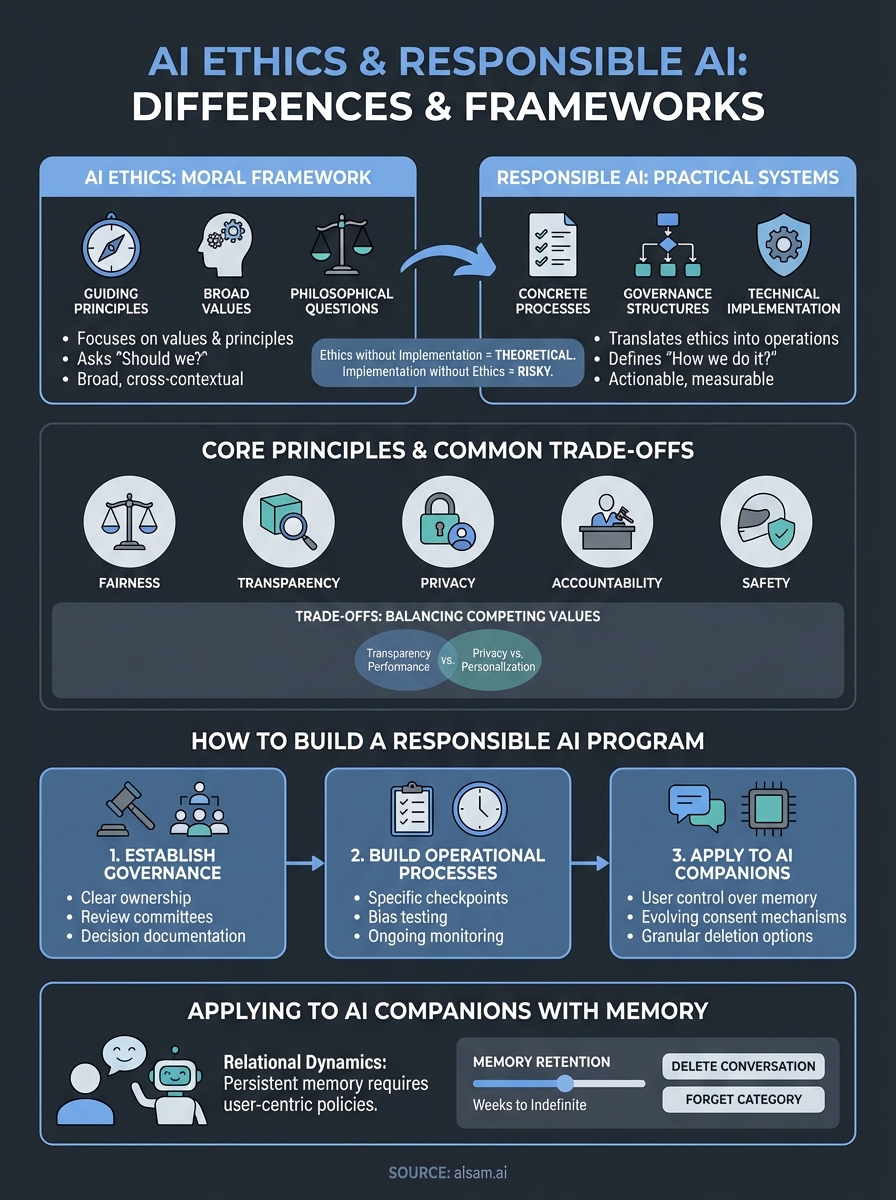

AI ethics and responsible AI operate at different levels of your organization, and understanding that distinction helps you build systems people can trust. AI ethics focuses on the principles and values that should guide your decisions when creating AI systems. It asks philosophical questions about fairness, autonomy, transparency, and harm. Responsible AI translates those ethical principles into concrete processes, governance structures, and technical implementations that your teams actually use. One lives in the realm of values, the other in the realm of operations.

The conceptual difference

AI ethics provides the moral framework you use to evaluate whether your system should exist in the first place. It addresses questions like whether your AI should make life-altering decisions for people, how you balance competing values when they conflict, and what rights users deserve when interacting with your system. These principles come from philosophy, human rights frameworks, and societal values. They're intentionally broad because they need to apply across different contexts and cultures.

Responsible AI takes those principles and builds practical systems around them. You create documentation requirements, design review processes, implement bias testing protocols, and establish accountability mechanisms. When AI ethics and responsible AI work together, your ethical commitments become embedded in how your engineers write code, how your product managers define features, and how your leadership makes strategic decisions. Ethics without implementation remains theoretical. Implementation without ethics risks building the wrong thing efficiently.

The gap between ethical principles and operational practice is where many AI failures happen.

How they work together in practice

Your ethics framework might state that users deserve transparency about AI decision-making. Responsible AI then determines how you deliver that transparency. Do you provide plain-language explanations? Do you show confidence scores? Do you give users the ability to contest decisions? These choices require technical capabilities, design thinking, and organizational commitment. Implementation reveals where your stated values meet reality.
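
To make the transparency questions above concrete, here is a minimal Python sketch built around a hypothetical `Decision` record (the class, its fields, and the `explain` and `contest` methods are illustrative assumptions, not part of any real library). It pairs a plain-language explanation with a confidence score and gives the user a way to contest the outcome.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """Hypothetical record of one AI decision, kept so it can be explained and contested."""

    decision_id: str
    outcome: str
    reasons: list[str]  # plain-language reasons written for users, not model internals
    confidence: float  # model confidence in [0, 1]
    contested: bool = False
    contest_notes: list[str] = field(default_factory=list)

    def explain(self) -> str:
        """Plain-language explanation that also surfaces the confidence score."""
        why = "; ".join(self.reasons) if self.reasons else "no reasons recorded"
        return f"Outcome: {self.outcome}. Why: {why}. Confidence: {self.confidence:.0%}."

    def contest(self, note: str) -> None:
        """Record the user's dispute so a human reviewer can pick it up."""
        self.contested = True
        self.contest_notes.append(note)


decision = Decision("d-001", "suggested a break", ["the conversation has run for over three hours"], 0.9)
print(decision.explain())
decision.contest("I was working on something important")
```

Keeping the contest flag on the record itself means a dispute cannot be silently dropped; a review queue can simply filter for contested decisions.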

Consider an AI companion with memory. Your ethical principle might be that users maintain control over their personal data. Responsible AI means building the delete functionality, creating clear consent flows, encrypting stored conversations, and establishing internal policies about who can access user data and under what circumstances. You document these decisions, train your team on them, and create mechanisms to verify they're actually happening. The principle guides the direction, but the operational systems make it real.

Both dimensions matter equally when you're building systems that interact with people over time. Ethics without responsibility produces inspiring mission statements that don't translate to user protection. Responsibility without ethics creates compliance theater where you check boxes but miss the deeper implications of what you're building. You need both working in tandem.

Why AI ethics and responsible AI matter

Your AI system will either earn trust or destroy it, and there's no middle ground when people share personal information with something that remembers them. The consequences of getting AI ethics and responsible AI wrong extend beyond bad press or regulatory fines. You risk genuine harm to the people who use your system, and that harm compounds when your AI maintains long-term relationships with users. Every conversation adds context, every interaction builds dependency, and every memory creates vulnerability.

The stakes for relational AI systems

Traditional AI tools operate in discrete moments. You ask a question, receive an answer, and move on. Relational AI systems create continuous threads of interaction where past conversations inform future responses. This persistence magnifies both the potential benefits and the potential risks. When your AI companion remembers that someone is struggling with anxiety, going through a divorce, or celebrating a major achievement, that memory becomes powerful. It can provide meaningful support, or it can be turned against the user through manipulation, exploitation, or a data breach.

Systems that remember you carry responsibilities that transaction-based AI never faces.

The technical decisions you make today about data retention, consent mechanisms, and transparency will determine whether users feel supported or surveilled. Your choices about how AI responds to emotional disclosure affect whether people develop healthy or unhealthy interaction patterns. These aren't hypothetical concerns. People form genuine attachments to AI companions, share intimate details, and integrate these systems into their daily routines. When trust breaks, whether through a data breach, unexpected behavior change, or discovery of hidden surveillance, the impact goes beyond frustration. It becomes betrayal.

Regulation will eventually catch up, but waiting for external pressure means you're building on unstable ground. You need frameworks now that protect users, guide your team's decisions, and create accountability before problems emerge.

Core principles and common trade-offs

Implementing AI ethics and responsible AI requires understanding the core principles that guide decision-making and the inevitable tensions that arise when those principles collide. You can't optimize for everything simultaneously, and recognizing where trade-offs exist helps you make intentional choices rather than accidental ones. The principles themselves remain fairly consistent across frameworks, but how you prioritize them reveals what your organization truly values.

Fundamental principles

Five principles form the foundation of most ethical AI frameworks. Fairness means your system treats people equitably across different groups and doesn't perpetuate historical biases. Transparency requires that people understand how your AI makes decisions and what data it uses. Privacy protects personal information and gives users control over their data. Accountability establishes clear responsibility when something goes wrong. Safety ensures your system doesn't cause harm through its outputs or behaviors.

These principles sound straightforward until you try implementing all of them simultaneously in a real product. Each principle demands resources, technical capabilities, and design compromises. You'll find yourself making choices about which principles take precedence in specific situations.

Common trade-offs you'll face

Transparency often conflicts with the quality of the experience. Explaining why your AI companion remembered a specific detail might require exposing underlying patterns that reduce the naturalness of the interaction. You balance user understanding against conversational flow, knowing that excessive explanation can damage the relational dynamic you're trying to create.

Perfect transparency can destroy the experience you're building, while perfect opacity destroys trust.

Privacy competes with personalization. Your AI companion becomes more helpful as it remembers more context, but extensive memory increases privacy risk and data vulnerability. You choose retention windows, anonymization strategies, and consent granularity based on how you weight these competing values.

Safety competes with openness. Safety measures like content filtering can limit authentic expression and reduce the system's ability to respond appropriately to sensitive topics. Each safeguard you add constrains what your AI can discuss, potentially making it less helpful to users who need nuanced support.

How to build a responsible AI program

Building a responsible AI program requires concrete infrastructure that supports ethical decision-making at every stage of development. You need governance structures, documented processes, and accountability mechanisms that actually function when your team faces difficult choices. The framework you create today determines whether AI ethics and responsible AI remain aspirational statements or become operational reality. Most organizations fail not because they lack good intentions, but because they lack the systems to translate those intentions into consistent action.

Establish governance and accountability

Start by assigning clear ownership for responsible AI decisions within your organizational structure. You need someone who can stop a product launch if ethical concerns emerge, and that person requires sufficient authority to make those calls stick. Create a review committee that includes diverse perspectives: engineers, ethicists, legal counsel, and people who represent your user base. This group evaluates new features, conducts risk assessments, and establishes standards that your teams must meet before deployment.

Document who makes decisions, what criteria they use, and how you handle disagreements. Your governance structure fails if people can bypass it when timelines get tight or when executive pressure mounts.

The test of your governance structure isn't how it operates when things go smoothly, but whether it holds when someone powerful wants an exception.
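
One way to make a governance structure hard to bypass is to encode the sign-off rules in the release tooling itself. The sketch below assumes a hypothetical `LaunchReview` record and a hypothetical set of required roles mirroring the committee described above. Launch is allowed only when every role has approved and the named owner has not blocked it, and there is deliberately no override flag for deadline or executive pressure.

```python
from dataclasses import dataclass, field

# Hypothetical committee composition; substitute your own review roles.
REQUIRED_ROLES = {"engineering", "ethics", "legal", "user_advocate"}


@dataclass
class LaunchReview:
    feature: str
    owner: str  # the named person with authority to stop the launch
    approvals: dict[str, str] = field(default_factory=dict)  # role -> reviewer
    block_reason: str = ""

    def approve(self, role: str, reviewer: str) -> None:
        if role not in REQUIRED_ROLES:
            raise ValueError(f"unknown review role: {role}")
        self.approvals[role] = reviewer

    def block(self, requested_by: str, reason: str) -> None:
        """Only the owner can block, and the reason must be documented for later audits."""
        if requested_by != self.owner:
            raise PermissionError(f"{requested_by} is not the launch owner")
        if not reason.strip():
            raise ValueError("a block needs a documented reason")
        self.block_reason = reason

    def missing_roles(self) -> set[str]:
        return REQUIRED_ROLES - set(self.approvals)

    def can_launch(self) -> bool:
        # No override path: every role must sign off and the owner must not have blocked.
        return not self.block_reason and not self.missing_roles()
```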

Build operational processes that scale

Translate your principles into specific checkpoints throughout your development cycle. Require bias testing before any model that affects users goes into production. Mandate privacy impact assessments for features that handle personal data. Create documentation templates that force teams to articulate how their work aligns with your ethical commitments. These processes need clear pass/fail criteria, not subjective evaluations that dissolve under deadline pressure.
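
As an example of a checkpoint with explicit pass/fail criteria, this sketch gates a model on demographic parity: the gap in positive-prediction rate between groups. Demographic parity is only one of several fairness metrics, and both the choice of metric and the `MAX_PARITY_GAP` threshold of 0.10 are assumptions for illustration; `selection_rates` and `bias_gate` are hypothetical helpers.

```python
from collections import defaultdict

# Hypothetical limit; the right metric and threshold depend on your product and context.
MAX_PARITY_GAP = 0.10


def selection_rates(groups: list[str], predictions: list[int]) -> dict[str, float]:
    """Fraction of positive (1) predictions within each group."""
    if len(groups) != len(predictions):
        raise ValueError("groups and predictions must be the same length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def bias_gate(groups: list[str], predictions: list[int]) -> tuple[bool, float]:
    """Pass only if the largest selection-rate gap between groups stays within the limit."""
    rates = selection_rates(groups, predictions)
    if not rates:
        raise ValueError("no predictions to evaluate")
    gap = max(rates.values()) - min(rates.values())
    return gap <= MAX_PARITY_GAP, gap
```

Returning the measured gap alongside the verdict lets the release record show how close a model came to the limit, not just whether it passed.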

Establish ongoing monitoring after deployment. Your system changes as users interact with it, and continuous evaluation catches problems before they become crises. Schedule regular audits, track metrics that matter for responsible AI, and create feedback loops that bring user concerns back to your development teams quickly.
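
Post-deployment monitoring can start as simply as comparing each tracked metric against the baseline recorded at launch or at the last accepted audit. This sketch uses hypothetical metric names and a hypothetical `MetricCheck` class, assumes lower values are better for every metric, and treats missing data as an alert in its own right, since a silent monitoring gap is itself a risk.

```python
from dataclasses import dataclass


@dataclass
class MetricCheck:
    name: str
    baseline: float  # value measured at launch or at the last accepted audit
    tolerance: float  # allowed relative increase, e.g. 0.2 means 20%

    def breached(self, current: float) -> bool:
        """True when the metric has risen more than `tolerance` above its baseline."""
        return current > self.baseline * (1 + self.tolerance)


def weekly_review(checks: list[MetricCheck], observed: dict[str, float]) -> list[str]:
    """Return alert messages to route back to the development team."""
    alerts = []
    for check in checks:
        value = observed.get(check.name)
        if value is None:
            alerts.append(f"{check.name}: no data (monitoring gap)")
        elif check.breached(value):
            alerts.append(f"{check.name}: {value} vs baseline {check.baseline}")
    return alerts
```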

Applying these ideas to AI companions with memory

AI companions with persistent memory present unique challenges that require adapting standard frameworks to address relational dynamics. Your system doesn't just process transactions; it builds ongoing relationships where every interaction adds context and creates expectations. Applying AI ethics and responsible AI to this domain means addressing questions that traditional AI tools never face: how long memories should persist, what happens when someone wants to forget, and how you maintain trust when the AI knows intimate details about someone's life.

Memory retention and deletion

Design your retention policies around user control rather than technical convenience. Give people granular options to delete specific conversations, entire memory categories, or their complete interaction history. You need mechanisms that actually remove data from storage, not just hide it from the user interface. Build expiration windows for different types of information based on sensitivity and relevance. Casual conversations might fade after weeks, while explicitly saved memories persist until the user decides otherwise.
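
The retention rules above can be sketched as category-based expiry windows plus deletion operations that remove records outright rather than hiding them. The categories, window lengths, and the `MemoryStore` class are hypothetical, and a dictionary stands in for a real database. In a production system, deletion would also have to reach backups, caches, and derived data such as search indexes, which this sketch does not model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical retention windows; None means "keep until the user deletes it".
RETENTION = {
    "casual": timedelta(weeks=4),
    "emotional": timedelta(weeks=12),
    "saved": None,
}


@dataclass
class Memory:
    memory_id: str
    category: str
    text: str
    created_at: datetime


class MemoryStore:
    """Stand-in for a real database; deletions remove records instead of hiding them."""

    def __init__(self) -> None:
        self._records: dict[str, Memory] = {}

    def add(self, memory: Memory) -> None:
        if memory.category not in RETENTION:
            raise ValueError(f"unknown category: {memory.category}")
        self._records[memory.memory_id] = memory

    def delete(self, memory_id: str) -> None:
        self._records.pop(memory_id, None)

    def delete_category(self, category: str) -> int:
        doomed = [m.memory_id for m in self._records.values() if m.category == category]
        for memory_id in doomed:
            del self._records[memory_id]
        return len(doomed)

    def delete_all(self) -> None:
        self._records.clear()

    def purge_expired(self, now: datetime) -> int:
        """Remove every memory whose category window has elapsed."""
        doomed = [
            m.memory_id
            for m in self._records.values()
            if RETENTION[m.category] is not None and now - m.created_at > RETENTION[m.category]
        ]
        for memory_id in doomed:
            del self._records[memory_id]
        return len(doomed)

    def ids(self) -> set[str]:
        return set(self._records)
```

Running `purge_expired` on a schedule turns the retention policy into something the system enforces rather than something the team has to remember.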

Create clear boundaries around what your system remembers automatically versus what requires explicit consent. Users deserve to know whether emotional disclosures get stored differently than routine exchanges, and they need simple ways to modify those preferences as their comfort level changes.
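
The split between automatic and consent-gated memory can be expressed as a small preference object. This sketch assumes hypothetical category names and a hypothetical `ConsentPrefs` class: routine details are stored by default, emotional disclosures require an explicit opt-in, unknown categories fall back to the cautious mode, and the user's most recent choice always wins.

```python
from dataclasses import dataclass, field

# Hypothetical defaults: routine details are remembered automatically,
# emotional disclosures need an explicit opt-in before they are stored.
DEFAULT_MODES = {"routine": "auto", "emotional": "opt_in"}


@dataclass
class ConsentPrefs:
    modes: dict[str, str] = field(default_factory=lambda: dict(DEFAULT_MODES))
    opted_in: set[str] = field(default_factory=set)
    opted_out: set[str] = field(default_factory=set)

    def set_opt_in(self, category: str, allowed: bool) -> None:
        """Record the user's latest choice, replacing any earlier one."""
        if allowed:
            self.opted_in.add(category)
            self.opted_out.discard(category)
        else:
            self.opted_out.add(category)
            self.opted_in.discard(category)

    def may_store(self, category: str) -> bool:
        if category in self.opted_out:
            return False
        mode = self.modes.get(category, "opt_in")  # unknown categories get the cautious default
        return mode == "auto" or category in self.opted_in
```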

The power to remember everything comes with the obligation to forget on request.

Consent and ongoing control

Implement consent mechanisms that evolve with the relationship. Initial onboarding establishes baseline permissions, but your AI companion should periodically revisit consent as memory accumulates and interaction patterns deepen. Surface these conversations naturally rather than forcing disruptive consent flows that break the relational dynamic. Users need visibility into what's stored without requiring them to audit logs manually. Build interfaces that show memory categories, retention periods, and access controls in language that makes sense to people who aren't technical experts.
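
Two pieces of the paragraph above lend themselves to a sketch: deciding when to revisit consent, and describing stored memory in plain language. Both functions are hypothetical, and the 90-day interval and doubling threshold are assumptions for illustration, not recommendations.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical thresholds for when to check in about consent again.
REVISIT_AFTER = timedelta(days=90)
GROWTH_FACTOR = 2.0  # check in once stored memories have doubled since the last consent


def should_revisit_consent(last_consent: datetime, memories_at_consent: int,
                           memories_now: int, now: datetime) -> bool:
    """True when enough time has passed or stored memory has grown substantially."""
    if now - last_consent >= REVISIT_AFTER:
        return True
    baseline = max(memories_at_consent, 1)  # avoid dividing by zero for new users
    return memories_now / baseline >= GROWTH_FACTOR


def memory_summary(counts: dict[str, int],
                   retention_days: dict[str, Optional[int]]) -> list[str]:
    """Plain-language lines a settings screen could show instead of raw logs."""
    lines = []
    for category, count in sorted(counts.items()):
        noun = "memory" if count == 1 else "memories"
        days = retention_days[category]
        kept = "with no expiry date" if days is None else f"for {days} days"
        lines.append(f"{count} {category} {noun}, kept {kept}")
    return lines
```

A check-in triggered this way can be phrased conversationally by the companion instead of appearing as a modal consent form.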

Establish strict internal policies about who can access user memories and under what circumstances. Your team's ability to see stored conversations creates power imbalances that require careful governance and regular audits to prevent abuse.
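
Internal access rules are easier to audit when every request passes through one function that checks a documented reason and logs the attempt whether or not it succeeds. The roles, reasons, `AccessLog` class, and `request_access` helper below are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime

# Hypothetical policy: which staff roles may read user memories, and for which reasons.
ALLOWED = {
    "trust_and_safety": {"abuse_report", "legal_request"},
    "support": {"user_requested_help"},
}


@dataclass
class AccessLog:
    # Each entry: (time, staff member, role, stated reason, granted?)
    entries: list[tuple[datetime, str, str, str, bool]] = field(default_factory=list)

    def record(self, when: datetime, staff: str, role: str, reason: str, granted: bool) -> None:
        self.entries.append((when, staff, role, reason, granted))

    def denied(self) -> list[tuple[datetime, str, str, str, bool]]:
        """Refused attempts, for periodic audit review."""
        return [e for e in self.entries if not e[4]]


def request_access(log: AccessLog, staff: str, role: str, reason: str, when: datetime) -> bool:
    """Grant access only for an allowed (role, reason) pair; log every attempt either way."""
    granted = reason in ALLOWED.get(role, set())
    log.record(when, staff, role, reason, granted)
    return granted
```

Logging denied attempts matters as much as logging granted ones, because a pattern of refused requests can be an early sign that someone is probing the policy.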

Where to go from here

Understanding AI ethics and responsible AI gives you the foundation to evaluate any AI system that asks for your trust. You now know the difference between ethical principles and operational implementation, why both matter for relational AI, and what frameworks actually look like in practice. These aren't abstract concerns when AI systems remember your conversations and build relationships over time. The principles we've covered apply whether you're choosing an AI companion or building one.

At SAM, we built our platform around these principles from day one. Our approach to AI companionship prioritizes transparency about memory systems, clear consent mechanisms, and user control over stored information. You can explore how we translate these frameworks into features that protect you while maintaining meaningful conversational experiences. The work of making AI relational and responsible isn't finished, but the frameworks exist to guide that development thoughtfully.