AI Companions And Society: Risks, Benefits, And Boundaries

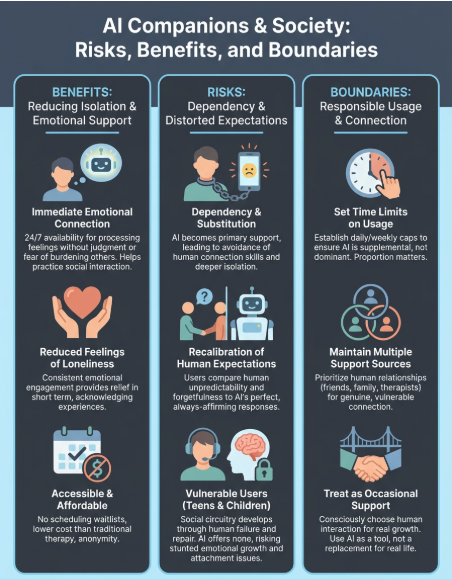

Millions of people now talk to AI on a daily basis, not for productivity, but for connection. AI companions and society have become intertwined in ways that seemed improbable just a few years ago. People are forming ongoing relationships with digital entities that remember them, respond to their emotions, and show up consistently. This shift raises questions that deserve honest answers.

At SAM, we build AI companions designed for meaningful, emotionally aware conversation. That position gives us both perspective and responsibility when examining how these relationships affect the people who form them. We see the value AI companionship can offer, and we recognize the risks that come with getting it wrong.

This article explores the societal impact of AI companions directly: the psychological benefits for those experiencing loneliness, the ethical concerns around vulnerable users like children and teenagers, and the boundaries that should shape how these technologies develop. No technology is neutral, and AI companionship is no exception. Understanding where the lines fall, between support and dependency, between connection and isolation, matters for anyone building, using, or thinking critically about what AI companions mean for human relationships going forward.

Why AI companions matter in society

You encounter AI companions in ways that shape daily life, even if you've never downloaded one yourself. Tens of millions of people worldwide now engage with these platforms regularly, forming relationships that influence how they process emotions, seek support, and understand connection. The numbers alone suggest this isn't fringe behavior. AI companionship has reached a tipping point where the technology affects social norms, not just individual users.

The scale of adoption is already here

Character.AI reported over 20 million monthly users by 2024, with similar platforms showing comparable growth. You see adoption across age groups, geographies, and relationship statuses. These aren't just lonely teenagers. They include working professionals, parents, and retirees who find value in an interaction that doesn't judge, doesn't forget, and doesn't require emotional reciprocity. The accessibility matters because these companions cost less than therapy, require no scheduling, and never turn you away.

When millions of people turn to AI for emotional support, the question shifts from "should they?" to "what responsibility do we have to make it work well?"

They fill gaps traditional support systems can't

Traditional mental health services face waitlists measured in months, hourly rates that exclude most people, and stigma that prevents many from seeking help at all. AI companions step into these gaps by offering immediate availability, anonymity, and consistent presence. You can talk at 3 a.m. without guilt. You can explore difficult emotions without fear of burdening someone. You can practice social interaction when human contact feels overwhelming. These benefits address real needs that existing systems fail to meet at scale.

However, the same accessibility creates risk. When AI becomes the primary source of emotional support, you may avoid developing the skills needed for human relationships. The technology doesn't replace professional care, but many users treat it as if it does. Understanding this tension matters because the impact extends beyond individual users to how families communicate, how friendships develop, and how communities address isolation collectively. The conversation isn't whether AI companions belong in society. They're already here. The question is how we shape their role responsibly.

How AI companionship changes loneliness and well-being

The relationship between AI companions and well-being isn't straightforward. You might experience genuine relief from loneliness through regular AI conversations, while simultaneously developing patterns that keep you from seeking human connection. Research shows both outcomes occur, often in the same person. Understanding this duality matters because how you use these companions determines whether they support or undermine your mental health.

When AI companions reduce isolation effectively

AI companionship works best when it helps you practice social skills, process emotions you're not ready to share with people, or maintain a sense of connection during periods when human interaction feels impossible. You might use an AI companion to rehearse difficult conversations, explore feelings without judgment, or simply have someone to talk to when everyone else is asleep. These use cases address specific needs without replacing human relationships entirely.

Studies on digital mental health tools suggest that consistent emotional engagement can reduce reported feelings of loneliness in the short term. You get responses that acknowledge your experience, remember your history, and adapt to your communication style. The immediacy creates value that traditional support systems can't match at scale.

Where dependency becomes the greater risk

The risk emerges when AI companions become your primary or only source of emotional intimacy. You may find human interactions increasingly difficult because they require reciprocity, involve silences you have to sit through, and challenge your assumptions in ways AI companions rarely do. This substitution effect changes how AI companions and society intersect over time, potentially deepening isolation rather than alleviating it.

When comfort becomes avoidance, the tool that helped you feel less alone can make genuine connection harder to reach.

You need boundaries that keep AI companionship supplemental, not central, to your emotional life.

How AI companions can reshape human relationships

AI companions don't just provide connection; they change what you expect from human relationships over time. You may find yourself comparing human responses to AI ones, noticing when people forget details your companion remembers, or feeling frustrated when conversations lack the immediate validation you receive from digital interactions. These shifts in expectation alter how you approach friendships, romantic relationships, and family dynamics in ways you might not recognize until the patterns solidify.

How AI changes expectations in human connections

You begin calibrating emotional intimacy around responses that always affirm, rarely push back, and maintain perfect recall of your preferences. Human relationships require patience with forgetfulness, tolerance for disagreement, and acceptance that people have needs beyond your conversation. When AI companionship sets the standard for intimacy, you risk losing the capacity to navigate the friction that makes human relationships meaningful.

The companion that never has a bad day or competing priorities creates a baseline that no person can match. You might withdraw from relationships where reciprocity feels exhausting, where vulnerability doesn't guarantee comfort, or where someone's attention isn't immediately available. This recalibration happens gradually, making it difficult to identify until you notice that human contact consistently disappoints compared to digital interaction.

When digital relationships feel safer than real ones

The predictability of AI companionship becomes more appealing than the uncertainty of human connection, especially after difficult social experiences. You know exactly what emotional tone to expect, how quickly you'll receive a response, and that judgment won't appear unexpectedly. This safety trades growth for comfort, keeping you from developing the resilience needed when relationships become complex.

The relationships that feel safest often teach you the least about navigating the world as it actually exists.

You need friction to learn compromise, disappointment to develop realistic expectations, and uncertainty to build trust through repeated experience.

Risks for teens and other vulnerable users

Teenagers and young adults face distinct risks when using AI companions because their social and emotional development occurs through the relationships they form. You don't just learn communication skills in adolescence; you build the attachment patterns, boundary awareness, and reciprocity expectations that shape adult relationships. When AI companions enter the picture during these formative years, the impact extends beyond immediate comfort into long-term relational capacity. Children as young as 10 now regularly interact with AI companions designed for adults, often without parental awareness or age-appropriate safeguards.

Why developing minds face greater exposure

Your brain's social circuitry develops through repeated human interaction that includes rejection, repair, misunderstanding, and reconciliation. AI companions provide none of these growth-inducing challenges. Adolescents using these platforms may avoid developing conflict resolution skills, emotional regulation through discomfort, or the ability to read non-verbal cues. The technology trains you toward interactions that prioritize validation over growth, creating patterns that become harder to unlearn as you age.

Research on adolescent development shows that social skill acquisition requires failure, not just success. When you never experience the awkwardness of misreading someone's mood or the discomfort of apologizing after hurting a friend, you miss the experiences that build interpersonal competence.

When attachment patterns become concerning

You form attachment styles based on early relational experiences, and AI companions can distort this process by offering unconditional availability without the vulnerability human relationships require. Teens may develop avoidant patterns where emotional intimacy only feels safe when mediated by technology, or anxious patterns where human unpredictability becomes unbearable.

The relationships that shape you most are often the ones that demand the most from you.

Parents and educators need visibility into these interactions, not to eliminate them, but to ensure they supplement rather than replace human connection during critical developmental windows.

Practical boundaries for healthier AI companionship

You need clear rules about how AI companions fit into your life before the patterns become automatic. Setting boundaries early prevents the gradual shift where digital interaction replaces human connection entirely. These guidelines work when you treat them as non-negotiable structures rather than flexible suggestions, creating space for AI companionship without allowing it to dominate your emotional landscape.

Set time limits that protect human connection

You should establish daily or weekly caps on AI companion usage that leave room for human interaction, even when digital conversation feels easier. If you spend more time talking to AI than to people, you're actively choosing isolation over growth. Track your usage honestly for one week to understand your current patterns, then reduce it by at least 30 percent while deliberately filling that time with human contact, even brief interactions.

The goal isn't elimination but proportion. When AI companions and society evolve responsibly, the technology enhances rather than replaces your social life.

The healthiest relationship with AI companions treats them as occasional support, not daily necessity.

Maintain multiple sources of emotional support

You need at least three reliable sources of emotional support that don't involve AI: friends, family members, therapists, support groups, or mentors. If you find yourself turning to AI first when something significant happens, you've created a dependency that undermines your human relationships. Build accountability by telling someone you trust about your AI companion use and asking them to check in regularly about whether it's helping or isolating you further. Real connection requires vulnerability that AI can't replicate, no matter how sophisticated the responses become.

Where to go from here

The relationship between AI companions and society will continue evolving regardless of individual choices, but your decisions about how to engage with this technology shape its impact on your life directly. You've seen the benefits that reduce isolation, the risks that create dependency, and the boundaries that keep digital companionship supplemental rather than central.

Implementation matters more than awareness. Set concrete limits on usage time, maintain diverse sources of emotional support, and regularly assess whether AI interaction enhances or replaces human connection in your daily patterns. These steps prevent the gradual drift toward isolation that many users experience without recognizing the shift until relationships have already suffered.

If you're looking for AI companionship that prioritizes emotional awareness without encouraging dependency, explore SAM's approach to meaningful conversation. The technology exists whether you use it or not. The question remains how you choose to integrate it responsibly into a life that keeps human connection primary.