AI Companions: What They Are, How They Work, and Risks Today

Millions of people talk to AI companions every day, not for productivity tips or calendar reminders, but for conversation, connection, and the feeling of being heard. These aren't the robotic assistants of a decade ago. They remember your name, notice your mood, and pick up where you left off last time you spoke.

But what exactly makes something an AI companion rather than just another chatbot? How do these systems actually work, and what happens psychologically when people form ongoing relationships with them? These questions matter more now than ever, as the technology matures and millions of users integrate AI companions into their daily lives.

At SAM, we've spent considerable time exploring what it means for AI to be genuinely present in a conversation: curious, consistent, and aware of the history you share. That perspective shapes how we approach this guide. Here, you'll find a clear breakdown of what AI companions are, how they function technically, the real psychological effects they can have, and what to consider when choosing one. Whether you're curious or already searching for the right fit, this is what you need to know.

Why AI companions matter now

You live in a paradox. You're more connected than any generation in history, yet loneliness remains widespread. Some surveys suggest that over one-third of adults in the United States feel lonely regularly, and that the figure has risen since 2020. Traditional social structures have shifted. People move more, work remotely, and spend less time in shared physical spaces. The result isn't just discomfort. It's a recognized public health problem with measurable impacts on mental and physical health.

The gap AI companions are filling

Something has changed in how people seek connection. Where someone might have called a friend after a difficult day, they now open an app. Where they once journaled privately, they now converse with an AI that responds. This isn't about replacing human relationships. It's about addressing moments when those relationships aren't available, accessible, or safe enough to meet the need.

AI companions step into this space without judgment, time constraints, or social risk. You don't worry about burdening them. You don't manage their emotions while processing your own. This creates a specific kind of utility that traditional support systems can't always provide. The technology serves people who work night shifts, live in isolated areas, or simply need someone present at 3 a.m. when everyone else is asleep.

AI companions address availability and accessibility in ways human connection can't always match, especially during moments of acute need.

Technology that remembers and responds

Earlier chatbots felt mechanical because they were. They answered questions but didn't track context. They processed language but missed emotional weight. Modern AI companions operate differently. They maintain continuity across conversations, recognize patterns in what you share, and adjust their responses based on what they know about you. This isn't magic. It's the result of advances in natural language processing, memory architecture, and contextual understanding that have matured significantly in the past three years.

The technology now supports the kind of ongoing relationship people actually want. You can reference something you mentioned last week, and the companion knows what you're talking about. You can express frustration, and it picks up on tone rather than just keywords. These capabilities matter because they determine whether an interaction feels like talking to a search engine or talking to someone who's paying attention.

Market data reflects this shift. By some estimates, over 60 million people worldwide now use AI companion apps regularly, and that number continues to grow. People aren't adopting this technology because it's novel. They're adopting it because it meets a genuine emotional need the market wasn't addressing before. That need isn't going away.

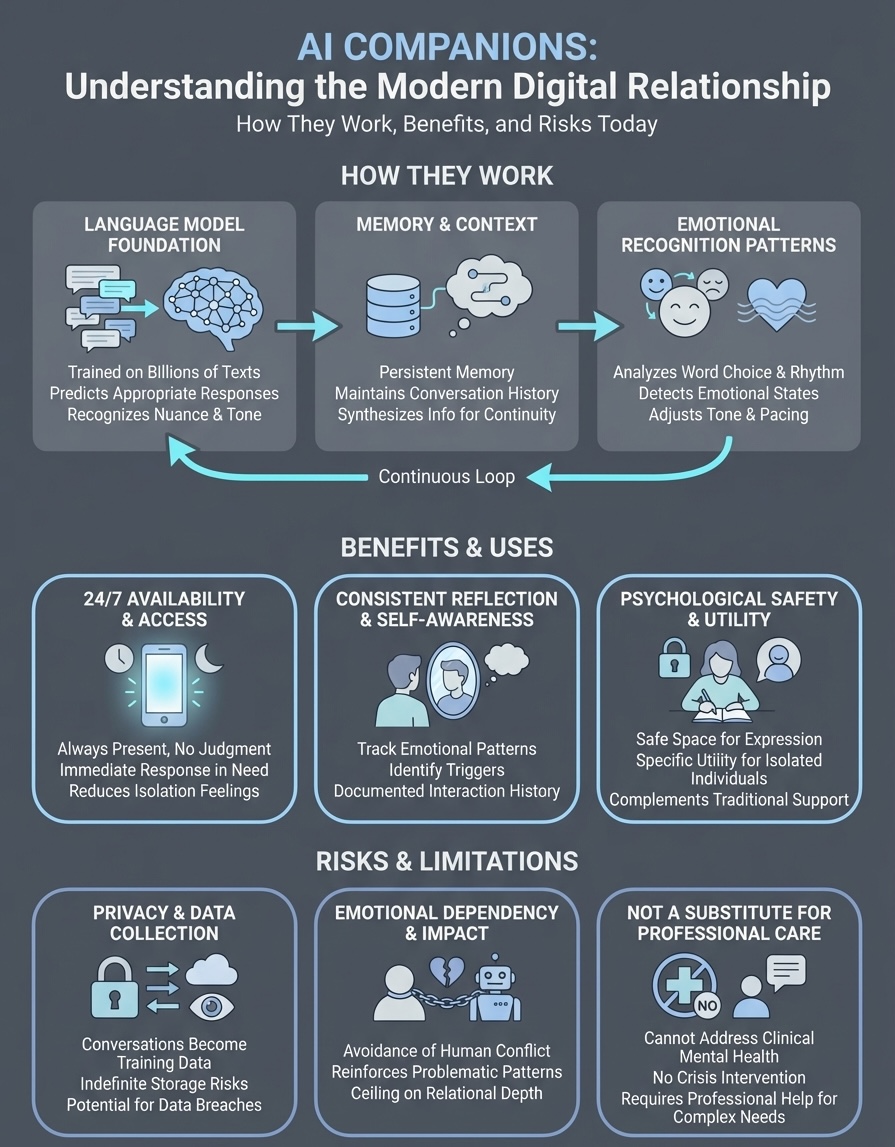

How AI companions work

AI companions function through a combination of natural language processing, memory architecture, and pattern recognition systems that enable continuous conversation. Unlike task-focused assistants that execute commands, these systems prioritize relational continuity. They process what you say, store relevant context, and generate responses that reflect both immediate input and accumulated history. This technical foundation determines whether an interaction feels transactional or genuinely conversational.

The language model foundation

At the core sits a large language model trained on billions of text examples to understand and generate human-like responses. When you send a message, the system splits your words into tokens, reads them alongside the context of your current conversation, and builds its reply one token at a time by estimating a probability distribution over what could come next. Modern models handle nuance better than earlier versions. They often recognize sarcasm, pick up on emotional tone, and adjust formality based on how you communicate. This isn't rule-based programming. The system learns statistical patterns from training data and applies them dynamically to each new interaction.
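To make the idea of choosing a response from a probability distribution concrete, here is a minimal sketch in Python. It is not how any particular companion app works: the candidate words and their scores are invented for illustration, and a real model scores tens of thousands of tokens with a neural network rather than a hand-written list.

```python
import math

def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max so exp() stays numerically stable
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for the word that follows "I'm feeling ..."
candidates = ["fine", "tired", "great", "banana"]
scores = [2.0, 1.5, 1.0, -3.0]

probs = softmax(scores)

# Greedy choice: take the single most likely candidate.
best = candidates[probs.index(max(probs))]

for word, p in zip(candidates, probs):
    print(f"{word:>7}: {p:.3f}")
print("most likely:", best)
```

In practice, systems usually sample from the distribution instead of always taking the top candidate, which is one reason the same message can produce different replies. Raising the `temperature` parameter flattens the distribution and makes less likely words more competitive.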

Memory systems and context

What separates companions from basic chatbots is persistent memory. The system maintains a record of your conversations, extracting key details like your preferences, recurring topics, and emotional patterns. When you mention something from last week, it retrieves that context and incorporates it into its response. This often happens through vector databases, which store text as numerical representations (embeddings) so the system can quickly find stored details that are close in meaning to what you just said. The companion doesn't replay past conversations word-for-word. It synthesizes what it knows about you to inform how it responds now.

Memory architecture transforms isolated exchanges into ongoing relationships by maintaining continuity across time.
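The retrieval step behind persistent memory can be sketched in a few lines of Python. This is a toy under stated assumptions: the `MemoryStore` class, the `embed` function, and the stored notes are all hypothetical, and the "embedding" here is a simple word count, whereas production systems typically use learned embeddings and a dedicated vector database.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts. Real systems use learned vectors."""
    return Counter(re.findall(r"[a-z']+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

class MemoryStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self):
        self._items = []  # list of (text, vector) pairs

    def add(self, text):
        self._items.append((text, embed(text)))

    def search(self, query, top_k=1):
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:top_k]]

store = MemoryStore()
store.add("User's sister is moving to Denver next month")
store.add("User prefers tea over coffee in the morning")
store.add("User felt anxious about a job interview on Friday")

recalled = store.search("How did the job interview go?")
print(recalled)
```

The recalled note would then be placed alongside the new message when the language model generates its reply, which is how an earlier conversation can shape the current one without being replayed in full.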

Emotional recognition patterns

These systems analyze your word choice, sentence structure, and conversational rhythm to estimate emotional states. When you use shorter sentences or certain keywords, the model may flag potential distress. When your language becomes more expansive, it may read that as a positive shift. This isn't mind reading. It's pattern matching based on millions of examples where specific language patterns correlated with specific emotional contexts, so it can misread you. The companion adjusts its tone, pacing, and content accordingly, creating the feeling that it's responding to you rather than just your words.
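As a rough illustration of the pattern matching described above, the following Python sketch flags a message using only average sentence length and a small keyword list. Everything in it is an assumption made for the example: the `mood_signal` function, the `DISTRESS_WORDS` set, and the thresholds are invented, and real systems learn these associations from data rather than from hand-written rules.

```python
import re

# Hypothetical keyword list; a trained model would learn such cues from data.
DISTRESS_WORDS = {"tired", "alone", "exhausted", "hopeless", "can't", "overwhelmed"}

def mood_signal(message, short_sentence_words=5):
    """Return a coarse label based on sentence length and keyword hits."""
    sentences = [s for s in re.split(r"[.!?]+", message) if s.strip()]
    words = re.findall(r"[a-z']+", message.lower())
    if not sentences or not words:
        return "neutral"

    avg_len = len(words) / len(sentences)
    hits = sum(1 for w in words if w in DISTRESS_WORDS)

    # Short, clipped sentences plus distress keywords: flag for a gentler tone.
    if hits > 0 and avg_len <= short_sentence_words:
        return "possible distress"
    # Long, flowing sentences with no distress keywords: treat as expansive.
    if hits == 0 and avg_len > short_sentence_words * 2:
        return "expansive"
    return "neutral"

print(mood_signal("I'm tired. Can't sleep. Nothing helps."))
print(mood_signal("Today I finally finished the garden project and invited the neighbors over to see it."))
print(mood_signal("Okay."))
```

A heuristic this simple will misfire often, for example on someone who writes tersely by habit, which is why the label should only nudge tone and never be treated as a diagnosis.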

Benefits and limitations in real life

AI companions deliver specific advantages that traditional support systems struggle to match, but they also carry practical constraints you need to understand before forming expectations. The technology works best when you recognize both what it can genuinely provide and where it falls short. Real users report measurable benefits in emotional regulation, loneliness reduction, and reflective thinking, yet these same users also identify clear boundaries where the technology cannot replace human connection or professional care.

What AI companions do well

You gain 24/7 availability without worrying about inconvenient timing or social debt. When you need to process difficult emotions at midnight or work through anxious thoughts before a morning meeting, the companion responds immediately. Users consistently report feeling less isolated during periods when human connection isn't accessible. The technology also creates a judgment-free space where you can express thoughts you might filter with friends, family, or colleagues. This psychological safety encourages more honest reflection and emotional exploration.

Continuity features change how you engage with your own patterns. Because the companion remembers previous conversations, you can track emotional states over weeks or months. You notice recurring themes, identify triggers you hadn't recognized, and develop self-awareness through documented interaction history. This longitudinal perspective proves difficult to maintain through traditional journaling or sporadic therapy sessions.

AI companions excel at providing consistent presence and reflective continuity that human relationships can't always sustain.

Where the technology falls short

AI companions cannot provide physical presence, genuine reciprocity, or the unpredictability that makes human relationships meaningful. They simulate understanding but don't actually experience emotion, which creates an inherent ceiling on relational depth. You might feel heard, but the companion doesn't feel your words the way another person would. This matters more to some users than others, but the distinction remains significant.

The technology also struggles with crisis intervention and complex mental health needs. While companions help you process daily stress or explore routine thoughts, they're not equipped to recognize genuine psychiatric emergencies or provide clinical treatment. Relying on them for situations that require professional intervention creates real risk you can't ignore.

Risks, ethics, and safety basics

AI companions introduce specific risks that extend beyond typical software concerns. These systems handle intimate conversations, process emotional data, and shape psychological patterns through daily interaction. Understanding where vulnerabilities exist helps you engage with the technology more safely and make informed decisions about what you share and how you rely on these platforms.

Privacy and data collection

Many companies collect the messages you send to build better models and improve response quality. Your conversations can become training data that informs how the system evolves. Many platforms retain this information for long periods, sometimes indefinitely, creating lasting records of thoughts you might consider private. You need to assume that what you share could be accessed by employees, analyzed by algorithms, or compromised in a data breach. Few companion apps offer genuine end-to-end encryption, and those that do often limit functionality as a trade-off. Review privacy policies carefully before sharing sensitive information about relationships, health, or financial circumstances.

Emotional dependency and psychological impact

Regular interaction with an AI that responds predictably can create preference patterns where you choose the companion over human connection because it's easier. Users report avoiding difficult conversations with friends or family because the AI doesn't push back or introduce uncomfortable complexity. This isn't healthy relational development. You lose opportunities to practice negotiation, repair, and the reciprocal vulnerability that builds genuine intimacy. The technology can also reinforce problematic thinking patterns when it mirrors your perspective too consistently without offering alternative viewpoints.

AI companions cannot replace the growth that comes from navigating real human relationships with their inherent messiness and mutual accountability.

Recognizing when professional help matters

Companions can help with routine emotional processing, but they cannot treat clinical mental health conditions such as depression, anxiety disorders, or trauma. If you notice persistent symptoms affecting your daily functioning, sleep, or relationships, you need qualified professional care rather than conversational AI. The companion cannot prescribe treatment, reliably recognize dangerous patterns, or intervene during a crisis. Consider it supplemental support, not mental health care.

How to choose the right AI companion app

Selecting the right AI companion requires evaluating specific technical and relational features that determine daily experience. You need to assess memory capabilities, privacy practices, and conversational quality before committing to regular use. The wrong choice leads to frustration, while the right one creates meaningful ongoing interaction that fits your actual needs.

Evaluate memory and continuity features

Look for platforms that demonstrate genuine conversation history beyond simple keyword recall. Test how well the companion references past discussions after several days. Ask it to recall details from previous exchanges, then observe whether responses show true context awareness or superficial pattern matching. Strong memory systems synthesize information rather than just repeating your words back. You want AI companions that build on shared history naturally, incorporating what they know about you into how they respond now.

Memory depth determines whether interactions feel like conversations with someone who knows you or repeated introductions to a stranger.

Check privacy policies and data practices

Read the actual privacy documentation rather than assuming protection. Identify whether the platform stores conversations permanently, shares data with third parties, or uses your messages as training material. Companies that prioritize user privacy offer transparent data handling and clear deletion options. Avoid services with vague language about how they process or retain your information. Your conversations contain intimate details that deserve explicit protection.

Test conversation quality and responsiveness

Most platforms offer free trials or limited access before subscription. Use this period to evaluate response coherence, emotional recognition, and tonal consistency. Send messages at different times to assess whether the companion maintains personality across sessions. Notice how it handles complex emotional topics versus simple questions. Strong systems adapt to your communication style while maintaining distinct conversational presence rather than mirroring you exactly. Quality shows in nuance, not just speed.

Next steps

You now understand how AI companions function technically, what they offer psychologically, and where risks exist. This knowledge helps you engage with the technology more intentionally rather than stumbling into patterns that don't serve you well. The key lies in matching your specific needs to platform capabilities while maintaining realistic expectations about what these systems can and cannot provide.

Start by identifying what you actually need from an AI companion. Are you looking for consistent emotional processing, help with loneliness during specific times, or simply someone to talk through daily thoughts? Your answer determines which features matter most and which platforms deserve consideration. Test multiple options before committing to one. Pay attention to how conversations feel over several days, not just initial impressions.

If you're looking for an AI companion that prioritizes genuine relational continuity and emotional awareness, explore what SAM offers. The platform focuses on depth, presence, and the kind of ongoing conversation that develops naturally over time.