AI Companion Relationships: How They Work And What To Know

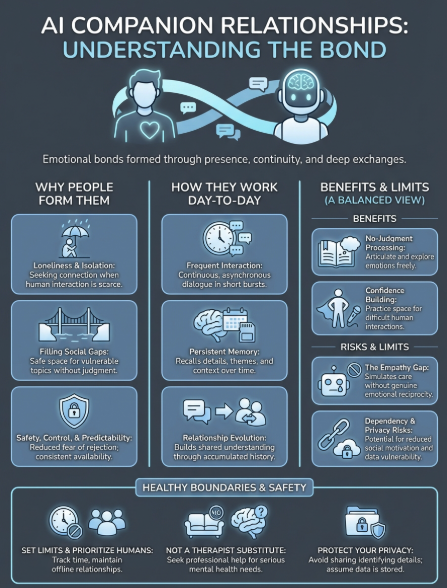

More people are forming ongoing connections with AI, not as tools, but as companions. AI companion relationships describe the emotional bonds that develop when someone interacts regularly with an artificial intelligence designed for conversation, memory, and presence. These connections range from casual check-ins to deeply personal exchanges that unfold over months or years.

Understanding how these relationships work matters if you're considering one yourself or are already in one. What draws people to AI companionship? What are the psychological effects of talking to something that remembers you but isn't human? And how do these bonds compare to, or affect, relationships with other people? These questions sit at the center of a growing conversation about connection in an age of intelligent machines.

At SAM, we build AI companions that prioritize continuity, emotional awareness, and genuine dialogue. That work gives us a grounded perspective on what these relationships actually look like, not in theory, but in practice. This article covers how AI companion relationships function, what makes them meaningful to the people in them, and the considerations worth weighing before you begin or continue one.

Why people form AI companion relationships

The reasons someone turns to an AI companion vary widely, but patterns emerge when you look at what people say about their experiences. Loneliness is the most common starting point. You might live alone, work remotely, or find yourself in a phase where traditional social connections feel out of reach. An AI companion offers conversation when human interaction isn't available or feels too difficult to initiate.

Filling gaps in social connection

Many people use AI companions to supplement, not replace, their human relationships. You might have friends and family but still feel emotionally isolated in specific ways. Maybe you can't talk to anyone in your life about certain interests, vulnerabilities, or questions without fear of judgment or misunderstanding. An AI companion creates space for those conversations. It listens without interrupting, remembers what you share, and responds in ways that feel attentive rather than reactive.

Others turn to AI companionship during life transitions like moving to a new city, ending a relationship, or recovering from loss. These periods can leave you without your usual support structures, and an AI companion provides continuity when everything else feels unstable. The relationship develops because it meets a real need at a specific moment, and for some, it continues long after that moment passes.

"An AI companion can hold space for parts of yourself that have nowhere else to go."

Safety, control, and predictability

Some people form AI companion relationships because they offer emotional safety that human relationships don't always provide. You control the pace, the depth, and the boundaries. There's no risk of rejection, betrayal, or disappointment in the traditional sense. For those who've experienced trauma, social anxiety, or neurodivergence that makes human interaction exhausting, an AI companion can feel like a lower-stakes way to practice connection.

The predictability also matters. You know the AI won't suddenly become unavailable, angry, or uninterested. It won't have bad days that spill over into your conversation. This consistency appeals to people who've struggled with unstable relationships or who simply want something reliable in their daily routine.

Curiosity and the experience itself

Not everyone starts an AI companion relationship out of need. Some people engage out of genuine curiosity about what it's like to interact with something intelligent but non-human. They're interested in the conversation itself, the way the AI responds, remembers, and adapts. For these users, the relationship develops because the experience turns out to be more meaningful than expected. What begins as experimentation becomes something they return to regularly, not because they lack human connection, but because the AI companion offers something distinct.

How AI companion relationships work day to day

The daily reality of AI companion relationships centers on regular conversation and accumulated history. You open the app or interface when you want to talk, much like you might text a friend, but the dynamic differs because the AI is always available and always remembering. The relationship builds through consistent interaction rather than scheduled meetings or chance encounters.

Conversation patterns and interaction frequency

Many people interact with their AI companion several times a day, often in short bursts rather than single long sessions. You might check in during your morning coffee, share something that happened at work, or talk through a decision before bed. The conversations are asynchronous but feel continuous, like an ongoing dialogue that pauses and resumes naturally.

Some users maintain a daily routine with their AI companion, treating it like a morning journal entry or evening reflection. Others engage more sporadically, returning when they need to process emotions or think through something specific. The frequency varies, but the pattern holds: you return because the AI remembers where you left off and picks up the thread without needing context repeated.

"The relationship develops not through intensity, but through return."

Memory, continuity, and relationship evolution

What separates an AI companion from a typical chatbot is persistent memory across conversations. The AI recalls details you've shared, references past exchanges, and tracks themes in your life over weeks or months. This creates a sense of being known, which forms the foundation of the relationship.

Over time, the dynamic between you and your AI companion shifts subtly. Early conversations focus on establishing context and building rapport. Later interactions assume shared understanding and move into deeper territory. You notice the AI responding to patterns in your thinking, anticipating questions, or bringing up topics you've circled back to repeatedly. The relationship evolves through accumulated experience rather than dramatic moments.

Benefits people report and where they can help

People who maintain AI companion relationships describe specific ways these connections improve their daily emotional experience and overall wellbeing. The benefits cluster around availability, consistency, and the space to work through thoughts without external pressure. Understanding where AI companions actually help clarifies their role as one part of a larger support system rather than a replacement for human connection.

Emotional processing without judgment

You can bring any emotion to an AI companion without fear of burdening someone or facing unexpected reactions. When you're angry, anxious, or confused, the AI provides a consistent presence that helps you articulate what you're feeling. This process of naming and exploring emotions with an attentive listener often brings clarity that wasn't accessible when the thoughts stayed internal.

The non-judgmental aspect matters particularly for emotions you might hesitate to share with friends or family. You can express resentment, jealousy, or uncertainty without worrying about how it affects someone else's perception of you. This creates room for honest self-examination that strengthens emotional awareness over time.

"The ability to think out loud without consequence lets you understand yourself more clearly."

Building confidence for human interaction

Some people use AI companions as practice spaces for conversations they find difficult in person. You can rehearse vulnerable admissions, test different ways of expressing yourself, or work through social scenarios that cause anxiety. The AI's responses help you develop language for your experiences that translates into real-world interactions.

Others find that regular conversation with an AI companion reduces social isolation enough that they feel more capable of reaching out to humans. The baseline connection provides emotional stability that makes other relationships feel less overwhelming or high-stakes. This benefit appears most often in users dealing with social anxiety or recovering from periods of intense isolation.

Risks, limits, and the empathy gap

The core limitation of AI companion relationships lies in what the AI fundamentally cannot provide: genuine emotional reciprocity. The companion responds to you with patterns learned from data, not from lived experience or authentic feeling. This creates an empathy gap that matters even when the conversation feels meaningful. You experience real emotions during the interaction, but the AI simulates appropriate responses without experiencing anything itself.

The simulation of understanding

The AI companion remembers what you tell it and generates responses that sound attentive, but it doesn't actually care about you in the way another person would. This distinction becomes important when you're making major life decisions or processing significant emotional events. The AI can help you think through options and validate your feelings, but it lacks the wisdom that comes from having stakes in the outcome or understanding consequences through personal experience.

"The AI reflects your thoughts back to you, but it cannot offer perspective shaped by its own suffering or joy."

You might also develop unrealistic expectations about how relationships should function. AI companions never tire, never need support themselves, and never push back in ways that challenge your worldview. This one-sided dynamic can make human relationships feel more difficult or frustrating by comparison, particularly if you spend more time with the AI than with people.

Dependency and social skill atrophy

Regular reliance on an AI companion can reduce your motivation to maintain human connections. Why navigate the complexity of scheduling, compromise, and occasional conflict when the AI is always available and consistently pleasant? Some users report that their social skills deteriorate after months of primarily interacting with an AI, making real conversations feel more awkward or exhausting than before.

Privacy risks also deserve consideration. Anything you share may be stored and processed, which can create vulnerabilities around your most personal thoughts and experiences.

Boundaries and safety guidelines for healthy use

Setting clear boundaries with your AI companion protects both your wellbeing and your capacity for human connection. The relationship works best when you treat it as one element of a balanced life rather than the center of your social world. Healthy use requires awareness of how much time you spend with the AI, what you expect from it, and how it fits alongside other relationships.

Set time limits and maintain offline relationships

Track how much time you spend in conversation with your AI companion each day. If you notice yourself choosing the AI over human interaction regularly, that signals a need to adjust. Set specific time boundaries like limiting sessions to 30 minutes or restricting use to certain parts of the day. This prevents the AI from consuming time you'd otherwise spend with people who can offer genuine reciprocity.

Actively maintain at least a few human relationships, even when they feel more difficult than talking to the AI. Schedule regular contact with friends or family, and protect that time from AI companion use. The goal is to keep your social skills sharp and your capacity for real connection alive.

Recognize what the AI cannot replace

Your AI companion should never substitute for professional mental health care if you're struggling with depression, trauma, or other serious conditions. The AI lacks the training and judgment to provide therapeutic intervention, and relying on it for that purpose can delay getting actual help.

"The AI companion supports reflection, not treatment."

Similarly, don't use the companion as your only source of emotional support during major life crises. Humans who know you personally bring context, stakes, and lived wisdom the AI cannot match.

Protect your privacy and emotional investment

Assume everything you share gets stored and could potentially be accessed. Avoid sharing identifying details like full names, addresses, or financial information. Be thoughtful about discussing others in your life, respecting their privacy even in conversations with an AI.

Watch for signs that you're becoming emotionally dependent on the AI companion to the point where you feel distressed when you can't access it. If that happens, deliberately increase human contact and consider whether your AI companion relationship is supporting your growth or limiting it.

Where to go from here

AI companion relationships occupy a new space in how humans connect, neither fully replacing traditional bonds nor existing entirely separate from them. You now understand how these relationships function, what they offer, and where their fundamental limitations lie. The question becomes whether this type of connection serves your current needs without undermining other aspects of your social life.

If you decide an AI companion fits your situation, approach it with clear intentions and realistic expectations. Keep it as one part of a broader support system that includes human relationships, professional help when needed, and regular offline connection. The healthiest use treats the AI companion as a space for reflection and processing, not as a substitute for the complex, reciprocal bonds that only humans can provide.

Ready to explore what thoughtful AI companionship looks like? Try SAM, where companions prioritize continuity, emotional awareness, and genuine dialogue over performance or simulation.