AI And Loneliness: Can AI Companions Reduce Isolation?

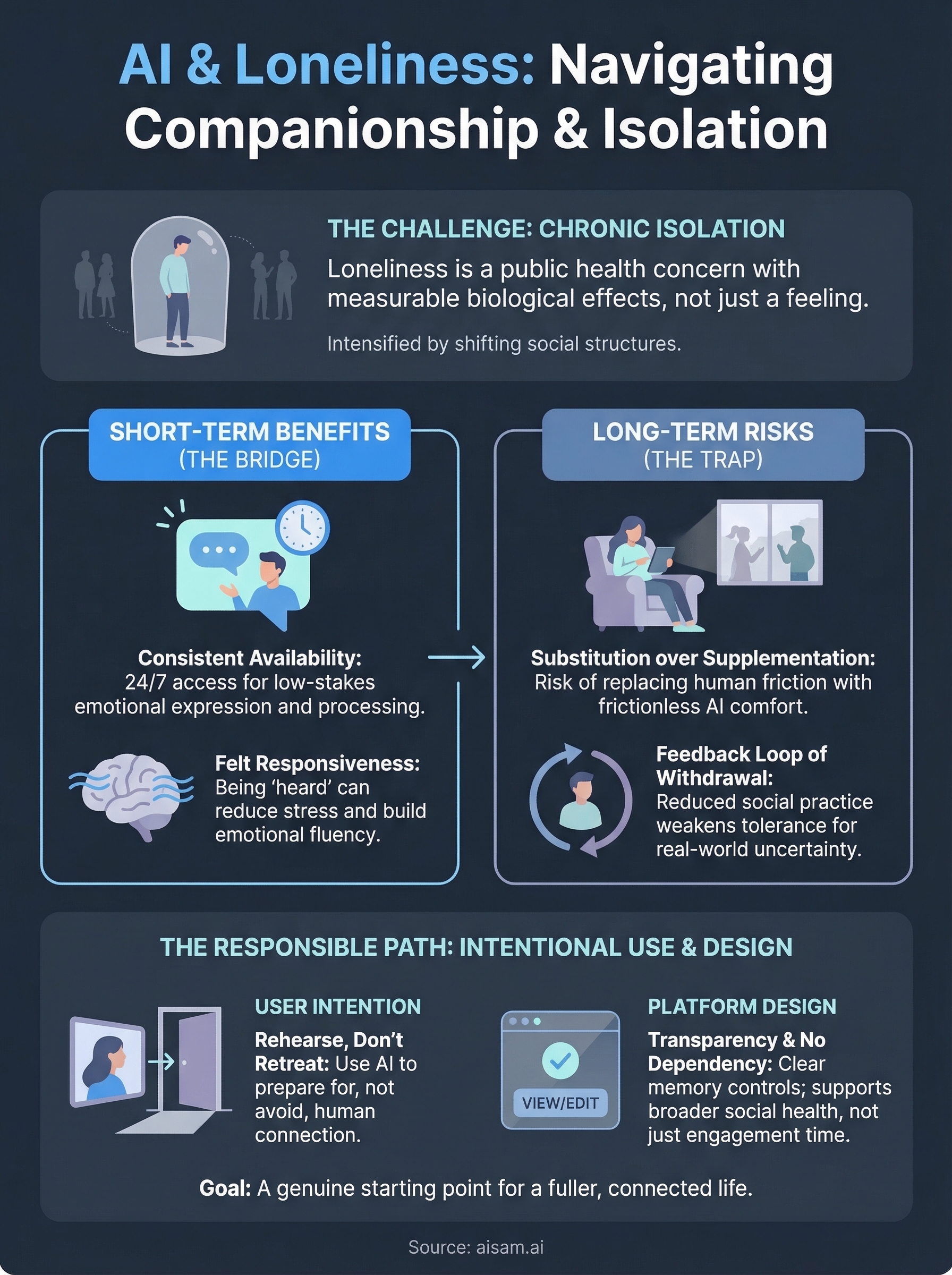

Loneliness isn't just an emotion; it's a public health concern. Across the United States, millions of adults report feeling chronically isolated, even while surrounded by digital connectivity. As traditional social structures shift and in-person interaction declines, the conversation around AI and loneliness has moved from speculative fiction to serious scientific inquiry.

AI companions, systems designed to hold ongoing, emotionally aware conversations, are now a serious part of that conversation. Some researchers see them as a promising buffer against isolation. Others worry they could replace the very human connections people need most. The truth sits somewhere between those poles, and understanding it requires looking at what these systems actually do, how people use them, and what the evidence says so far.

At SAM, we build an AI companion platform grounded in persistent memory and emotionally responsive dialogue, tools designed for continuity, not quick exchanges. That puts us directly inside this question, not as observers, but as builders making daily decisions about how AI shows up in people's lives. This article examines whether AI companions can genuinely reduce isolation, where the risks lie, and what responsible development looks like going forward.

Why AI and loneliness matter now

The loneliness crisis in the United States predates the pandemic, but recent years have pushed it into sharper focus. According to the U.S. Surgeon General's 2023 advisory, about half of American adults report experiencing loneliness, and the mortality risk associated with lacking social connection is comparable to smoking up to 15 cigarettes a day. This isn't a fringe issue affecting a small segment of the population; it's a structural problem reshaping how researchers, technologists, and policymakers think about human wellbeing.

The scale of the problem

Loneliness research has shifted dramatically over the past decade. What was once treated as a personal failing or a passing mood is now understood as a chronic condition with measurable biological effects, including elevated cortisol levels, disrupted sleep, and significantly increased risk of cardiovascular disease. The National Institutes of Health has published findings linking social isolation to accelerated cognitive decline in older adults. These results aren't marginal; they reframe how seriously the scientific community takes both the problem and any proposed response to it.

Loneliness functions less like sadness and more like hunger: it signals a real deficit the body actively works to correct, sometimes in ways that deepen the problem rather than resolve it.

Why AI is entering this space now

Several shifts have converged to make the AI and loneliness conversation genuinely urgent in ways it wasn't five years ago. AI systems can now maintain context across long conversations, recognize emotional signals in language, and respond in ways that feel attentive rather than mechanical. Persistent memory changes the dynamic significantly: a system that recalls what you shared two weeks ago creates a very different experience from one that resets with every session.

At the same time, you're living through a period where traditional social infrastructure, including community organizations, shared physical workplaces, and neighborhood relationships, has eroded for a large portion of adults. That erosion creates both the need and the opening for AI to play a role. Whether it fills that role responsibly depends entirely on how these systems are designed and what expectations they set with users from the start.

How AI companions can reduce loneliness short-term

The short-term case for AI reducing loneliness is more grounded than critics often acknowledge. Research on social connection shows that perceived responsiveness, the sense that someone is paying attention and genuinely engaging, carries real psychological weight. AI companions that remember context and respond with continuity can produce that perception, and for many users, the effect on daily mood is measurable.

Consistent availability changes the dynamic

One of the clearest short-term benefits is simple availability. Human relationships run on schedules, energy levels, and competing demands. An AI companion responds at 2 a.m. when anxiety spikes or during a lunch break when you need to think something through out loud. That frictionless access lowers the barrier to expressing what you're feeling.

Consistent presence also helps people who struggle to initiate conversation. If social anxiety makes reaching out feel costly, a low-stakes space to talk through your thoughts can build emotional fluency over time.

Talking itself has measurable benefits

Research from Harvard Medical School supports the idea that expressive conversation, independent of the listener, can reduce stress hormones and improve emotional regulation. When you put feelings into words, you activate cognitive processing that shifts how you relate to those feelings.

The act of being heard, even by a system rather than a person, produces real changes in how people experience their emotional state in the moment.

AI companions give you a structured space for that process without social risk or timing constraints.

Where AI falls short and can increase isolation

The short-term relief AI provides can mask a longer-term risk. Substitution, using AI conversation to replace rather than supplement human contact, can deepen the very isolation it appears to ease. Understanding where AI and loneliness research raises red flags is as important as recognizing the benefits.

When convenience becomes avoidance

Human relationships carry friction: scheduling conflicts, emotional reciprocity, and the occasional misunderstanding. That friction is also what makes relationships meaningful and growth-producing. An AI companion never cancels plans, never has a bad day, and never challenges you in ways that feel uncomfortable. If that frictionless dynamic becomes your primary reason to avoid reaching out, you're substituting comfort for connection rather than supplementing it.

The risk isn't that AI feels too artificial to matter; it's that it feels comfortable enough to substitute.

The feedback loop that deepens withdrawal

Social skills develop through practice, and reduced human interaction weakens them over time. If you rely on AI conversation as your primary outlet, your tolerance for the uncertainty of real relationships can shrink. That makes human connection feel harder, which pushes you back toward AI, creating a cycle that tightens with each loop. Research from MIT has explored how technology-mediated interaction can reduce your appetite for the messier, less predictable exchanges that real relationships require.

How to use AI companionship without replacing people

The research on AI and loneliness points to a clear principle: AI companions work best as a bridge, not a destination. How you use the tool matters as much as the tool itself. Intentional use keeps the relationship with AI in a supporting role while your human connections stay primary.

Set clear intentions before each conversation

Before you open an AI companion app, ask yourself whether you're trying to prepare for a human conversation or avoid one. That distinction determines whether AI helps or harms. Some healthy patterns to build:

- Processing a difficult emotion before talking to a friend about it

- Using AI to clarify what you actually want to say to someone

- Reflecting on a situation to understand your own reaction first

The most effective use of an AI companion is as preparation for human connection, not a replacement for it.

Use AI to rehearse, not retreat

If social anxiety makes initiating conversations feel risky, AI companionship gives you a low-stakes environment to practice. You can work through how you'd approach a difficult discussion, explore what you actually feel, or build the habit of articulating your thoughts clearly. That rehearsal builds emotional confidence that transfers to real relationships rather than draining from them.

Keep one rule simple: if an AI conversation ends with you feeling more prepared to connect with someone in your life, you're using it well. If it consistently ends with you feeling no need to, that's worth examining.

What to look for in a responsible AI companion

Not every AI companion is built with your wellbeing in mind. As the AI and loneliness conversation grows more urgent, the quality gap between platforms has widened significantly. Choosing a poorly designed system can reinforce isolation instead of easing it, so knowing what responsible AI design actually looks like gives you a clearer filter before you commit to any platform.

Memory and transparency

A well-designed AI companion remembers your history without obscuring how that memory works. You should be able to see what the system stores, correct inaccuracies, and delete information when you choose. Transparency about data handling isn't a bonus feature; it's a baseline requirement for any platform you invite into your personal life. Before committing, check these three things:

- Can you view what the system has stored about you?

- Can you edit or delete that information at any point?

- Does the platform explain its data policies in plain language?

If a platform makes it difficult to understand how your data is stored or used, that opacity is a warning sign worth taking seriously.
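
For readers who want a more concrete picture of the three checks above, here is a minimal Python sketch of a memory store in which everything saved about a user can be listed, corrected, and deleted. The class `TransparentMemory` and its method names are hypothetical, invented for illustration; they do not describe SAM's or any other platform's actual API.

```python
from datetime import datetime, timezone


class TransparentMemory:
    """Hypothetical memory store: every stored item is viewable, editable, deletable."""

    def __init__(self):
        self._entries = {}   # entry id -> {"text": ..., "created_at": ...}
        self._next_id = 1

    def remember(self, text):
        """Store one piece of information and return its id."""
        entry_id = self._next_id
        self._entries[entry_id] = {
            "text": text,
            "created_at": datetime.now(timezone.utc),
        }
        self._next_id += 1
        return entry_id

    def view_all(self):
        """Check 1: the user can see everything that is stored."""
        return [(entry_id, e["text"]) for entry_id, e in self._entries.items()]

    def edit(self, entry_id, new_text):
        """Check 2a: the user can correct an inaccurate entry."""
        self._entries[entry_id]["text"] = new_text

    def delete(self, entry_id):
        """Check 2b: the user can remove a single entry."""
        del self._entries[entry_id]

    def delete_all(self):
        """Check 2c: the user can wipe everything at once."""
        self._entries.clear()


# Example usage
memory = TransparentMemory()
first = memory.remember("Prefers talking in the evening")
second = memory.remember("Has a sister named Ana")
memory.edit(second, "Has a sister named Anna")
memory.delete(first)
print(memory.view_all())
```

The third check, plain-language data policies, is a documentation question rather than a code one, so it has no counterpart in the sketch.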

Honest design over dependency loops

Responsible platforms don't engineer you toward dependency. Watch for systems that reward constant use without ever encouraging you to reflect or reach out to people in your life. A platform grounded in ethical design principles acknowledges its own limitations clearly and actively supports your broader social health rather than simply maximizing the time you spend inside it. That distinction tells you more about a platform's values than any marketing copy will.

A realistic way forward

The AI and loneliness question doesn't have a single clean answer, and any platform that suggests otherwise isn't being honest with you. What the research does support is that AI companions offer real value when you use them intentionally, choosing reflection and rehearsal over retreat. The risk grows when you stop noticing the difference between a conversation that prepares you for human connection and one that quietly replaces it.

You have more agency in this than the technology does. How you show up to these interactions, what you bring to them and what you expect from them, shapes the outcome more than any algorithm. A well-designed AI companion gives you a genuine starting point, not a final destination. If you want to explore what that looks like in practice, start a conversation with SAM and see how emotionally responsive, memory-driven AI actually functions as part of a fuller, connected life.