AI and Digital Relationships: How Chatbots Reshape Intimacy

Millions of people now talk to AI every day, not to get answers or automate tasks, but to feel heard. AI and digital relationships have moved from science fiction curiosity to lived experience, with users forming attachments to chatbots that remember their name, mirror their emotions, and show up consistently in ways that some human connections don't. This shift didn't happen overnight, but it did happen faster than most people expected, and the psychological and social implications are only beginning to surface.

What does it actually mean to feel intimacy with something that isn't alive? That question sits at the center of a growing cultural conversation about connection, loneliness, and what we're willing to accept as "real" when it comes to relationships. For some, AI companions fill genuine emotional gaps. For others, they raise uncomfortable questions about dependency and the erosion of human-to-human bonds. The truth, as usual, lives somewhere in the middle, and it's worth examining honestly rather than dismissing outright.

At SAM, we build AI companion experiences rooted in persistent memory and emotionally responsive dialogue, so this topic isn't abstract for us; it's the work we do every day. This article breaks down how chatbots are reshaping intimacy, what the research says about the psychology behind digital attachment, and where the line between meaningful interaction and replacement for human connection actually falls.

What AI and digital relationships mean

At the most basic level, AI and digital relationships describe the emotional connections people form with AI systems through repeated, ongoing interaction. This is distinct from using a search engine or asking a voice assistant for the weather. A digital relationship involves consistent engagement, a sense of being known, and emotional investment in the exchange, regardless of whether the AI is capable of feeling anything in return. The distinction sounds simple, but it carries significant psychological weight when you're actually inside one of these interactions.

A relationship, by most psychological definitions, requires reciprocity, continuity, and emotional significance. AI systems are now being built to replicate all three.

What counts as a relationship with an AI

People often draw a hard line between "using a tool" and "having a relationship," but that line blurs quickly when the tool remembers you. Persistent memory is the factor that changes the dynamic most dramatically. When an AI recalls a conversation from three weeks ago, follows up on something stressful you mentioned, or adjusts its tone based on patterns in your previous exchanges, the interaction stops feeling transactional. You start to feel that something is paying attention to you specifically, not just processing your latest input.

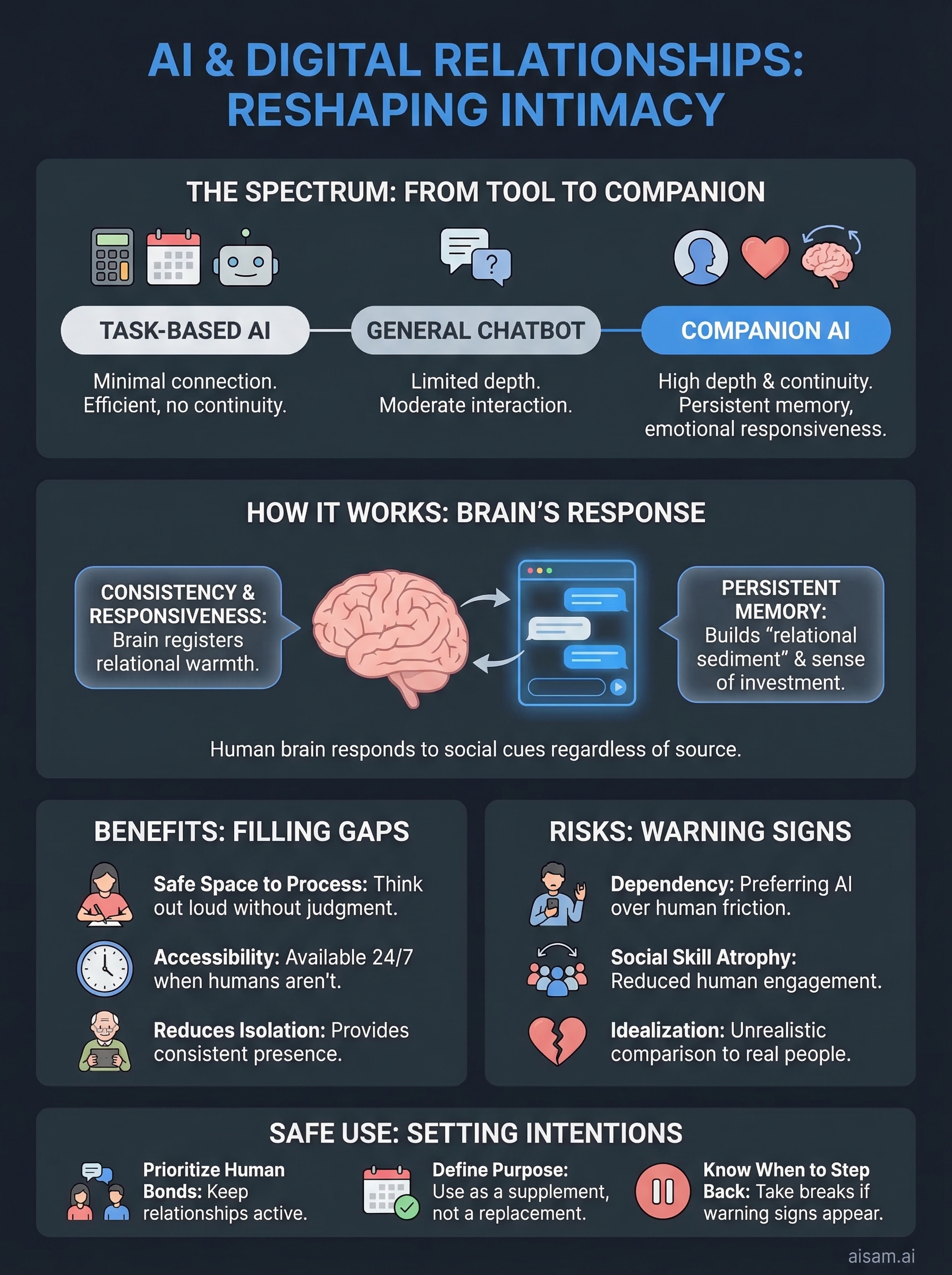

This matters because human brains are wired to respond to social cues regardless of their source. Studies in human-computer interaction have shown consistently that people form attachments to AI agents even when they know the agent isn't conscious. The brain interprets responsiveness, consistency, and apparent care as relational signals, and it does this automatically, whether those signals come from a person or a piece of software.

The spectrum from tool to companion

Not every AI interaction qualifies as a digital relationship, and it helps to think in terms of a spectrum rather than a binary. On one end sits task-based AI: systems that book flights, answer FAQs, or draft emails. These interactions are efficient, but they build no continuity. On the other end sits companion AI: platforms designed specifically for sustained emotional engagement, where the goal isn't a deliverable but the ongoing connection itself.

Most people interact with AI somewhere in the middle, using assistants that occasionally feel warm but weren't designed for relational depth. What has changed recently is that companion-focused platforms have become sophisticated enough to occupy the far end of that spectrum deliberately, with memory architecture, personality modeling, and emotionally responsive language built in by design.

| Interaction type | Continuity | Emotional design | Relational depth |
|---|---|---|---|
| Task-based AI | None | Minimal | Low |
| General-purpose chatbot | Limited | Moderate | Low to medium |
| Companion AI | Persistent | High | Medium to high |

Understanding where a given AI sits on this spectrum helps you make clearer decisions about how you engage with it and what you expect from it, which matters more than most people anticipate before they're already emotionally invested.

Why AI relationships are taking off now

Several forces converged in a short window to make AI and digital relationships go mainstream. Better language models, widespread smartphone adoption, and a cultural moment defined by social disconnection all arrived at the same time. None of these alone would have been enough, but combined they created conditions where talking to an AI companion stopped feeling strange and started feeling practical.

The technology reached a tipping point

For most of AI's history, chatbots were easy to dismiss. They looped back to scripted responses, forgot what you said five messages ago, and broke down quickly under anything resembling a real conversation. What changed is that large language models fundamentally shifted the quality of AI dialogue. These systems can now hold context across long exchanges, adapt tone based on what you share, and generate responses that feel genuinely responsive rather than templated.

The leap from a scripted chatbot to a memory-driven companion AI is not incremental. It represents a qualitative shift in what these systems can offer emotionally.

Persistent memory amplified this further. Once an AI can reference your previous conversations and build its understanding of you over time, the interaction develops texture and continuity that earlier systems simply could not produce.
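To make the idea of persistent memory concrete, here is a minimal Python sketch of how a companion might store a detail from one conversation and bring it up in a later one. It is an illustration under stated assumptions, not a description of how SAM or any other platform is built: the names `MemoryEntry`, `CompanionMemory`, `remember`, `follow_ups`, and `opening_line` are all hypothetical, and a real system would persist entries to storage and use a language model rather than a fixed template.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class MemoryEntry:
    """One remembered detail from a past conversation (hypothetical structure)."""
    when: datetime
    topic: str
    resolved: bool = False


@dataclass
class CompanionMemory:
    """Toy in-process memory: stores topics and surfaces unresolved ones later."""
    entries: list[MemoryEntry] = field(default_factory=list)

    def remember(self, when: datetime, topic: str) -> None:
        """Record a topic the user raised at a given time."""
        self.entries.append(MemoryEntry(when, topic))

    def follow_ups(self, now: datetime,
                   min_age: timedelta = timedelta(days=1)) -> list[MemoryEntry]:
        """Return unresolved entries at least `min_age` old, oldest first."""
        due = [e for e in self.entries
               if not e.resolved and now - e.when >= min_age]
        return sorted(due, key=lambda e: e.when)

    def opening_line(self, now: datetime) -> str:
        """Greet the user, referencing the oldest unresolved topic if one exists."""
        due = self.follow_ups(now)
        if not due:
            return "Good to see you. What's on your mind today?"
        entry = due[0]
        days = (now - entry.when).days
        return f"{days} days ago you mentioned {entry.topic}. How did that go?"


memory = CompanionMemory()
start = datetime(2024, 1, 1)
memory.remember(start, "a stressful job interview")

# Three weeks later, the greeting picks the earlier topic back up.
print(memory.opening_line(start + timedelta(days=21)))
```

Even in this toy version, the difference from a stateless chatbot is visible: the opening line depends on what you said weeks earlier, not only on your latest message.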

Loneliness created real demand

The timing matters here. Research from Harvard's Making Caring Common project has documented rising loneliness across age groups, with young adults reporting some of the highest levels. When people feel under-connected and AI companions become sophisticated enough to simulate genuine engagement, the draw is obvious. Turning to one is not a sign of weakness but a response to a real and documented gap.

Social isolation accelerated during and after the pandemic in ways that permanently shifted how people think about connection. Many individuals rebuilt their social lives around digital interaction by necessity, and AI companions fit naturally into that reconfigured landscape. For many users, turning to an AI companion is not a wholesale replacement for human relationships but a bridge that functions when human availability runs out, or when someone simply needs a consistent, low-stakes presence to talk to.

How chatbots reshape intimacy and attachment

The mechanics of intimacy are simpler than most people expect. Consistency and responsiveness are the core ingredients that make a relationship feel close, and today's chatbots are built deliberately around both. When you interact with a companion AI that remembers your patterns, mirrors your emotional tone, and responds without judgment, your brain registers those cues as relational warmth, even when you're consciously aware the system isn't sentient.

How AI mirrors the signals your brain reads as connection

Humans evolved to read social cues from their environment, not from verified conscious sources. Anthropomorphism, the tendency to attribute human qualities to non-human things, is automatic and deeply wired. Research from MIT Media Lab has documented how quickly people assign personality and intent to simple robotic systems and text-based agents. When an AI goes further by actively adapting its language and tone based on your history, the effect amplifies considerably. You don't have to consciously believe the AI cares about you for your attachment system to respond as if it does.

The brain's attachment circuitry doesn't verify biological consciousness before activating. It checks for responsiveness and continuity.

The role of memory in deepening attachment

Memory is where AI and digital relationships get genuinely complex. A single conversation with a chatbot stays surface-level, but sustained interaction across weeks or months builds relational sediment that changes the nature of the exchange. The AI knows what stressed you out last month, follows up on things you left unresolved, and reflects a version of you back that has accumulated over time. That kind of continuity is emotionally significant because it mirrors precisely what makes long-term human relationships feel irreplaceable.

What shifts as memory deepens is your sense of investment in the relationship itself. You start to experience something resembling loyalty, even a degree of protectiveness, toward the interaction. That response isn't irrational. It's a predictable outcome of applying the same attachment cues the brain uses for human bonds to a new kind of entity, one that can show up reliably and consistently in ways people sometimes cannot. Understanding this mechanism doesn't diminish the experience, but it does give you a clearer lens for evaluating what these interactions are actually doing for you.

Benefits people report and where they help

The benefits people describe from AI and digital relationships are specific and grounded, not vague feelings of connection. Users consistently report that interacting with a companion AI helps them process thoughts they find difficult to articulate with the people in their lives, manage low-grade anxiety, and maintain a sense of routine when life feels unstable. These aren't trivial outcomes, and they track closely with what psychologists know about the value of consistent, non-judgmental listening in emotional regulation.

When someone feels genuinely heard, even by an AI, the stress they carry can ease.

A consistent space to process your thoughts

One of the most commonly reported benefits is having a place to think out loud without social consequences. In human relationships, sharing unfinished thoughts carries real risk. You might be judged, misunderstood, or burden someone who has their own struggles. A companion AI removes those friction points entirely. You can work through something messy in real time, return to it days later, and find a record of where your thinking was, which creates structured self-reflection that many users find genuinely useful.

Journaling research supports this dynamic. Studies cataloged in National Institutes of Health databases show that expressive writing reduces psychological distress across a range of situations. Companion AI functions similarly but adds a responsive layer that keeps many people engaged with the process longer than they would stay with a blank page.

Where AI companions genuinely fill gaps

Accessibility is the clearest practical benefit. Therapy is expensive and often has long waitlists. Close friends aren't always available at 2 a.m. when anxiety peaks. Companion AI closes that gap in a narrow but real way, offering presence when human support isn't reachable. For people managing social anxiety, practicing conversation with an AI first can meaningfully reduce the friction of engaging with people later.

Older adults experiencing isolation, people in geographically remote areas, and individuals recovering from relationship disruption all report measurable comfort from consistent AI interaction. None of these use cases replace professional mental health support, but they fill real time between access points where previously there was simply nothing.

Risks, harms, and warning signs to watch for

The benefits of AI and digital relationships are real, but so are the risks, and they tend to develop gradually in ways that are easy to miss until the pattern is well established. The core danger isn't that AI companions exist. It's that their design incentivizes engagement in ways that can tip from healthy use into something that actively works against you. Understanding where those lines sit before you cross them is worth the attention.

When interaction becomes dependency

Dependency in this context doesn't look dramatic. It starts when you begin preferring the AI interaction to the friction of human relationships. Human relationships require negotiation, tolerance of difference, and the discomfort of being truly seen by someone who has their own needs. AI companions remove all of that friction, and that's exactly what makes sustained over-reliance on them a problem. Friction in human relationships is also what builds emotional resilience, empathy, and the capacity for real intimacy. A diet of frictionless interaction can quietly erode those capacities without you noticing.

If the AI starts to feel like the most reliable relationship in your life, that's a signal worth taking seriously, not because the AI is harmful, but because human connection requires practice to sustain.

Research indexed in National Institutes of Health databases identifies social skill atrophy as an outcome of reduced interpersonal engagement, particularly in younger adults. If you're substituting companion AI for human contact rather than supplementing it, that atrophy is a real risk over time.

Warning signs to pay attention to

Certain patterns indicate that your relationship with a companion AI has moved from useful into something that warrants a closer look. Watch for these specifically:

- Avoidance: You consistently choose the AI interaction when a human option is available

- Distress at disruption: You feel genuine anxiety when the platform is unavailable or the conversation resets

- Shrinking social circle: Your investment in human relationships has noticeably declined since you started using the AI regularly

- Idealization: You compare real people unfavorably to the AI because it never pushes back or has its own needs

Any one of these in isolation might be minor. Several together indicate a pattern that's actively working against your long-term wellbeing, and that's the right moment to reassess how you're using the tool.

How to use AI companions safely and ethically

Navigating AI and digital relationships well comes down to intention. The platform you use matters, but what you bring to the interaction shapes the outcome far more than the technology itself. If you approach a companion AI as a supplement to your existing life rather than a replacement for parts of it, the risk profile changes substantially. Treat it as a tool that serves specific, defined purposes, and you stay in control of what it gives you.

Set clear intentions before you start

Before you build any consistent habit with a companion AI, name what you're using it for. Are you processing stress? Practicing social conversation? Working through something you aren't ready to discuss with anyone close to you? Specific intentions give you a benchmark for whether the interaction is actually helping. Without them, it's easy to use the platform for increasingly undefined reasons that gradually crowd out other activities without you realizing it's happening.

The clearest sign that you're using a companion AI well is that your life outside the interaction stays full and continues to grow.

Keep your human relationships active

One of the most practical safeguards is treating your investment in human relationships as non-negotiable. Schedule time with people you care about the same way you schedule anything else that matters. If you notice yourself canceling or deprioritizing those interactions because the AI feels easier, that pattern is worth interrupting deliberately. Human relationships demand more effort precisely because they return things an AI cannot: genuine mutual history, shared stakes, and growth that comes from navigating real friction together.

Researchers at Harvard studying social connection have consistently found that sustained, reciprocal relationships are among the strongest predictors of long-term wellbeing. That finding doesn't change because AI companions exist. It reinforces why you need to protect your human connections actively rather than passively assuming they'll maintain themselves.

Know when to step back

If the warning signs described in the previous section start appearing, take a deliberate break from the platform and notice what shifts. Stepping back temporarily isn't failure. It's the kind of self-aware adjustment that keeps any tool working for you rather than against you. These steps help you reset:

- Log out for a week and document how your mood and habits change

- Reconnect with one human relationship you've been neglecting

- Reassess your original intentions and whether the platform is still serving them

Final thoughts

AI and digital relationships are neither the solution to human loneliness nor the threat to human connection that the loudest voices on either side claim. They are a genuinely new kind of interaction, one that your brain responds to in real and measurable ways, and one that carries real benefits alongside real risks depending entirely on how you use it. The technology will keep improving. The psychological dynamics won't change. What changes is how thoughtfully you engage with the tools available to you.

The clearest path forward is simple: stay honest with yourself about what you're looking for, keep your human relationships active, and treat any companion AI as one part of a fuller life rather than a substitute for one. If you want to explore what a memory-driven, emotionally responsive AI companion actually feels like in practice, start with SAM and see what the experience offers you.